Design for Real-Time Control: Embedded Computing on Multicore Processors

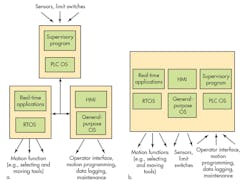

Examine a computer-controlled machine built in the last 20 years and you’ll note a common design practice. The machine’s real-time control functions, its vision system (if there is one), and its human interface are all on totally separate computing platforms.

Today, machine designers are increasingly getting away from this kind of computing topology. They are consolidating the functions once spread among several processing units onto one computing platform. This approach eliminates multiple components such as power supplies, memories, and chassis while cutting the cost of manufacturing and engineering development.


As an example, consider a CNC machine making metal parts. Traditionally, separate computing elements would handle its functions: a motion system, a human interface/general-purpose processor, and a supervisory programmable logic controller (PLC). Combining these workloads onto a single multicore platform can potentially save a lot of development time and reduce the cost of the machine.

The consolidation of computing tasks also makes better use of new multicore processor chips by distributing processing functions among available cores. Configuring machines this way also lets OEMs provide new cost/performance options by adjusting the number of cores on the machine’s processing platform. Thus, OEMs may be able to add features such as security applications and machine-to-machine (M2M) connectivity simply by substituting a processor chip that includes more cores without any hardware redesign.

But this consolidation of processing tasks poses challenges. The processing platform must have an operating environment that supports a wide variety of workloads. For example, part of the workload consists of functions that require determinism: predictable response times to real-time events (e.g., switch closures or ticks of a time clock). Other tasks are less time-critical, such as responding to inputs from human operators or handling enterprise-level data acquisition and logging, both of which have wide time windows.
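One way to picture this mix is to tag each task with its worst-case allowed response time and split the set into work the RTOS must host and work a general-purpose OS can absorb. The sketch below is illustrative only: the 1 ms cutoff, the `Task` type, and the example task names and deadlines are hypothetical, not figures from any particular machine.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical cutoff: tasks that must respond within 1 ms are treated as
# hard real-time and kept on the RTOS side. A real design derives this from
# the machine's control requirements, not from a fixed constant.
HARD_RT_DEADLINE_US = 1_000


@dataclass(frozen=True)
class Task:
    name: str
    deadline_us: int  # worst-case allowed response time, in microseconds


def partition_by_deadline(tasks: list[Task]) -> tuple[list[str], list[str]]:
    """Split tasks into (RTOS-hosted, general-purpose-OS-hosted) name lists."""
    rtos: list[str] = []
    gpos: list[str] = []
    for t in tasks:
        (rtos if t.deadline_us <= HARD_RT_DEADLINE_US else gpos).append(t.name)
    return rtos, gpos


if __name__ == "__main__":
    workload = [
        Task("servo_loop", 250),          # tick-driven motion control
        Task("limit_switch", 100),        # switch closure must stop the axis
        Task("operator_hmi", 100_000),    # human-scale response is adequate
        Task("data_logging", 1_000_000),  # enterprise logging, wide window
    ]
    rtos, gpos = partition_by_deadline(workload)
    print("RTOS:", rtos)
    print("GPOS:", gpos)
```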

Moreover, processor consolidation can only be financially attractive if it supports legacy software without a lot of recoding.

Other issues relate to a need for different operating systems (OSs). A multicore processor handling a mix of tasks once divided among multiple processors may need to run different OSs simultaneously, such as Windows or Linux for general-purpose processing and a real-time OS (RTOS) to handle machine control. (Microsoft Windows takes advantage of multiple processor cores, but it cannot support hard real-time applications.)

Running several OSs on one platform can cause problems. Standard OSs such as Windows and Linux typically are not designed to share a platform with other OSs. Each assumes it has complete control of the underlying hardware, so conflicts arise when two OSs try to access shared resources simultaneously.

So, one can’t simply load a handful of OS copies onto a multicore processor chip without building in a way to avoid conflicts. The solution is to employ a technique that makes each OS “think” it has complete control. In reality, special software sets up the system and intervenes when necessary to avoid conflicts. This is what virtualization is about.
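The idea of letting each OS "think" it owns the hardware can be modeled in a few lines. In this deliberately simplified sketch, a hypothetical `ToyHypervisor` assigns each device to one guest; accesses from the owner pass through to the "physical" device, while accesses from any other guest are trapped and redirected to a private shadow copy. Real hypervisors do this with processor virtualization features that trap I/O and memory accesses, not with Python dictionaries; the class, guest, and device names here are assumptions for illustration.

```python
from __future__ import annotations


class ToyHypervisor:
    """Mediates device access so two guests never drive the same device."""

    def __init__(self, assignments: dict[str, str]) -> None:
        # device name -> guest that owns the physical device
        self.assignments = assignments
        self.physical: dict[str, int] = {dev: 0 for dev in assignments}
        # per-guest shadow state for devices the guest does not own
        self.shadow: dict[tuple[str, str], int] = {}

    def write(self, guest: str, device: str, value: int) -> None:
        if self.assignments.get(device) == guest:
            self.physical[device] = value         # owner: pass through
        else:
            self.shadow[(guest, device)] = value  # trapped: emulate privately

    def read(self, guest: str, device: str) -> int:
        if self.assignments.get(device) == guest:
            return self.physical[device]
        return self.shadow.get((guest, device), 0)


if __name__ == "__main__":
    hv = ToyHypervisor({"timer": "rtos", "uart": "windows"})
    hv.write("rtos", "timer", 1000)   # RTOS programs the real timer
    hv.write("windows", "timer", 5)   # Windows believes it did the same
    print(hv.physical["timer"], hv.read("windows", "timer"))
```

Neither guest sees the other's writes, which is the property that keeps two unmodified OSs from colliding on shared hardware.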

Virtualization Techniques

The need to run different software environments simultaneously on the same platform motivates all forms of virtualization. But the term “virtualization” gets used so loosely that many engineers don’t appreciate the technical issues behind specific implementations. The fact is that not all virtualization approaches will work for machine control.

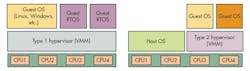

Virtualization generally takes place through software called a hypervisor. Hypervisors are software layers that run underneath OSs and create multiple "virtual machines" (VMs). Guest operating environments run on these VMs, and the hypervisor controls access to the physical resources of the processor so the guests don't conflict with one another.

A hypervisor can provide standard communications services (TCP/IP and COM) between guests. This eases the integration of different applications and OSs that would otherwise require shared-memory approaches, which can be relatively complicated.
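To see why shared-memory approaches take more work, consider the bookkeeping two guests need just to pass messages through a common memory window. A minimal sketch, assuming a single producer and a single consumer, is a ring buffer like the hypothetical `SpscRing` below. In a real system the slots would live in memory mapped into both guests, and the head/tail updates would need memory barriers; Python hides those details, so treat this only as an outline of the logic that a hypervisor-provided TCP/IP or COM link lets applications avoid.

```python
from __future__ import annotations


class SpscRing:
    """Single-producer/single-consumer ring buffer over a fixed-size region."""

    def __init__(self, capacity: int) -> None:
        # One slot stays unused so that head == tail always means "empty".
        self.slots: list[bytes | None] = [None] * (capacity + 1)
        self.head = 0  # next slot to read; only the consumer advances it
        self.tail = 0  # next slot to write; only the producer advances it

    def push(self, msg: bytes) -> bool:
        nxt = (self.tail + 1) % len(self.slots)
        if nxt == self.head:
            return False  # full: the producer must retry or drop the message
        self.slots[self.tail] = msg
        self.tail = nxt
        return True

    def pop(self) -> bytes | None:
        if self.head == self.tail:
            return None  # empty
        msg = self.slots[self.head]
        self.slots[self.head] = None
        self.head = (self.head + 1) % len(self.slots)
        return msg


if __name__ == "__main__":
    ring = SpscRing(capacity=4)
    ring.push(b"axis 1 at home")
    print(ring.pop())
```

Full/empty detection, wraparound, and ownership of each index are exactly the details that are easy to get wrong across two OSs, which is why a standard virtual network or serial link is often the simpler integration path.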

Though hypervisor technology can consolidate software environments, not all hypervisors work the same way. Variations can force trade-offs in adaptability and cost. Also, some software architecture approaches support real-time control better than others.

Approaches to virtualization generally get categorized as either Type 1 or Type 2. Type 2 hypervisors and full virtual-machine manager (VMM) solutions include VirtualBox and VMware. Typically, they run on top of a conventional host OS. They model each of the underlying processors in software and control the execution of tasks and I/O access so guest OSs can't conflict with each other.

These hypervisors were designed for and are generally applied to IT-type problems such as letting multiple database or security programs reside on the same server. Such applications don’t need deterministic execution in the guest operating environment.

Type 1 "bare-metal" hypervisors run directly on the processor hardware and don't rely on a host for services. Hypervisors in this category include KVM and Hyper-V. Similar to full VMMs, they provide a complete PC operating environment, making them easy to use. But using a Type 1 hypervisor does not guarantee determinism; these hypervisors are not designed to respond to real-time events in a predictable amount of time. That lack of real-time responsiveness disqualifies them from use in embedded-control applications.

However, some Type 1 hypervisors are deterministic and can support real-time processing. They follow the Type 1 approach but also provide what is called guest-to-core affinity: a guest is bound to specific processor cores, so real-time software running on a guest RTOS can interface directly with the underlying processor hardware. Virtualized services are provided only where absolutely needed.
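Guest-to-core affinity is configured inside the hypervisor rather than from application code, so there is no portable API that exposes it directly. As a rough, process-level analogy, Linux lets a program restrict itself to one core, and Python exposes this as `os.sched_setaffinity` (available on Linux and some other Unix systems only). The `pin_to_core` helper below is a hypothetical name used for illustration.

```python
from __future__ import annotations

import os


def pin_to_core(core: int) -> set[int]:
    """Restrict the calling process to a single CPU core (Linux only).

    Returns the affinity mask the kernel reports after the change.
    """
    if not hasattr(os, "sched_setaffinity"):
        raise NotImplementedError("CPU affinity API not available on this OS")
    os.sched_setaffinity(0, {core})  # pid 0 means "the calling process"
    return os.sched_getaffinity(0)


if __name__ == "__main__" and hasattr(os, "sched_getaffinity"):
    allowed = sorted(os.sched_getaffinity(0))
    target = allowed[-1]  # e.g., use the highest-numbered allowed core
    print("pinned to", pin_to_core(target))
```

The analogy is loose: a pinned process still runs under the general-purpose OS scheduler and still shares interrupts and devices with everything else on the system. Guest-to-core affinity in an embedded hypervisor goes further by handing the core, and the hardware the RTOS needs, to the real-time guest.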

This type of hypervisor supports a special type of virtualization that the real-time computing community has come to call "embedded virtualization." It refers to an operating environment that lets real-time software and non-time-deterministic applications run simultaneously on the same machine.

An optimal embedded virtualization approach ensures the real-time guest retains its deterministic capabilities. When properly implemented, it also supports legacy RTOSs and general or proprietary OSs without the need for modifying their code.

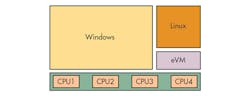

One hypervisor in this category, TenAsys’ HaRTH, is a hard real-time hypervisor running inside the company’s INtime RTOS. Here, the term "hard real-time" typically applies to control functions where decisions must be made reliably in a matter of microseconds, compared to control applications where millisecond-level responsiveness is adequate. When HaRTH is configured to support the hosting of a single guest alongside Windows, the resulting product is called eVM for Windows.
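The gap between microsecond-level and millisecond-level responsiveness shows up when you measure how late a periodic loop actually wakes relative to its schedule. The sketch below, with a hypothetical `measure_jitter` helper and an assumed 1 ms period, records that lateness for each cycle. Python on a general-purpose OS is not real-time, so the numbers it prints describe the host's scheduling behavior rather than what an RTOS would achieve; the point is the measurement, not the result.

```python
import time


def measure_jitter(period_s: float, cycles: int) -> list[float]:
    """Run a periodic loop and return each cycle's wake-up lateness in µs."""
    lateness_us: list[float] = []
    next_wake = time.perf_counter() + period_s
    for _ in range(cycles):
        # Sleep until the scheduled start of the next cycle.
        delay = next_wake - time.perf_counter()
        if delay > 0:
            time.sleep(delay)
        lateness_us.append((time.perf_counter() - next_wake) * 1e6)
        # Advance on an absolute schedule so timing errors don't accumulate.
        next_wake += period_s
    return lateness_us


if __name__ == "__main__":
    samples = measure_jitter(period_s=0.001, cycles=1000)
    print(f"max lateness: {max(samples):.0f} us, "
          f"mean: {sum(samples) / len(samples):.0f} us")
```

For hard real-time control, the worst case (the maximum) matters far more than the average, because a single late response can be a missed control decision.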

Most other embedded hypervisors are built to support a narrower set of guests. They use a technique called para-virtualization to simplify the services they must provide: the guest OSs must be modified to use proprietary interfaces to the hardware they run on.

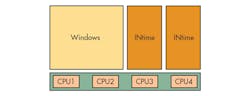

Para-virtualization is an effective way to improve some types of guest operations. But because each guest must be modified, it limits how well the hypervisor can accommodate unmodified guests, which most often are proprietary RTOSs and general-purpose OSs. Para-virtual hypervisors provide typical partitioning services on hard disks and offer core affinity, so a given process or code thread executes only on a specific core. Yet para-virtualization requires the guest to cooperate directly with the hypervisor on which it runs.
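The cost of para-virtualization is easiest to see at the driver level. In the sketch below, a native guest driver writes a timer "register" directly, while a para-virtualized version of the same driver must be rewritten to call the hypervisor through a hypercall interface. All of the names here (`NativeTimer`, `ParavirtTimer`, `hypercall`, `"set_timer"`, `RecordingHypervisor`) are hypothetical stand-ins, not any vendor's actual interface.

```python
from __future__ import annotations

from typing import Protocol


class Hypervisor(Protocol):
    def hypercall(self, op: str, *args: int) -> int: ...


class NativeTimer:
    """Unmodified guest driver: programs the timer register directly."""

    def __init__(self) -> None:
        self.register = 0

    def set_period(self, ticks: int) -> None:
        self.register = ticks  # direct hardware access


class ParavirtTimer:
    """Modified guest driver: asks the hypervisor to program the timer."""

    def __init__(self, hv: Hypervisor) -> None:
        self.hv = hv

    def set_period(self, ticks: int) -> None:
        self.hv.hypercall("set_timer", ticks)  # hypervisor-specific interface


class RecordingHypervisor:
    """Stand-in hypervisor that just logs the hypercalls it receives."""

    def __init__(self) -> None:
        self.calls: list[tuple[str, tuple[int, ...]]] = []

    def hypercall(self, op: str, *args: int) -> int:
        self.calls.append((op, args))
        return 0


if __name__ == "__main__":
    hv = RecordingHypervisor()
    ParavirtTimer(hv).set_period(500)
    print(hv.calls)
```

Every driver that touches virtualized hardware needs this kind of change, which is straightforward when the guest's source is available and impossible when it is a closed, proprietary OS.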

The multi-OS environments that run the quickest employ explicit hardware partitioning. In a Windows-based system, this partitioning process involves modifying the base RTOS to work with the Windows environment on the same platform. Partitioning takes place explicitly with the help of standard Windows application program interfaces (APIs).

Both real-time and non-real-time environments run natively on their associated CPUs. Virtualization issues don't affect either OS because no hypervisor runs when hardware events occur. And because Windows runs natively, there is no violation of the Microsoft licensing restrictions that apply when embedded versions (e.g., Windows Embedded Standard 7) run on a virtualized platform.
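Explicit partitioning means deciding up front which cores, memory, and interrupts belong to each OS, and making sure those sets never overlap. The sketch below describes a hypothetical layout with a `Partition` record and checks it for conflicts once, at setup time; nothing in it runs when hardware events occur, which mirrors why this approach avoids hypervisor overhead. The core numbers, address ranges, and IRQ numbers are made-up examples, and the actual product configures partitions through Windows APIs and a modified RTOS, not through code like this.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Partition:
    os_name: str
    cores: set[int]
    memory: tuple[int, int]  # [start, end) physical address range
    irqs: set[int] = field(default_factory=set)


def check_partitions(parts: list[Partition]) -> list[str]:
    """Return a list of conflicts; an empty list means the split is clean."""
    problems: list[str] = []
    for i, a in enumerate(parts):
        for b in parts[i + 1:]:
            pair = f"{a.os_name}/{b.os_name}"
            if a.cores & b.cores:
                problems.append(f"{pair} share cores {sorted(a.cores & b.cores)}")
            # Half-open ranges overlap if each starts before the other ends.
            if a.memory[0] < b.memory[1] and b.memory[0] < a.memory[1]:
                problems.append(f"{pair} overlap in memory")
            if a.irqs & b.irqs:
                problems.append(f"{pair} share IRQs {sorted(a.irqs & b.irqs)}")
    return problems


if __name__ == "__main__":
    layout = [
        Partition("Windows", {0, 1, 2}, (0x0000_0000, 0x8000_0000), {1, 4}),
        Partition("RTOS", {3}, (0x8000_0000, 0x9000_0000), {11}),
    ]
    print(check_partitions(layout) or "no conflicts")
```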

Understanding Trade-offs

The choice of hypervisor involves trade-offs among performance, extensibility, flexibility, ease of use, and the amount of legacy content you want to preserve. The use of a hypervisor is advisable in situations that can benefit from best-in-class software on multiple OS environments.

Hypervisors can handle multiple guests of different types. This ability comes in handy when adding network connectivity, because it lets designers balance the needs of deeply embedded systems with new capabilities delivered via Internet connections. For example, enhanced system security and simplified user interfaces can be added while keeping software development costs and time to market down.

All in all, a para-virtualized solution (requiring modifications to guest software) could cause problems if the machine must evolve. New software emerges continuously, and future versions of products could benefit from processors with more cores; each new or upgraded guest would have to be modified again to run on the hypervisor.

The requirement for preserving legacy code is another issue. The problem of reliably supporting legacy content has forced many OEMs to delay their use of modern multicore processors. On the other hand, sometimes porting an application from a legacy environment to a new one is acceptable. In that case, the best-performing approach entails special programming to explicitly partition the hardware between general-purpose OSs and RTOSs. Done in familiar programming environments, this approach can also save substantial development costs.

Different solutions provide different levels of support for multicore processing. Some are more complicated than others. Goals and priorities dictate which approach is best.

About the Author

Kim Hartman

TenAsys Corp. Vice President Kim Hartman has served the embedded market with hardware analysis tools and RTOS products for 30 years, first at Tektronix and then at RadiSys Corp., before co-founding TenAsys Corp. in 2000. He is a computer engineering graduate of the University of Illinois at Urbana-Champaign, and received his MBA from Northern Illinois University.