I’ve written before about my feeling that augmented reality (AR) is the next great futuristic tool for engineers. AR gives engineers the ability to visualize data while working with their product or assembly onsite. There have been several notable advancements in this tech, what with the increased use of HoloLens and the development of voice-controlled AR headsets.

As a self-professed comic book nerd, what I'm really looking for is Tony Stark/Iron Man-level integration technology. And recently, at Solidworks World, I found the closest thing yet to that reality in the form of Meta AR.

The Meta 2 is Meta's updated headset, which allows users to interact directly with their models. It has an increased field of view, enabling users to visualize designs, models, and data across a variety of scales (including full-scale).

What is Meta AR?

The first Meta AR prototype was developed at Columbia University by founder and CEO Meron Gribetz. He attached an off-the-shelf sensor bar to a see-through head-mounted display, combining see-through optics, sensor tracking, and gestural interactions. After a successful Kickstarter campaign in 2013, the Meta 1 headset was created.

The Meta 2, which I experienced at Solidworks World, has an optical engine with a 90-deg. field of view and a resolution of 2,560 × 1,440.

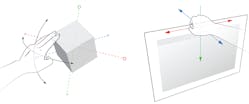

The Meta 2's optical engine overlays the highest-resolution stereoscopic 3D images on the physical world at 20 pixels per degree, sharp enough to keep text readable. Meta's computer vision relies on Simultaneous Localization and Mapping, or SLAM, algorithms. These give the headset real-time tracking and mapping of the user's surroundings, so content and holograms stay locked in place in 3D space. The headset also features an intuitive, gesture-based hand-tracking system that lets users interact with holographic content in a natural way.
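For readers curious what "locking" a hologram in 3D space involves: the hologram is stored once in world coordinates, and every frame it is re-expressed relative to the headset pose that SLAM estimates, so it appears to stay put as you move. The Python sketch below is a simplified illustration, not Meta's code. It assumes a pose made of a position plus a yaw angle only (real trackers report a full 3D orientation), and the function name `world_to_head` is hypothetical.

```python
import math


def world_to_head(point, head_pos, head_yaw):
    """Express a world-space point in the headset's coordinate frame.

    Simplified pose (assumption): head_pos is (x, y, z) in metres and
    head_yaw is the headset's rotation about the vertical y axis, in
    radians. A real SLAM system would supply a full 3D rotation.
    """
    # Translate so the headset sits at the origin.
    dx = point[0] - head_pos[0]
    dy = point[1] - head_pos[1]
    dz = point[2] - head_pos[2]
    # Undo the headset's yaw: rotate the offset by -head_yaw about y.
    c, s = math.cos(-head_yaw), math.sin(-head_yaw)
    return (c * dx + s * dz, dy, -s * dx + c * dz)


# Hypothetical hologram anchored 2 m in front of the starting position
# (this sketch treats -z as "forward" and +x as "right").
hologram = (0.0, 1.0, -2.0)
for head_pos, yaw in [((0.0, 1.6, 0.0), 0.0),           # standing still
                      ((1.0, 1.6, 0.0), 0.0),           # stepped 1 m right
                      ((0.0, 1.6, 0.0), math.pi / 2)]:  # turned 90 deg left
    # The world anchor never changes; only its head-relative position does.
    print(world_to_head(hologram, head_pos, yaw))
```

Stepping right moves the hologram toward the viewer's left, and turning left swings it to the viewer's right, which is exactly what a world-locked object should do.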

At Solidworks World, Meta AR announced that it is the first company to offer 3D CAD viewing capabilities in AR for Solidworks. With the Solidworks "Publish to Xtended Reality" capability, users can export a CAD model to glTF, an open format that the Meta 2 uses.

The exported file retains key information:

- Display states

- Materials/colors

- Animations (such as exploded-view animations and motion studies)

- 3D model hierarchy
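Because glTF 2.0 is JSON-based, the hierarchy and materials listed above can be inspected with nothing more than a JSON parser. The sketch below builds a deliberately skeletal glTF document for a hypothetical motorcycle assembly (the node and material names are invented, and geometry, buffers, and animation data are omitted) and prints its node tree. It is not output from the Solidworks exporter, only an illustration of how glTF stores a hierarchy: each node lists the indices of its children, and each scene lists its root nodes.

```python
import json

# Skeletal glTF 2.0 document (hypothetical names; no meshes, buffers,
# or animations). "asset" with a "version" is required at the top level.
GLTF_TEXT = json.dumps({
    "asset": {"version": "2.0"},
    "scene": 0,
    "scenes": [{"nodes": [0]}],
    "nodes": [
        {"name": "motorcycle", "children": [1, 2]},
        {"name": "frame"},
        {"name": "engine", "children": [3]},
        {"name": "piston"},
    ],
    "materials": [
        {"name": "red_paint",
         "pbrMetallicRoughness": {"baseColorFactor": [0.8, 0.1, 0.1, 1.0]}},
    ],
})


def node_tree(gltf, index, depth=0):
    """Return indented names for a node and, recursively, its children."""
    node = gltf["nodes"][index]
    lines = ["  " * depth + node.get("name", f"node{index}")]
    for child in node.get("children", []):
        lines.extend(node_tree(gltf, child, depth + 1))
    return lines


gltf = json.loads(GLTF_TEXT)
scene = gltf["scenes"][gltf.get("scene", 0)]
for root in scene["nodes"]:
    print("\n".join(node_tree(gltf, root)))
# → motorcycle / frame / engine / piston, indented by depth
```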

AR integration between Solidworks and Meta enables simpler, more natural design visualization.

Test Drive of the Meta AR

David Gene Oh, head of developer relations at Meta Co., let me take the Meta 2 for a test drive. Once you put the headset on, the first thing you'll notice is the impressive field of view; other headsets tend to block peripheral vision. The interactive features feel very natural. Hand gestures (e.g., opening and closing a hand) let users select which model to display or choose among control options.
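One common way to turn "opening and closing a hand" into a select event is to watch a per-frame measure of how open the hand is and fire when it crosses a threshold. The sketch below assumes a hypothetical `openness` value between 0.0 (fist) and 1.0 (flat hand); Meta's actual tracking signals and thresholds are not described in this article. Using two thresholds (hysteresis) keeps tracking jitter around a single cutoff from firing repeated grabs.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class GrabDetector:
    """Turn a stream of hand-openness values into grab/release events.

    Hypothetical thresholds: the hand counts as closed below close_at
    and as open again only above open_at (the gap is the hysteresis band).
    """
    close_at: float = 0.3
    open_at: float = 0.7
    closed: bool = False

    def update(self, openness: float) -> Optional[str]:
        """Feed one frame; return "grab", "release", or None."""
        if not self.closed and openness < self.close_at:
            self.closed = True
            return "grab"
        if self.closed and openness > self.open_at:
            self.closed = False
            return "release"
        return None


detector = GrabDetector()
frames = [0.9, 0.5, 0.25, 0.35, 0.2, 0.6, 0.8]  # made-up openness samples
events = [detector.update(v) for v in frames]
print(events)  # → [None, None, 'grab', None, None, None, 'release']
```

With a single cutoff at 0.3, the wobble from 0.25 to 0.35 and back to 0.2 would have produced a spurious release and a second grab; the hysteresis band absorbs it.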

The motorcycle and human heart models impressed me the most. With the motorcycle, you could view the internals of the bike, change exterior features (such as color), view it at full scale, and walk around it. The heart model was functional: I could enlarge it by grabbing it with both hands and simply pulling them apart. The heart's pumping action was also visible in a cut view, providing an internal view that a medical professional would find useful.
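Pull-apart scaling of the kind used on the heart model is often implemented by comparing the current distance between the hands with the distance when the two-handed grab began. The sketch below is illustrative only: the hand positions (in metres), the function name `two_hand_scale`, and the clamp limits are all hypothetical and not taken from Meta's SDK.

```python
import math


def two_hand_scale(start_left, start_right, left, right,
                   start_scale=1.0, min_scale=0.1, max_scale=10.0):
    """Scale factor for a two-handed pull-apart gesture.

    The model's scale follows the ratio of the current hand separation
    to the separation when the grab started, clamped to a range
    (hypothetical limits) so a tracking glitch cannot blow it up.
    """
    start_gap = math.dist(start_left, start_right)
    if start_gap == 0.0:
        return start_scale  # degenerate start; leave the scale unchanged
    ratio = math.dist(left, right) / start_gap
    return max(min_scale, min(max_scale, start_scale * ratio))


# Hands start 0.2 m apart and are pulled to 0.4 m apart: scale roughly doubles.
print(two_hand_scale((0.0, 1.2, -0.4), (0.2, 1.2, -0.4),
                     (-0.1, 1.2, -0.4), (0.3, 1.2, -0.4)))
```

Measuring against the separation at the start of the grab, rather than frame to frame, keeps small tracking errors from accumulating over a long gesture.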

David Gene Oh, head of developer relations at Meta Co., demonstrates how to interact with the models viewed through the headset. While we see an empty table, David sees the model, which is also displayed on the computer behind him.

For a designer, the ability to import CAD models directly into the AR interface is extremely useful. Previously, bringing a model into AR required model prep before exporting it to the headset, but now, if you can render it, you can display it on Meta AR. The only limitation is that the headset needs a cable connection to a sufficiently powerful computer to render the high-resolution images. Meta AR made this choice deliberately: its customers cared most about model clarity and did not want to compromise on field of view or graphics.

This limitation reflects the current state of AR and VR headsets in general. Wireless headsets offer mobility but are limited in graphics. Still, in my opinion, the future of these headsets is bright. Sooner rather than later, a high-resolution headset like the Meta 2 will go wireless as computing hardware shrinks and faster data transmission, such as 5G, becomes mainstream.
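A rough calculation hints at why tethering is the pragmatic choice for now. Assuming uncompressed 24-bit color and a 60 Hz refresh rate (both are assumptions for illustration; the refresh rate is not a figure quoted in this article), streaming the Meta 2's 2,560 × 1,440 display would take several gigabits per second before any compression:

```python
def raw_video_gbps(width, height, bits_per_pixel=24, refresh_hz=60):
    """Uncompressed video bandwidth in gigabits per second.

    bits_per_pixel and refresh_hz are illustrative assumptions.
    """
    return width * height * bits_per_pixel * refresh_hz / 1e9


print(f"{raw_video_gbps(2560, 1440):.2f} Gbit/s")  # → 5.31 Gbit/s
```

A real wireless link would compress the stream, so this is an upper bound on the raw data rate rather than a hard requirement, but it shows how much data a cable-free high-resolution headset has to move or compress away.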

Initial access to the private beta program will be by invitation only. Those interested in participating can register their interest online.