At the recent Consumer Electronics Show (CES) 2018, augmented reality and virtual reality headsets stood out as some of the star attractions. As reported by Bill Wong, technology editor for our sister brand, Electronic Design:

Apple and Google released AR software development kits (SDKs) for their platforms. Microsoft has also been pushing its Windows Mixed Reality (MR) based on technology developed for its HoloLens. A number of mixed-reality headsets support this software framework, including devices from HP, Dell, Acer, and Samsung.

Mixed reality bridges the augmented and virtual reality spaces by attempting to anchor virtual models in real space. Using input cameras on the headset, mixed reality brings virtual models into an interactive, augmented real space. The input cameras scan the surrounding environment, which eliminates the need for outside cameras or infrared beacons to track the user's movement.

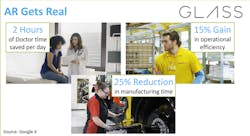

According to CES, Google Glass helped users in the medical and manufacturing industries be more efficient and manufacture products faster.

According to CES’ trends and predictions for 2018, the visual wearable field is expanding. In 2017, Google Glass was a prime example of how headsets can help those in the workplace. In the manufacturing industry, production workers who used Glass saw a 25% reduction in manufacturing time, along with a 15% increase in operational efficiency. The next stage for mixed reality headsets is the introduction of voice assistants. Two headsets stood out to me as the next stage of MR design tools.

The Vuzix Blade is a pair of mixed-reality glasses that will have Amazon’s Alexa built in, offering users a voice assistant they can interact with directly in natural speech.

Vuzix introduced its new MR headset, called Blade, which is powered by Amazon’s voice assistant Alexa. The glasses have a built-in camera, microphone, and side-mounted touchpad. They can pair with iPhones or Android phones and will work with prescription lenses. Blade works as a standalone headset and can be connected to the internet via Wi-Fi, or else through the paired device.

The glasses will mirror notifications and display photos and videos from the paired device. They have a battery life of anywhere from 2 to 12 hours depending on use. The heads-up display is located in only one eye so as not to completely obstruct the wearer’s view. The user can pull up a web browser or take photos, and the glasses have the simple advantage of feeling like a normal pair of glasses. With Alexa, users will be able to speak their commands directly into the headset in normal everyday speech.

Rokid is building upon its hardware from last year’s CES by introducing its AI Melody software into a pair of mixed reality glasses.

The Rokid Glass is another example of voice assistant-enabled eyewear. At last year’s CES, Rokid introduced its voice assistant home device, the Pebble, which used its own artificial intelligence (AI) software called Melody. Now, Rokid has introduced Melody into its MR eyewear to serve as the navigation tool. Rokid Glass will be a standalone headset that does not need to be tethered to another processing device. It will have its own power source and an internal processor, and it will use Bluetooth and Wi-Fi to communicate with a smartphone when it needs additional processing power or an internet connection in areas without a strong local network.

According to Rokid, “[Melody will be] available on all types of IoT devices, including a wearable like [the] Rokid Glass, user-specific data from not just static, but also out-of-home portable devices like wearables can be observed and analyzed for improved and personalized recommendations.”

Companies like Siemens and Rockwell Automation have introduced heads-up displays with MR headsets that allow users to see current data on their operational systems and view CAD models on the factory floor. The limitation I find in using these headsets is that the controls are not refined. They involve awkward hand gestures or very limited vocal commands. With voice assistants and AI built into the headsets, users can interact with them naturally, giving commands clearly and directly in ordinary speech.

I believe that MR headsets are the design tool of the future, especially for users on the factory floor who require on-demand information to make decisions. Before widespread adoption can happen, these headsets need to become smarter so that they actually help users.