Machine Vision Lets People Get Close to Robots

This file type includes high resolution graphics and schematics when applicable.

Proximity sensors, cameras, and software are working together to give manufacturing equipment environmental awareness. By sensing the environment and communicating through software, automation equipment can react and make decisions on its own. Consequently, machines can fit into areas once thought impossible and work closer to human workers than before. This allows for continuous workflow without an internet connection or machine-to-machine communication.

Some automation equipment uses pass/fail programming: once something registers as a “fail,” the machine stops and waits for a worker to correct the problem. A person can see simple failures and fix them, but something as simple as a part in the wrong orientation could stop production. If a machine could replicate this ability to see, the line would stop far less often, saving time and increasing production. In one example, a dynamic single-part flow with a Universal Robots arm used sensing techniques to cut production cycle time from 28 to 17 hr.

One way vision is being added to machines is with vision sensors and smart cameras. The line between them is blurred, but generally, vision sensors tend to be simple packages while smart cameras are more complex and offer more capabilities. For clarity, both are referred to as camera systems in this article.

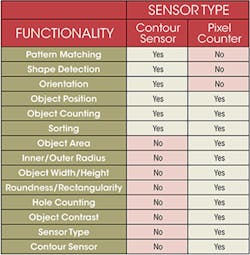

Two techniques for machine vision are contour sensing and pixel counting. When sensing the contour of an object, the camera system inspects the object by analyzing its shape and comparing it to similar known geometries. Pixel counters analyze an object’s area by counting pixels. This technique also indicates shades for part matching, inspection, and orientation. Many pixel-counting camera systems have as few as 0.3 megapixels. Fortunately, many processes do not require high resolution. Even at low resolution, a camera’s 2D image can register darker pixels as shadows or as an alteration in the shape of a part to determine whether the part is tilted or not sitting properly.
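The pixel-counting idea can be sketched in a few lines of Python. This is a minimal illustration only, not any vendor's algorithm: the frames are small hypothetical grayscale grids, and the threshold of 128 and the 10% tolerance are assumed values chosen for the example.

```python
def count_dark_pixels(image, threshold=128):
    """Count pixels whose grayscale value (0-255) is below the threshold."""
    return sum(1 for row in image for value in row if value < threshold)


def part_is_seated(image, expected_dark, tolerance=0.10, threshold=128):
    """Pass if the dark-pixel count is within tolerance of the reference count."""
    dark = count_dark_pixels(image, threshold)
    return abs(dark - expected_dark) <= tolerance * expected_dark


# Hypothetical 4x6 grayscale frames (0 = black, 255 = white).
reference = [
    [255, 255, 255, 255, 255, 255],
    [255,  40,  40,  40,  40, 255],
    [255,  40,  40,  40,  40, 255],
    [255, 255, 255, 255, 255, 255],
]
# A tilted part casts a shadow, which adds darker pixels along one edge.
tilted = [
    [255, 255, 255, 255, 255, 255],
    [255,  40,  40,  40,  40,  90],
    [255,  40,  40,  40,  40,  90],
    [255, 255, 255,  90,  90,  90],
]

expected = count_dark_pixels(reference)      # 8 dark pixels in the reference
print(part_is_seated(reference, expected))   # True
print(part_is_seated(tilted, expected))      # False (13 dark pixels)
```

Because the check is only a count and a comparison, it stays cheap even on a low-resolution sensor, which is one reason 0.3 megapixels can be enough for this kind of inspection.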

Camera images are two-dimensional, offering x and y axes. If a process needs a third dimension, it can be added in several ways. Using multiple cameras is one way to add a third dimension and more capabilities. Some systems use four or more cameras to improve production speeds by validating measurement, tolerance, and other specifications.

Complex geometries are being measured accurately with more than one camera. In one application, for example, steel plates needed dimensional tolerances of 0.002 in. (0.05 mm), and flatness had to be accurate to 0.001 in. (0.03 mm). All values had to be collected on the assembly line and documented. Engineers ended up using four CCIR cameras (Comité Consultatif International des Radiocommunications, a monochrome video standard with 625 lines and a frame rate of 25 frames [50 fields] per second) and an IN-IMAQ camera that located and read bar codes for documentation.

Using multiple cameras takes advantage of angles to derive basic information, such as height, width, radius, and distance between features, through trigonometry and algorithms. Using multiple cameras at different angles is popular with handheld devices and 3D scanners. However, this does not mean single-camera systems are old news.
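As one illustration of the trigonometry involved, the sketch below uses the standard two-camera (stereo) triangulation relation, depth = focal length × baseline / disparity. It assumes two identical, parallel, rectified cameras, which the article does not specify; the function name and the numbers are hypothetical.

```python
def stereo_depth_mm(focal_length_px, baseline_mm, x_left_px, x_right_px):
    """Depth of a feature seen by two parallel, rectified cameras.

    focal_length_px: focal length expressed in pixels (assumed equal for both cameras)
    baseline_mm:     distance between the two camera centers
    x_left_px, x_right_px: horizontal position of the same feature in each image
    """
    disparity_px = x_left_px - x_right_px
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a point in front of the cameras")
    return focal_length_px * baseline_mm / disparity_px


# Hypothetical setup: 800 px focal length, cameras mounted 100 mm apart.
depth = stereo_depth_mm(800, 100, x_left_px=420, x_right_px=380)
print(depth)  # 2000.0 (mm)
```

The closer the feature, the larger the shift between the two images, so depth resolution is best near the cameras and degrades with distance.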

Time of flight uses a single camera to add depth information. The measurement bounces light off the object and calculates the distance by multiplying the speed of light by the measured round-trip time, then dividing the result by two because the light travels to the object and back. This is a common industry practice for adding a third dimension and extra value to single-camera systems.
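The time-of-flight arithmetic is simple enough to show directly. In this sketch the "velocity" is taken to be the speed of light and the "time" is the round-trip time of the light pulse; the function name and the 10 ns example are illustrative assumptions.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458  # speed of light in a vacuum


def tof_distance_m(round_trip_s):
    """Distance to the object from the round-trip time of a light pulse."""
    if round_trip_s < 0:
        raise ValueError("round-trip time cannot be negative")
    # The light travels out and back, so halve the total path length.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2


# A pulse that returns after 10 nanoseconds came from about 1.5 m away.
print(f"{tof_distance_m(10e-9):.3f} m")  # 1.499 m
```

The example also shows why timing precision matters: each nanosecond of round-trip time corresponds to roughly 15 cm of distance.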

Camera systems can cost thousands of dollars, so justifying their use is essential. “We have cameras for many applications that would not be economical with a plug-and-play camera system,” explains one engineering manager from Glidewell Laboratories. “The software is the bulk of the cost. There are open source computer vision libraries, so you don’t need a dedicated software developer on staff. Anyone who knows C++ could use open source software and program an industrial camera, saving thousands of dollars for the company and getting a better return on its investment.”

A pick-and-place operation using Fanuc’s LR Mate 200iD robot teamed with Fanuc’s iRVision was developed to show the value of cameras. The iRVision locates parts in a 2D space. Then, a camera mounted to the tool on the robot’s arm gathers information on orientation and location so the robot can pick and place parts without assistance. A system like this can pick parts regardless of their orientation and place them precisely on an assembly line. This could reduce the need for advanced sensing equipment downstream.

Freeing Up Time

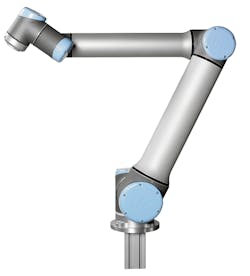

Another application of robots that have machine vision is freeing employees to focus on more complex tasks. At Glidewell Dental, machines milling dental crowns needed to be reloaded every 10 minutes, but with Universal Robots’ UR5 arm that interval has been extended to two hours. The arm also can keep production moving when an error is registered: if a dispenser is empty or jammed, the UR5 will continue working on another crown operation and alert a technician.

For high-speed applications, the frames per second and the speed at which the machine can process pixels need to be considered. This is similar to selecting the frequency and processing speed of a sensor for high-speed applications. Processing speed limits industrial cameras to fewer megapixels; too many pixels will slow the processing to the point where data is unreliable. The demand for higher speeds has led to faster cameras built on complementary metal-oxide-semiconductor (CMOS) sensors rather than charge-coupled devices (CCDs). CMOS tends to feature lower resolution and faster speeds with low sensitivity. CCDs are known for high resolution but slower speeds, and can be more sensitive than CMOS cameras.
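The tradeoff between resolution, frame rate, and processing speed can be checked with back-of-the-envelope arithmetic. The sketch below is an assumption-laden illustration: the 100-million-pixels-per-second processing budget and both camera configurations are hypothetical, not figures from any particular product.

```python
def required_pixel_rate(width_px, height_px, fps):
    """Pixels per second the processor must handle to inspect every frame."""
    return width_px * height_px * fps


def can_keep_up(width_px, height_px, fps, processor_pixels_per_s):
    """True if the processing budget covers the camera's pixel rate."""
    return required_pixel_rate(width_px, height_px, fps) <= processor_pixels_per_s


BUDGET = 100_000_000  # hypothetical processor throughput, pixels per second

# A 0.3-megapixel camera (640 x 480) at 60 frames per second.
print(required_pixel_rate(640, 480, 60))      # 18432000
print(can_keep_up(640, 480, 60, BUDGET))      # True

# A 5-megapixel camera (2592 x 1944) at the same frame rate.
print(required_pixel_rate(2592, 1944, 60))    # 302330880
print(can_keep_up(2592, 1944, 60, BUDGET))    # False
```

Under these assumed numbers the higher-resolution camera would need to drop frames or run at a lower frame rate, which is the kind of limit that pushes high-speed lines toward fewer megapixels.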

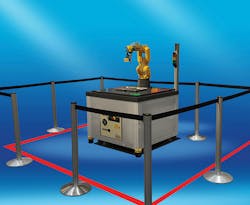

Cameras, lasers, and proximity sensors not only let machines interact with an assembly line, but they can add important safety features as well. With all the information from the cameras, robots can operate with a higher level of autonomy. Because the same technology can sense the surrounding area, the system also serves as a safety device. This can minimize or eliminate caging, allowing technicians to work side by side with automated equipment.

UR and Fanuc have designed robots that can operate with minimal to no safety caging. Some of them are small robotic arms, with 20 to 40 in. of reach and maximum payloads of up to 10 lb. The small size and lack of cages let these robots be used in small spaces, making it easier to retrofit them into assembly lines.

The technology takes advantage of machine vision so a technician can work closely with automation equipment. These devices are called collaborative robots. For example, six-axis robotic arms from Universal Robots (UR) can weigh as little as 25 lb., with a reach of up to 51 in. and repeatability of ±0.004 in. They can quickly move even microscopic parts and watch out for workers while performing tasks.

Some Fanuc robots use some of the same part-sensing technologies in their dual-check fenceless zones to determine if a worker is too close. These types of robots have a series of area scanners deployed around them to create a barrier or virtual wall. The robot can be programmed to slow down when something enters the area to ensure the safety of factory workers. Users can establish different safety zones: As a worker nears the robot, it can slow down; if the worker gets closer, the robot will come to a complete stop.
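The tiered zones described above amount to a mapping from worker distance to allowed robot speed. The following sketch illustrates that logic only; it is not Fanuc's implementation, and the zone radii and the 25% slow-speed factor are hypothetical values that a real installation would take from its risk assessment.

```python
def speed_scale(distance_m, slow_zone_m=2.0, stop_zone_m=0.5, slow_factor=0.25):
    """Fraction of full speed allowed for a worker at the given distance.

    All zone radii and the slow-down factor are illustrative assumptions.
    """
    if distance_m <= stop_zone_m:
        return 0.0          # worker inside the inner zone: complete stop
    if distance_m <= slow_zone_m:
        return slow_factor  # worker inside the outer zone: reduced speed
    return 1.0              # no one nearby: full speed


for distance in (3.0, 1.0, 0.3):
    print(distance, speed_scale(distance))
# 3.0 1.0
# 1.0 0.25
# 0.3 0.0
```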

The software not only controls speed, but can limit the robot’s travel. If a person is sensed in a specific zone, the robot could be programmed to continue working in different spaces until that zone is cleared. By using some of the same sensors and cameras, robots can be used in applications limited by space and worker-safety concerns.

UR keeps workers safe around its robots by letting the machines detect any resistance to the movement of their arms. “A change in torque can indicate a robot has run into something,” says Tom Moolayil, UR’s technical manager. “However, to have a dedicated force and torque sensor on every joint could cost $5,000 to $7,000 for each axis. That is close to the price of one of our smaller systems at about $23,000.”

“We use dual encoders on every joint to measure the electric current. If there is a change in the current across any of the joints, the robot stops. This is a cost effective way to keep people safe.”
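The general idea of stopping on a change in joint current can be sketched as a comparison between expected and measured values. This is an illustration of the concept only, not UR's actual algorithm: the function names, the six example currents, and the 0.5 A threshold are all assumptions.

```python
def joints_over_limit(expected_a, measured_a, threshold_a=0.5):
    """Return the indexes of joints whose current deviates beyond the threshold."""
    return [
        joint
        for joint, (expected, measured) in enumerate(zip(expected_a, measured_a))
        if abs(measured - expected) > threshold_a
    ]


def should_stop(expected_a, measured_a, threshold_a=0.5):
    """Stop the robot if any joint shows an unexpected change in current."""
    return bool(joints_over_limit(expected_a, measured_a, threshold_a))


# Hypothetical currents (in amps) for a six-axis arm.
expected = [1.0, 2.0, 1.5, 0.5, 0.3, 0.2]
normal   = [1.05, 1.9, 1.6, 0.5, 0.35, 0.2]
contact  = [1.0, 2.9, 1.5, 0.5, 0.3, 0.2]   # joint 1 meets resistance

print(should_stop(expected, normal))         # False
print(joints_over_limit(expected, contact))  # [1]
print(should_stop(expected, contact))        # True
```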

Moolayil finished by saying, “Giving a machine sight not only provides a valuable closed-loop system that offers environmental awareness, it may also lead to new lighting design in manufacturing plants. Investing the money in a vision system that costs thousands may not yield the return on investment if poor lighting inhibits its performance.”

That being said, a new technology may not need proper lighting to operate. Olea Systems’ OleaVision uses Doppler radar transmitting at 5.8 GHz to detect objects and doesn’t need any light. Detecting animate and inanimate objects about 16.5 feet away, the Doppler-based system can detect movement of about three-fourths of an inch. This means that even if a worker stops moving in a dangerous zone, the machine vision will still see them if they are breathing.

For automotive companies, Doppler sensing can detect people and other moving objects around a vehicle, which may be useful for buses and other vehicles with large blind spots. It can sense inside the car as well, to make sure children and animals are not forgotten. Finally, Olea presented a vision of an efficient smart home that better controls HVAC and lighting. This technology was built with the Internet of Things and machine-to-machine communication in mind.

By using some of the same technologies that sense parts to sense the surrounding environment, automation companies can minimize or eliminate caging to save money. Safety features in robots also can reduce or eliminate the need for back-up safety features, saving more money while simplifying the system. With new technology, companies are taking the sensing of people to new heights, keeping workers safe on and off the job.

About the Author

Jeff Kerns

Technology Editor
