Reliability tests are a crucial part of the manufacturing process, one that helps ensure your product meets the quality standards your customers expect. All the same, designing good reliability tests is tricky because there are so many elements to consider. Here are some key factors to keep in mind.

Repeatability

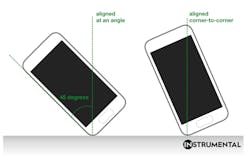

Any good test has to be repeatable. You use reliability tests to choose between multiple possible designs or processes, so you need confidence that the methodology will produce consistent results. Repeatability comes from specifying as much of the test procedure and setup as possible. Take the drop test performed on a smartphone: define the “corner drop” orientation exactly for your product, so that you get the same result even when a different technician runs the test.
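One way to make a procedure this explicit is to write it down as structured data rather than prose. The following is a minimal Python sketch, not a standard: the `DropTestSpec` class, its field names, and the example values are all hypothetical and would need to be replaced with your product's actual procedure.

```python
from dataclasses import dataclass, fields
from typing import List, Optional


@dataclass(frozen=True)
class DropTestSpec:
    """Hypothetical drop test definition; every field name is illustrative."""
    orientation_name: str                  # e.g. "corner drop"
    impact_corner: Optional[str] = None    # which corner strikes first
    tilt_deg: Optional[float] = None       # device angle relative to the surface
    drop_height_m: Optional[float] = None
    surface: Optional[str] = None
    drops_per_unit: Optional[int] = None


def unspecified_fields(spec: DropTestSpec) -> List[str]:
    """Return fields left as None, i.e. left to each technician's judgment."""
    return [f.name for f in fields(spec) if getattr(spec, f.name) is None]


# Example values are made up for illustration only.
vague = DropTestSpec(orientation_name="corner drop")
exact = DropTestSpec(
    orientation_name="corner drop",
    impact_corner="top-left",
    tilt_deg=45.0,
    drop_height_m=1.0,
    surface="steel plate",
    drops_per_unit=1,
)

print(unspecified_fields(vague))  # every setup detail is still open
print(unspecified_fields(exact))  # nothing left to interpretation
```

A check like `unspecified_fields` is one possible way to flag a test definition that still leaves room for two technicians to run it differently.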

Quantity

There are a few ways to determine how many units to test, and the right answer depends on the level of risk your organization is willing to accept. One engineering adage is that 32 is the magic number: a reasonable sample size for drawing statistically meaningful conclusions. This number is typically on the large side for a single configuration in a single build, so you can usually pool multiple configurations to get near it.

Another rule of thumb is that any important test should be run on at least 10 units. At a very high level, put your build through Shedletsky Test #6: 25% of the units created during the build should be destructively tested. If you are not doing that, ask why. If the answer is money, then make it a deliberate decision to prioritize cost over quality. You can also be clever with the testing waterfall design to maximize the data you collect for the investment.
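To get a feel for what these sample sizes buy you, one common approach is the zero-failure "success run" bound from the binomial distribution: if n units all pass, the reliability demonstrated at confidence C is R = (1 - C)^(1/n). This is only one of several ways to reason about sample size, and it is not the source of the rules of thumb above. The sketch below assumes pass/fail tests, independent units, and zero observed failures; the function names are illustrative.

```python
import math


def demonstrated_reliability(n_units: int, confidence: float = 0.95) -> float:
    """Lower bound on reliability if n_units are tested and none fail.

    Assumes independent units and a pass/fail test (binomial model).
    """
    if n_units < 1 or not 0.0 < confidence < 1.0:
        raise ValueError("need n_units >= 1 and 0 < confidence < 1")
    return (1.0 - confidence) ** (1.0 / n_units)


def units_for_reliability(reliability: float, confidence: float = 0.95) -> int:
    """Smallest zero-failure sample size that demonstrates the given reliability."""
    if not 0.0 < reliability < 1.0 or not 0.0 < confidence < 1.0:
        raise ValueError("reliability and confidence must be between 0 and 1")
    return math.ceil(math.log(1.0 - confidence) / math.log(reliability))


for n in (10, 32):
    print(n, round(demonstrated_reliability(n), 3))
```

Under these assumptions, 32 passing units demonstrate roughly 91% reliability at 95% confidence, while 10 passing units demonstrate roughly 74%. Any observed failure calls for a different calculation.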

Waterfall

Say you have to make do with fewer units than you would like. Set them up so all of the tests are running in parallel. You can also waterfall: run an initial test on all of the units, then take the surviving units and put them through the next test. You can run 2-3 more tests before you get to the end. This reduces the number of units you need to build to get the same amount of test data. In addition to saving on unit cost, a waterfall can give you a more realistic idea of how the product performs. By starting with a thermal test to age the assembly before doing a drop test, you end up testing a product that is likely closer to its real-world condition.
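The bookkeeping behind a waterfall can be sketched in a few lines of Python. This is an illustration only: `run_waterfall`, the serial numbers, and the pass/fail outcomes are all made up, and the lambdas stand in for real test measurements.

```python
from typing import Callable, Dict, List, Sequence, Tuple

# A step is a test name plus a function that returns True if a unit passes.
Step = Tuple[str, Callable[[str], bool]]


def run_waterfall(
    serials: Sequence[str], steps: Sequence[Step]
) -> Tuple[Dict[str, Dict[str, bool]], List[str]]:
    """Run each test only on the units that survived every earlier test."""
    survivors = list(serials)
    results: Dict[str, Dict[str, bool]] = {}
    for name, passes in steps:
        outcome = {sn: passes(sn) for sn in survivors}
        results[name] = outcome
        survivors = [sn for sn in survivors if outcome[sn]]
    return results, survivors


# Made-up outcomes standing in for real measurements.
thermal_failures = {"SN003"}
drop_failures = {"SN007", "SN008"}

steps: List[Step] = [
    ("thermal", lambda sn: sn not in thermal_failures),
    ("drop", lambda sn: sn not in drop_failures),
]
serials = [f"SN{i:03d}" for i in range(1, 11)]

results, survivors = run_waterfall(serials, steps)
data_points = sum(len(outcome) for outcome in results.values())
print(f"{len(serials)} units built, {data_points} test results, "
      f"{len(survivors)} survivors")
# prints "10 units built, 19 test results, 7 survivors"
```

In this made-up example, 10 units yield 19 test results. Running the same two tests on separate units would take 19 units to collect the same number of results.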

Risk Management

Figure out the riskiest reliability issue for your product. Make sure that a healthy number of units go through that test at each build, by giving it its own waterfall as well as placing it at the end of other waterfalls. For example, if your product is a smartphone, you might focus your efforts on drop testing, because your top concern may be making sure the glass does not crack when dropped.

Track Results

Usually a reliability team or the factory will do the testing and provide a report. Always agree ahead of time on how you want to receive test results from the factory. Go as far as making your own template to make sure that you are tracking what you care about. One best practice is to use a spreadsheet with a tab for each test. Track the product by serial number, track when the failures occur, and summarize result statistics at the top of the sheet. Don’t sign up for a daily e-mail with test results in lieu of a master spreadsheet. You need to be able to scan across all of the testing and see a holistic picture of passes and fails.
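If the results arrive as rows of data, the summary at the top of each tab is simple to compute. The Python sketch below is one possible layout, not a prescribed template: the `ResultRow` fields, the serial numbers, and the outcomes are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class ResultRow:
    serial: str
    test: str
    passed: bool
    failed_at: Optional[str] = None  # when the failure occurred, e.g. "drop 3"


@dataclass
class Summary:
    tested: int = 0
    passed: int = 0
    failed_serials: List[str] = field(default_factory=list)


def summarize(rows: List[ResultRow]) -> Dict[str, Summary]:
    """Per-test counts plus the serial numbers of the units that failed."""
    summary: Dict[str, Summary] = {}
    for row in rows:
        s = summary.setdefault(row.test, Summary())
        s.tested += 1
        if row.passed:
            s.passed += 1
        else:
            s.failed_serials.append(row.serial)
    return summary


# Made-up rows for illustration.
rows = [
    ResultRow("SN001", "drop", True),
    ResultRow("SN002", "drop", False, failed_at="drop 3"),
    ResultRow("SN001", "thermal", True),
    ResultRow("SN002", "thermal", True),
]

for test, s in summarize(rows).items():
    print(f"{test}: {s.passed}/{s.tested} passed, failures: {s.failed_serials}")
```

Keeping one row per unit per test preserves the serial number and the point of failure, so the same data can feed both the per-test tabs and an overall view of passes and fails.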

Build Pacing

Make sure that there are two weeks of buffer time between the end of your engineering validation test (EVT) or design validation test (DVT) build and the last date to kick off tooling modifications for the next build. This is easy to set up correctly, but difficult to hold onto once the builds get going. The buffer gives you time to roll changes into the product based on what you learn from reliability testing, and it helps keep your team from being too scattered.
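A schedule check like this is easy to automate so the buffer does not quietly erode. The Python sketch below uses only the standard library; the function name and the dates are hypothetical.

```python
from datetime import date, timedelta

MIN_BUFFER = timedelta(weeks=2)


def buffer_shortfall(
    build_end: date, tooling_cutoff: date, min_buffer: timedelta = MIN_BUFFER
) -> timedelta:
    """How much buffer is missing; zero if the schedule leaves enough time."""
    actual = tooling_cutoff - build_end
    return max(min_buffer - actual, timedelta(0))


# Hypothetical dates for illustration.
evt_end = date(2024, 3, 1)
tooling_cutoff = date(2024, 3, 11)

print(buffer_shortfall(evt_end, tooling_cutoff).days)  # prints 4
```

Here the build ends 10 days before the tooling cutoff, so the schedule is 4 days short of the two-week buffer.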

For a bundle of helpful material on reliability testing, check out the free Instrumental Reliability Test Kit download, an extensive list of reliability test definitions and setup instructions. We also discuss the best practices for some of the most commonly tested scenarios, and offer efficiency tips for minimizing units tested and maximizing data.

Anna Shedletsky is the CEO and founder of Instrumental, a manufacturing data company that uses machine learning to find anomalies on consumer electronics assembly lines.