“Audition,” a word with Latin roots, means the power of hearing or listening. Researchers in Japan are now working to bring that power to robots.

“Robot Audition” is a research area proposed by Professor Kazuhiro Nakadai of Tokyo Institute of Technology and Professor Hiroshi G. Okuno of Waseda University in 2000. Prior to their research, robots did not have the ability to recognize voice unless it was transmitted directly via a microphone. Building “ears” for a robot requires a sophisticated approach, combining several areas of technology into one cohesive system.

Making Robots Listen

The HEARBO, a hearing robot from Honda, uses HARK open-source software to recognize and identify different sounds that are occurring simultaneously. (Courtesy of Honda)

The entry barrier for robotic listening research was high. It combined signal processing, robotics, and artificial intelligence (AI) into one field, and back in 2000 some of those areas, especially AI, were in their infancy. One move that benefited Nakadai and Okuno was to make their research public and release it as open-source software. This helped generate interest and diversified the research, growing it to the point that the field was officially registered in 2014 by the IEEE Robotics and Automation Society, one of the largest communities in robotics research.

Three key technologies are required to make robotic ears. The first is sound-source localization, which enables the robot to estimate where a sound originates. The second is sound-source separation, which extracts the sound arriving from a given direction. The third is automatic speech recognition, which recognizes the separated sounds against background noise. The team pursued their research in real environments and in real time.
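To make the localization step concrete, the sketch below estimates a bearing from the difference in arrival time between two microphones. It is a minimal illustration, not HARK's algorithm: the function names, the 20 cm microphone spacing, the 16 kHz sample rate, and the simulated signal are all assumptions chosen for the example.

```python
import math
import random

SPEED_OF_SOUND = 343.0  # m/s; assumed value for air at about 20 °C


def estimate_delay(left, right, max_lag):
    """Return the lag in samples at which `right` best matches `left`.

    A positive result means the sound reached the left microphone first.
    """
    n = len(left)
    best_lag, best_score = 0, float("-inf")
    for lag in range(-max_lag, max_lag + 1):
        # Cross-correlation of the two channels at this candidate lag.
        score = sum(
            left[i] * right[i + lag]
            for i in range(n)
            if 0 <= i + lag < n
        )
        if score > best_score:
            best_lag, best_score = lag, score
    return best_lag


def delay_to_bearing(lag, sample_rate, mic_spacing):
    """Convert a sample lag to a bearing in degrees (0 = straight ahead)."""
    tau = lag / sample_rate
    # Clamp so measurement noise cannot push asin() out of its domain.
    s = max(-1.0, min(1.0, SPEED_OF_SOUND * tau / mic_spacing))
    return math.degrees(math.asin(s))


def simulate_pair(true_lag, n=400, seed=0):
    """Hypothetical test signal: noise that reaches the right mic `true_lag` samples late."""
    rng = random.Random(seed)
    source = [rng.uniform(-1.0, 1.0) for _ in range(n)]
    right = [source[i - true_lag] if 0 <= i - true_lag < n else 0.0 for i in range(n)]
    return source, right


left, right = simulate_pair(true_lag=5)
lag = estimate_delay(left, right, max_lag=9)
print(lag, round(delay_to_bearing(lag, sample_rate=16000, mic_spacing=0.2), 1))
```

With only two microphones the bearing is ambiguous between front and back, which is one reason practical systems use larger arrays and more robust estimators.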

The “robot audition” software has been named HARK (HRI-JP Audition for Robots with Kyoto University). HARK has been updated every year since its release in 2008, and had exceeded 120,000 total downloads as of December 2017. The software was also extended to support embedded use while maintaining its noise robustness.

Listening Robots and Drones

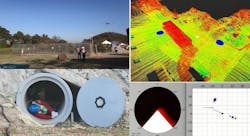

The rescue drone uses a 16-microphone array in lieu of other sensor technology to locate—via sound—victims in hard-to-reach areas with limited visibility. (Courtesy of Tohoku University)

Several engineering teams have already used HARK in projects. For example, the Honda Research Institute used HARK to create HEARBO (short for Hearing Robot). Its line of research is called “computational auditory scene analysis.” HEARBO can listen to, distinguish, and analyze multiple sound sources occurring simultaneously.

The strength of HEARBO’s technology is its ability to analyze, sift, and sort different overlapping sounds. For example, it can tell children playing apart from a doorbell ringing in the same room. The robot follows the same three steps described in Nakadai and Okuno’s research. The sound-source localization (SSL) stage of “robot audition” reports the location and number of sound sources. SSL for robots requires noise robustness, high resolution, and real-time processing so that it can respond immediately, even in noisy environments.
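One common way for a localization stage to report both the number and the direction of sources is to scan candidate directions, score each one, and keep the peaks. The sketch below assumes such a score per direction is already available; the spectrum values, the 30-degree step, and the threshold are hypothetical, and the function is an illustration rather than HEARBO's or HARK's actual code.

```python
def find_sources(spectrum_db, threshold_db, step_deg):
    """Return (angle_deg, power_db) for each local peak at or above the threshold.

    `spectrum_db` holds one power value per direction around a full circle,
    so the first and last entries are treated as neighbours.
    """
    n = len(spectrum_db)
    peaks = []
    for i, power in enumerate(spectrum_db):
        prev_power = spectrum_db[(i - 1) % n]
        next_power = spectrum_db[(i + 1) % n]
        is_peak = power > prev_power and power >= next_power
        if is_peak and power >= threshold_db:
            peaks.append((i * step_deg, power))
    return peaks


# Hypothetical spectrum sampled every 30 degrees, with two talkers.
spectrum = [20, 21, 30, 22, 20, 19, 20, 27, 33, 26, 20, 19]
sources = find_sources(spectrum, threshold_db=25, step_deg=30)
print(len(sources), sources)  # 2 [(60, 30), (240, 33)]
```

The threshold trades missed quiet sources against false detections from noise, which is where the noise-robustness requirement shows up in practice.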

A simulated disaster victim in need of rescue is found among rubble (clay pipe) via audio (the voices and the whistles). Blue circles on the map (top right) indicate the detected sound-source locations. (Courtesy of Tohoku University)

Implementing HARK in drones is an outgrowth of “extreme audition” research. The project is part of the ImPACT Tough Robotics Challenge, an initiative of the Japanese Cabinet Office, and is led by Program Manager Satoshi Tadokoro of Tohoku University. Running the HARK software on a drone creates a system that can detect voices, mobile device sounds, and other sounds from disaster victims while filtering out the drone’s own noise, which helps rescuers reach victims faster.

The drone system uses a microphone array in place of previously installed sensors. Replacing the original sensors with the array decreased the drone’s weight and sped up data processing by reducing the computational workload. With the other sensors eliminated, less data is transmitted to the base station: the total data-transmission volume was reduced to less than 1/100 of its previous level.

To aid in detection, the software uses three-dimensional sound-source location estimation technology with a map display. Assistant Professor Taro Suzuki of Waseda University provided the high-accuracy point cloud map data, a result of his research on high-performance GPS. This allows the software to present an easily understood visual user interface based on the sound sources. The all-weather microphone array consists of 16 microphones, all connected via one cable, which makes installation easy and ultimately gives the drone the ability to perform search and rescue in adverse weather.
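As a rough illustration of how a direction heard from the air can become a point on a map, the sketch below extends a ray from the drone along the estimated direction until it meets the ground. It assumes flat ground at a known height, whereas the system described here uses point cloud map data; the function name and the coordinate conventions (azimuth measured from the x-axis, negative elevation pointing downward) are also assumptions.

```python
import math


def locate_on_ground(drone_pos, azimuth_deg, elevation_deg, ground_z=0.0):
    """Intersect a direction-of-arrival ray with a flat ground plane.

    Returns an (x, y, z) point, or None when the ray never reaches the ground.
    """
    x0, y0, z0 = drone_pos
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    # Unit vector pointing from the drone toward the sound source.
    dx = math.cos(el) * math.cos(az)
    dy = math.cos(el) * math.sin(az)
    dz = math.sin(el)
    if dz >= 0 or z0 <= ground_z:
        return None  # ray is level or points upward, or drone is not airborne
    t = (ground_z - z0) / dz  # distance along the ray to the ground
    return (x0 + t * dx, y0 + t * dy, ground_z)


# Drone hovering 10 m up hears a sound 45 degrees below the horizon.
print(locate_on_ground((0.0, 0.0, 10.0), azimuth_deg=0.0, elevation_deg=-45.0))
```

On uneven rubble the flat-ground assumption breaks down, which is why a detailed terrain map matters for placing sources accurately.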

A victim’s probability of survival drops drastically once the first 72 hours after a disaster have passed. Currently, drones used for search and rescue rely on cameras and similar sensors to locate victims. Adding auditory detection gives victims trapped in dark locations or areas with limited visibility a better chance of being rescued.

The research group aims to improve the system by adding the ability to classify sound-source types. This would help the drone focus on sounds made by victims and ignore irrelevant sources. Another goal is to develop the system as a package of intelligent sensors that can be connected to other drones with different detection technology, creating an all-encompassing location unit.