The Growth of Artificial Intelligence in IoT and Smart Manufacturing

The Industrial Design & Engineering Show hosted a special panel discussion on artificial intelligence, machine learning, and the engineering industry. With the Internet of Things introducing so many data-collecting edge devices and the ever-growing world of big data, artificial intelligence looks to be the key to analyzing that data and providing insight to engineers and operators. Nancy Friedrich, executive director of content for Machine Design and Electronic Design, hosted the panel. The panelists were:

· Vibhu Bhutani, Chief Strategy Officer, Softweb Solutions

· Mike Hitmar, Sr. Industry Marketing Manager, Global Manufacturing Practice, SAS

· Samarjit Das, Senior Research Scientist, Robert Bosch LLC

· Ngai Zhang, Technology and Patent Law Attorney, Pillsbury Winthrop Shaw Pittman

Nancy Friedrich: Since the ID&E Show took place in Cleveland this year, can machine learning predict if the Cavaliers and the Indians can win it all this year?

Mike Hitmar: Well, even though I am now a resident of Raleigh, N.C., I am a native northeast Ohio resident and a Buckeye and lifelong Cleveland fan so I would have to separate the emotion out of it, which is where machine learning could come in. And I think the interesting thing is to use the sports example as a setting for traditional predictive analytics. In the absence of machine learning, predictive analytics would look for prior events to detect an anomaly or possible failures and then predict normal future operating data. This is very much past data-based. Artificial intelligence (AI) has the possibility to analyze the emotional level and spirit of Cleveland fans and consider those for future predictions.

Vibhu Bhutani: So I come from a country where we play cricket a lot and it actually happened there. Last year there was a machine-learning algorithm used for a series. The prediction was that if the player scores more than 50 runs in the first 10 overs, then “team A” will win. When they looked at the predictions for the whole series, it was correct about 89% of the time. So yes, it is possible.

Nancy Friedrich: Sam, looking at AI, we need to manage our expectations versus our true capabilities. What actually works today and how will AI benefit engineers?

Sam Das: AI has gone through several lifecycles, as you pointed out. It started back in 1956 at a workshop at Dartmouth College that brought together leaders of AI like Herbert Simon and Allen Newell from Carnegie Mellon and, from MIT, Marvin Minsky and John McCarthy. Those pioneers together built up significant AI capabilities like playing checkers and solving simple logic problems. However, things stalled, and AI never reached the consumer market or became an aid to society.

More recently, there’s a lot of hype about AI, and the flagship poster boy is computer vision, where you can analyze an image and run millions of images through the AI algorithms. So this perception, visual perception and pattern recognition, is one of the aspects of our current artificial intelligence. Think about humans: we are also intelligent beings, but perception of visual data or auditory data is just one aspect of our intelligence, right? For AI, the things that work today are visual recognition and understanding speech. Examples are software systems like Apple’s Siri, Amazon’s Alexa, or Google’s language translation software. These all belong to a class of machine learning called supervised learning, where people laboriously annotate the data with their own intelligence. What works is what are called “supervised learning” problems, where you teach the computer with examples and, assisted by humans, it learns to recognize them. That is what works today. This is a very constrained set of problems, but they have a lot of applications, as you see today with cameras being able to recognize objects and smart homes being used by consumers.
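To make the idea of supervised learning concrete, here is a minimal sketch in Python. It is not something the panel presented: the nearest-centroid method, the function names `train` and `predict`, the two features (redness and roundness), and the sample values are all assumptions chosen for illustration. A person labels a few examples, the program averages them per label, and a new item gets the label of the closest average.

```python
from statistics import mean


def train(examples):
    """Average the feature vectors of each label (nearest-centroid training)."""
    by_label = {}
    for features, label in examples:
        by_label.setdefault(label, []).append(features)
    return {
        label: tuple(mean(column) for column in zip(*rows))
        for label, rows in by_label.items()
    }


def predict(centroids, features):
    """Return the label whose centroid is closest to the given features."""
    def squared_distance(centroid):
        return sum((a - b) ** 2 for a, b in zip(centroid, features))

    return min(centroids, key=lambda label: squared_distance(centroids[label]))


# Hypothetical human-annotated examples: (redness, roundness) -> label.
labeled = [
    ((0.9, 0.8), "apple"),
    ((0.8, 0.7), "apple"),
    ((0.3, 0.95), "orange"),
    ((0.2, 0.9), "orange"),
]

centroids = train(labeled)
print(predict(centroids, (0.85, 0.75)))  # closest to the "apple" examples
```

The human effort sits in the `labeled` list: every example carries an annotation supplied by a person, which is the laborious step Das describes.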

At the ID&E Show in Cleveland, Machine Design and Electronic Design hosted a special panel about the growing role of artificial intelligence and machine learning in the engineering industry, specifically relating to the Internet of Things and the world of big data.

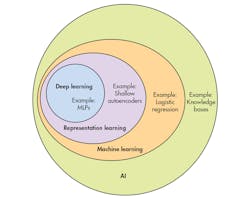

Vibhu Bhutani: I agree. We are currently in an era of “weak AI.” I saw a Venn diagram a few months back that depicts machine learning as an integral part of AI and AI as a whole consists of several components like natural language processing, machine learning, and cognitive intelligence. When we talk about machine learning, it is in reference to this weak AI today, which is involved in day-to-day activities. Electronic systems are designed to do specific work like voice recognition and pattern recognition.

Mike Hitmar: I think it is starting to take off in the health care industry as well. AI is able to look at an image of human cells and detect cancer cells with a much higher success rate and more accuracy than a human can, and it can also detect the healthy cells, which are sometimes overlooked by human operators. This is improving upon the human error commonly found in test results of X-rays and MRIs.

Nancy Friedrich: Ngai, how do you think the rise of artificial intelligence as a collaborator, not just a tool, will affect human innovation?

Ngai Zhang: I think it will have a positive effect, which will increase the number of human innovations. There were chess competitions of different levels of AI systems playing against each other. Obviously, the more advanced AI systems won. However, when humans were paired with the less advanced AI systems, they were able to beat the solo advanced AI system as a team. There is an opportunity for collaboration between AI systems and humans, not necessarily replacing humans.

The Venn diagram above comes from the MIT Press book Deep Learning, and it helps define how deep learning, machine learning, and representation learning are all a part of artificial intelligence.

Nancy Friedrich: Why is deep learning, which many consider the most promising branch of AI today, so exciting?

Sam Das: Deep learning is a special type of machine-learning algorithm. It is multiple layers of neural networks that mimic the connectivity of the brain, and these types of connectivity seem to work much better than pre-existing systems. We currently have to define parameters for machine learning based on our human experience. When we look at images of apples and oranges, we need to define features so that machine-learning systems can identify the difference. Deep learning is the next level because it can create those distinctions on its own. By just showing sample images of apples and oranges to a deep-learning system, it will create its own rules by realizing that color and geometry are the key features that distinguish which is which, and we do not have to teach it based on human knowledge.

Vibhu Bhutani: The reason deep learning is the most exciting is because of this world of big data. In the past few years, due to IoT, we have connected several devices and have collected large amounts of data. The next step is to process that data and provide outputs from that data. From an industrial aspect, the software engineer will have to write software to process that data and they will be using deep-learning algorithms to do the processing. Deep learning will be able to not only identify data, but distinguish useful information and rely less on human programming to teach systems what is good and bad data.

Nancy Friedrich: Diving into the moral and legal area of AI, Ngai, if an AI program creates something, who owns the intellectual property?

Ngai Zhang: There was a court case a few years back, where a photographer lost his camera to a group of monkeys. The monkeys took selfies with the camera and the images were extremely popular and viral. People started using the copies and the photographer started to sue over copyright infringement. The court found that copyright rules do not apply to monkeys and since the monkeys took the photos, the photographer had no legal copyright over the photos taken. Only a human being can apply for copyrights and this ruling also applies to patents: only a human being can apply for patents. If I use AI to create an invention, it would depend on the significance of my contribution. For example, if I was the monkey and I was trying to take a selfie but the photographer at that moment took the camera away from me and took a better picture, in that instance the photographer would have sole ownership of the copyright.

As another example, the creator of an AI program would have copyrights to that software code, and any other code derived from his program would be subject to the creator’s copyright. Any product created by that AI program would not fall under copyright law but rather contract law. The product would not belong to the creator and would not belong to anyone until the user files for copyright of his AI-assisted invention.

Nancy Friedrich: What are some of the legal problems that AI can pose?

Ngai Zhang: The programming of AI is dependent on the programmer, so it could inherit certain human biases. For example, a while back Google had some problems with their search engine providing biased searches. When you typed in the keyword “CEO,” it would provide only images of male CEOs and no images of female CEOs even though at the time female CEOs made up 4% of the population. Then if you typed in “MALE CEO,” it would provide no results. The programming of AI must be done carefully so as not to inadvertently inherit the biases of people.

Nancy Friedrich: Sam, of course at ID&E, there is a lot of talk of IoT and the engineering industry. How could the IoT itself be an enabler for custom AI solutions from industrial environments to people's homes?

Sam Das: For AI, data is important: big annotated data, which you can use to provide information to machine learning. IoT really is one big source of data. Whether you are deploying sensors in your home or in a structured environment like a manufacturing plant, you are collecting a lot of useful data. That data is the fuel for AI. Sixty to 80% of the task is done by the data and then the AI algorithm performs the work based on that data. AI can be used to help collect data more efficiently from IoT systems by filtering between useful and excess data.
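As a rough illustration of separating useful from excess sensor data, the sketch below uses a simple deadband filter. This is a fixed rule standing in for the AI-based filtering Das mentions, not a learned model, and the function name `deadband_filter`, the threshold, and the temperature values are hypothetical. A reading is forwarded only when it differs from the last forwarded reading by more than the threshold.

```python
def deadband_filter(readings, threshold):
    """Keep a reading only if it differs from the last kept one by more than threshold."""
    kept = []
    for value in readings:
        if not kept or abs(value - kept[-1]) > threshold:
            kept.append(value)
    return kept


# Hypothetical temperature samples from one sensor, in degrees Celsius.
temperatures = [20.0, 20.1, 20.05, 21.5, 21.6, 25.0, 25.1]

# Small fluctuations are dropped; the two jumps are forwarded.
print(deadband_filter(temperatures, threshold=0.5))  # [20.0, 21.5, 25.0]
```

A learned filter would replace the fixed threshold with a model of what counts as informative, but the data flow would look the same: decide at the source what is worth sending on.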

Nancy Friedrich: Vibhu, can you define smart manufacturing and how AI is a big part of smart manufacturing?

Vibhu Bhutani: Coming from an IoT company, 40 to 50% of our customers work in manufacturing. Our customers will ask us to develop an application for their smart manufacturing solution. However, you cannot just develop an application to solve their problems. In the manufacturing industry, you first need to establish the building blocks on which to create an application. Smart machines are one of the first building blocks needed; they are the endpoint devices for the IoT or smart manufacturing industry. You need to have smart machines before even thinking about having a smart manufacturing system. These machines collect and produce the data that is needed for AI. The next building block is a platform that these smart machines can connect with and that enables data collection into cloud services. Then you need the data analytics platform that understands your data. This platform enables your algorithms to create useful representations of the data collected. These building blocks create a connected manufacturing floor, and once they are in place you can use smart manufacturing. Once you have established a smart manufacturing system, you can apply machine learning, deep learning, and AI analytics to provide you with smart computing decision power. This way the decisions are being made via AI systems rather than data being pushed from the cloud; you are now pushing decisions on the system, proactive instead of reactive. This is called edge analytics.

Mike Hitmar: I agree on that definition coming from a data analytics perspective; however, smart manufacturing is also a larger umbrella term that covers several technologies and processes in the assembly plant. The data that is pulled into the cloud feeds the learning and teaching algorithms, and you bring the results back to the factory floor in the form of predictive models. Now the factory floor can implement predictive maintenance to help the engineers and operators be more efficient. You bring the computer models closer to where the data is being generated and you reduce the lag time.
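The pattern the panelists describe, learning from pooled data in the cloud and then running the resulting model next to the machine, can be sketched as follows. This is an assumption-laden illustration rather than any vendor's implementation: the `VibrationModel` class, the `fit_in_cloud` function, the three-standard-deviation limit, and the vibration values are all hypothetical, and a simple statistical threshold stands in for a real predictive model.

```python
from dataclasses import dataclass
from statistics import mean, stdev


@dataclass(frozen=True)
class VibrationModel:
    """Small set of parameters that can be shipped to an edge device."""
    baseline: float
    spread: float
    limit: float = 3.0  # assumed alert threshold, in standard deviations

    def needs_maintenance(self, reading: float) -> bool:
        """Decide locally, without a round trip to the cloud."""
        return abs(reading - self.baseline) > self.limit * self.spread


def fit_in_cloud(history):
    """Learn normal behavior from historical readings collected in the cloud."""
    return VibrationModel(baseline=mean(history), spread=stdev(history))


# Hypothetical historical vibration levels gathered from the factory floor.
history = [1.0, 1.1, 0.9, 1.0, 1.2, 0.8, 1.0]
model = fit_in_cloud(history)

# On the edge device, each new reading is scored as it arrives.
for reading in [1.05, 0.95, 2.4]:
    status = "schedule maintenance" if model.needs_maintenance(reading) else "ok"
    print(reading, status)
```

Only the fitted parameters travel to the factory floor, which is why the decision can be made close to where the data is generated and the lag time drops.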