IoT Started with a Vending Machine

The internet’s takeover of the global telecommunications landscape occurred within a short window of modern history; it carried 1% of two-way communications in 1993, then 51% in 2000, and more than 97% in 2007.

Key moments in the history of the internet include the establishment of ARPANET (1969), the publishing of TCP/IP standards (1980), the founding of the IANA (1988), and the commercialization of NSFNET (1995). Each of these dates marked real changes for the internet: decisions were made and standards were set.

Unsurprisingly, the Internet of Things (IoT) is at a much different stage of development than its foundational technology. The popular energy surrounding IoT feels anticipatory, as though the biggest changes are yet to come.

While IoT devices already exist around factories, in households, and on wrists worldwide, many researchers and developers foresee even wider adoption of the technology in years to come, and expect it to play a different kind of role than it has so far.

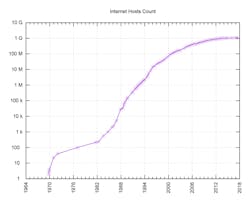

This graphic shows the exponential growth in the number of internet hosts by year. Spikes in growth can be seen after some of the events summarized here. (Graphic: Jim Scarborough)

IoT Will Be Wireless

The anticipation of an ever-more-connected world is visible in the new mobile technology standards currently under development. Researchers are proposing 5G wireless protocols to succeed 4G/LTE technologies, and many of these proposals include standards tailored specifically to low-power wireless technologies.

Imagine devices as small as a pencil connecting to cellular networks. 5G can make this a reality.

All of this means that telecommunications experts from around the world—experts tasked with preparing for technology booms years in advance—are clearing the way for an explosion in wireless IoT technology.

While the future of IoT seems set, its history from the past 20 years reveals just how rapidly the concept has changed and, perhaps, provides a lesson that the IoT we are expecting may be different from what’s actually coming.

The Beginnings of IoT

Cisco Systems estimates that the Internet of Things was not born until sometime between 2008 and 2009, when, by the company’s count, the number of “things or objects” connected to the internet grew to outnumber the world’s human population.

While the technology is quite new, the concept isn’t much older; the term “Internet of Things” was not coined until 1999, when it referred to a system in which radio-frequency identification (RFID) was the key technology. Precursors to IoT that resemble today’s notions of the technology date back as far as the 1970s under the guise of “ubiquitous computing.” However, most histories of IoT begin with a Coke machine at Carnegie Mellon University.

1970: CMU Computer Science’s Coke machine

Wean Hall, shown here on the campus of Carnegie Mellon University, is the location of one of the first known “connected” devices: a Coke machine. (Photo: Jared Luxenberg)

In 1970, students and faculty working and studying in Wean Hall on the CMU campus were consuming 120 bottles of Coca-Cola products each day. Because of this high consumption rate, customers would frequently find themselves standing in front of an empty Coke machine after a jaunt up or down a flight of stairs, which they found unacceptable.

To save some trouble, members of the CMU Computer Science Department (whose names, for lack of documentation, are unknown today) installed microswitches inside the Coke machine to sense how many bottles were available. The software they wrote for the departmental computer also tracked how recently the bottles were loaded, so students and faculty could query the computer for information about the availability of soft drinks.

Because CMU was part of ARPANET at the time, other universities across the country could also access information about the Coke machine on the third floor of Wean Hall using just one command: finger coke@cmua.

By virtue of the connected nature of this vending machine and the nature of the service it provided, it is considered an early example of an IoT device.
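The bookkeeping described above can be sketched in a few lines of Python. This is only an illustrative model, not a reconstruction of the original CMU software: the `Column` class, the `status_report` function, the use of minutes as the time unit, and the report wording are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Column:
    """One column of bottles (hypothetical model).

    Each entry is the time, in minutes, at which a bottle was loaded.
    """
    loaded_at: List[float] = field(default_factory=list)

    def load(self, now: float) -> None:
        # Record the load time of a newly added bottle.
        self.loaded_at.append(now)

    def dispense(self) -> None:
        # The oldest bottle leaves first; in the real machine a
        # microswitch would register this change.
        if self.loaded_at:
            self.loaded_at.pop(0)

    def count(self) -> int:
        return len(self.loaded_at)

    def minutes_since_last_load(self, now: float) -> Optional[float]:
        # None signals an empty column.
        if not self.loaded_at:
            return None
        return now - self.loaded_at[-1]


def status_report(columns: List[Column], now: float) -> str:
    """Build the text a remote user might see when querying the machine."""
    lines = []
    for i, col in enumerate(columns, start=1):
        age = col.minutes_since_last_load(now)
        if age is None:
            lines.append(f"column {i}: EMPTY")
        else:
            lines.append(
                f"column {i}: {col.count()} bottle(s), "
                f"last loaded {age:.0f} min ago"
            )
    return "\n".join(lines)
```

A remote query such as the `finger` command mentioned above would then simply return the output of something like `status_report(columns, now)`, sparing the user a trip to an empty machine.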

1991: Mark Weiser on computing becoming part of the fabric of everyday life

In his article titled “The Computer for the 21st Century,” Mark Weiser, a chief scientist at Xerox’s Palo Alto Research Center (PARC), wrote about “ubiquitous computing,” a term he coined possibly as early as 1988. In his article, Weiser characterizes ubiquitous computing as a world of devices enabled by computational power and internet connectivity—a concept closely akin to what is now referred to as the Internet of Things.

The Nest Learning Thermostat, shown here, uses temperature data, forecast data, location data provided from the owner’s phone, and other sensors to create energy-saving heating and cooling schedules.

The article reads today as an accurate prophecy of how IoT would look 30 years later, written at a time when the term “Internet of Things” had not yet been coined. For example, the three technologies Weiser deemed requisite to “ubiquitous computing” becoming a reality are “cheap, low-power computers that include equally convenient displays, a network that ties them all together, and software systems implementing ubiquitous applications.”

Computer technology, of course, was in a very different place at the time of his writing. A few sentences later in the article, he added, “Flat-panel displays containing 640 × 480 black-and-white pixels are now common.” The internet was also in its early stages of adoption at the time. Eight years later, the now-common term to describe this vision was first used.

1999: Kevin Ashton presents the “Internet of Things” to Procter & Gamble executives

The term “Internet of Things” dates back to 1999, when it was coined during a presentation to executives at Procter & Gamble, the multinational corporation focused on cleaning agents and personal care products. Kevin Ashton was the man giving the presentation. He was the founder of MIT’s Auto-ID Center, which used RFID technology to globally track goods.

Ashton put a lot of stock in RFID at the time, and even as late as 2009 was writing about the promises the technology offered. However, IoT seems to be turning away from RFID technology, forgoing RFID-tagged objects in favor of objects that are computers unto themselves.

Some historical context may explain why RFID remained the best idea for so long on how to implement an “Internet of Things.” In 1999, cellular device networks were not using Internet Protocol (IP) configurations as universally as they are today. Internet-enabled cellular devices were far less common than they are now.

In addition, the IPv4 standard that was in effect at the time would not have been able to accommodate the number of devices that an Internet of Things would bring. IPv6, which became the standard years later, was pivotal for IoT because it expanded the IP address space enormously, achieving a size that far exceeds even the number of grains of sand on earth.
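To put the address-space claim above in concrete terms, here is a small arithmetic sketch. IPv4 addresses are 32 bits wide and IPv6 addresses are 128 bits wide; the constant and variable names are only illustrative.

```python
# Address widths in bits for the two protocol versions.
IPV4_BITS = 32
IPV6_BITS = 128

# Every additional bit doubles the number of possible addresses.
ipv4_addresses = 2 ** IPV4_BITS
ipv6_addresses = 2 ** IPV6_BITS

print(f"IPv4: {ipv4_addresses:,} addresses")
print(f"IPv6: {ipv6_addresses:.3e} addresses")
print(f"IPv6 space is 2**{IPV6_BITS - IPV4_BITS} times larger")
```

IPv4 tops out at roughly 4.3 billion addresses, fewer than one per person alive today, while the IPv6 space is about 3.4 × 10^38.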

What’s Next?

Decisions that will enable the growth and adoption of IoT technologies are still being made. 5G protocols, which experts believe will be necessary to facilitate more widespread IoT adoption, will not be finalized until the end of 2018, and worldwide commercial launch is not expected until 2020. Additionally, IPv6 only became an official standard in 2017.

While there is a long way to go for IoT technology, 50 years of progress shows just how far it has come. The vision of a connected world has expanded from individually labeled devices to individually powered devices. IoT devices will have not only identifiers but computational abilities as well.

Perhaps Mark Weiser’s idea of “ubiquitous computing” wasn’t so far off.

Daniel Browning is the business development coordinator at DO Supply Inc. In his spare time, he writes articles about automation, AI, VR, and the IoT.
