Innovative Camera Can See Moving Objects Around Corners

Engineers at Stanford University have been upgrading a camera they developed with the ability to “see” around corners. “People talked about building a camera that can see as well as humans for applications such as autonomous cars and robots, but we wanted to build cameras that go well beyond that,” says Gordon Wetzstein, an assistant professor of electrical engineering at Stanford. “We wanted to see things in 3D, around corners, and beyond the visible light spectrum.”

The updated camera captures more light from a greater variety of surfaces, sees wider and farther, and is fast enough to monitor out-of-sight movement. One goal remains the same: helping autonomous cars and robots operate even more safely than they would with human guidance.

Keeping the camera practical is a high priority for the researchers. The hardware used, the scanning and image-processing speeds, and the style of imaging are already common in autonomous car vision devices. The previous version of the camera could view scenes outside its line of sight only when the objects in them reflected light either evenly or strongly. Many real-world objects, including shiny cars, fall outside these categories, so the new camera is designed to handle light bouncing off a range of surfaces, including disco balls, books, and intricately textured statues.

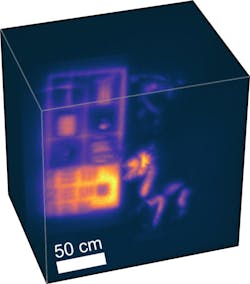

Objects—including books, a stuffed animal, and a disco ball—in and around a bookshelf tested the system’s versatility in capturing light from different surfaces in a large-scale scene. (Courtesy: David Lindell)

Central to the camera upgrade is a laser 10,000 times more powerful than what the engineers were using a year ago. The laser hits a wall opposite the scene of interest, the light bounces off the wall to hit objects in the scene, and then bounces back off the wall to the camera sensors. By the time the laser light gets back to the camera, only specks remain, but the sensor captures them all. They are processed through a highly efficient algorithm, also developed by the team, that untangles these echoes of light to decipher the hidden image.
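The bounce path described above lends itself to a simple time-of-flight calculation. The following is a minimal sketch, not the team's algorithm: it ignores the camera-to-wall legs (which are known and can be subtracted) and only models the trip from a spot on the wall to a hidden point and back to the wall. The function names and example coordinates are hypothetical, and the single-spot case at the end is an assumption made for illustration.

```python
import math

C = 299_792_458.0  # speed of light in vacuum, metres per second


def wall_to_wall_time(laser_spot, hidden_point, sensor_spot):
    """Travel time (seconds) for light going from the laser spot on the wall
    to a hidden point and back to the spot on the wall the sensor observes.

    All arguments are (x, y, z) coordinates in metres. The camera-to-wall
    legs are deliberately left out (assumption: they are known and removed).
    """
    path = math.dist(laser_spot, hidden_point) + math.dist(hidden_point, sensor_spot)
    return path / C


def range_from_time(t):
    """Distance (metres) from the wall spot to the hidden point, assuming the
    laser and the sensor look at the same wall spot, so the light travels
    the same distance out and back."""
    return C * t / 2.0


# Hypothetical example: a hidden object 1.5 m away from the wall spot.
t = wall_to_wall_time((0.0, 0.0, 0.0), (0.0, 0.0, 1.5), (0.0, 0.0, 0.0))
print(f"round trip: {t * 1e9:.2f} ns, recovered range: {range_from_time(t):.2f} m")
```

Under these assumptions the round trip takes only about ten nanoseconds, which gives a sense of the timing precision needed to sort the returning specks of light by arrival time.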

The camera scans at up to four frames per second and reconstructs scenes at speeds of up to 60 frames per second on a computer with a graphics processing unit (GPU), hardware that speeds up this kind of image processing.

To advance its algorithm, the team looked to other fields for inspiration. The researchers were particularly drawn to seismic imaging systems, which bounce sound waves off underground layers of the Earth to reveal what lies beneath the surface. The researchers then modified their algorithm to likewise interpret bouncing light as waves emanating from hidden objects. The result kept the same high speed and low memory requirements while improving the ability to see large scenes containing various materials and objects.

By analyzing single particles of light, this new camera reconstructs room-sized scenes and moving objects hidden around a corner. This could someday help autonomous cars and robots see better.

Being able to see real-time movement from otherwise invisible light bounced around a corner was a thrilling moment for this team, but a practical system for autonomous cars or robots will require further enhancements.

“The images still look low-resolution and it’s not super-fast, but compared to the state-of-the-art last year it is a significant improvement,” says Wetzstein. “We were blown away the first time we saw these results because we’ve captured data that nobody’s seen before.”

The team hopes to move toward testing the camera on autonomous research cars, while looking into other possible applications, such as medical imaging that can see through tissues. Along with improvements to speed and resolution, the engineers will also work on making the camera able to handle challenging visual conditions that drivers encounter, such as fog, rain, sandstorms, and snow.
