Mining for Answers: Tapping into Product Data with Software & Systems

Comparatively speaking, in the century following the Industrial Revolution the way products were developed and manufactured remained relatively unchanged, with long stretches between incremental advancements. Without the tools to obtain, interpret and apply meaningful feedback, product development was guided largely by observation, trial and error, and experience. Consequently, progress was severely limited.

This all began to change with the introduction of digital applications in the latter half of the 20th century. Over the decades that followed, increasingly mature software systems allowed organizations to capture and leverage previously untapped data embedded in products. Software became the catalyst to ignite innovation, drive quality, enhance equipment effectiveness, provide insight and inspire next-generation products.

Today, sophisticated applications continue to advance rapidly to keep pace with complex and evolving products and processes. The examples below show how software and systems translate raw metrics into insights and actions throughout the product lifecycle.

Upstream Product Design

Until about 60 years ago, introducing a new product or design change was based largely on paper drawings and an extended series of physical prototypes. This build-it, break-it process was time-consuming, costly and did not easily lend itself to innovation.

Today, products are represented as CAD models, and their performance is checked by recreating real-world conditions virtually. This process, known as engineering analysis or simulation, is supported by a long list of sophisticated software applications (such as Nastran, Ansys and LS-DYNA).

These applications allow analysts to input loads and calculate the resulting stresses and strains to validate the structural integrity of a design. Because these inputs must mirror the conditions the product will see in real-world operation, they must be rooted in physics. Baseline data points are often obtained by monitoring similar products in use. The inputs typically simulate the normal operation of the product along with extreme conditions such as those found in seismic, military, marine and off-road applications. Analysis also provides guidance for validating the final product and for locating strain gauges in areas of potential concern.

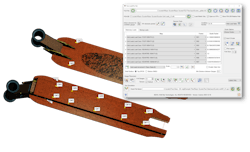

Inputting accurate loads is paramount, and dedicated software applications help ensure that they are. One such program is True-Load from Wolf Star Technologies. The software guides the placement of strain gauges at critical locations on the equipment, and the measured strains are used to back-calculate the operating loads on the structure. From these loads, the program automatically generates strain correlation reports showing cross plots of simulated strain against measured strain. Users typically see strain correlation within 2% to 5% on their FEA models using the loads calculated by the program.

Wolf Star’s president and founder, Dr. Tim Hunter, explains the process: “The technique begins with the engineer setting up unit loads on an FEA model. The software analyzes these results and suggests optimal strain gauge placement. A correlation matrix relating strain response to the unit loads is stored to disk. The analyst works with the test engineer to lay the gauges at the indicated locations.”
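
The unit-load technique Hunter describes rests on linear superposition, and its core arithmetic can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Wolf Star's implementation: it assumes a linear-elastic structure, a hypothetical matrix A in which A[i][j] is the strain at gauge i under a unit value of load j (taken from the FEA unit-load runs), and a least-squares pseudo-inverse (A^T A)^-1 A^T standing in for the stored correlation matrix. If the gauges cannot distinguish two loads, A^T A is singular and the sketch reports it.

```python
"""Minimal sketch of unit-load strain-to-load recovery (hypothetical data).

Assumes a linear-elastic structure, so measured strains = A x loads, where
A[i][j] is the strain at gauge i produced by a unit value of load j.
"""


def transpose(m):
    return [list(row) for row in zip(*m)]


def matmul(a, b):
    """Plain nested-list matrix product."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def invert(m):
    """Gauss-Jordan inversion with partial pivoting (small square matrices)."""
    n = len(m)
    aug = [list(row) + [1.0 if i == j else 0.0 for j in range(n)]
           for i, row in enumerate(m)]
    for c in range(n):
        p = max(range(c, n), key=lambda r: abs(aug[r][c]))
        if abs(aug[p][c]) < 1e-12:
            raise ValueError("singular: gauges cannot separate these loads")
        aug[c], aug[p] = aug[p], aug[c]
        piv = aug[c][c]
        aug[c] = [v / piv for v in aug[c]]
        for r in range(n):
            if r != c:
                f = aug[r][c]
                aug[r] = [v - f * w for v, w in zip(aug[r], aug[c])]
    return [row[n:] for row in aug]


def correlation_matrix(unit_strains):
    """Least-squares pseudo-inverse (A^T A)^-1 A^T, mapping strains to loads."""
    at = transpose(unit_strains)
    return matmul(invert(matmul(at, unit_strains)), at)


def recover_loads(corr, measured_strains):
    """Loads = correlation matrix x measured strain vector."""
    return [sum(c * e for c, e in zip(row, measured_strains)) for row in corr]


if __name__ == "__main__":
    # Hypothetical unit-load strains (microstrain per kN): 3 gauges x 2 loads.
    A = [[20.0, 5.0], [3.0, 15.0], [10.0, 10.0]]
    measured = [90.0, 42.0, 60.0]  # consistent with loads of 4 kN and 2 kN
    print(recover_loads(correlation_matrix(A), measured))
```

Using more gauges than unknown loads lets the least-squares form average out some measurement noise, and poorly placed gauges show up as a nearly singular A^T A, which is one way to see why gauge placement matters.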

Once the strains are measured in the lab, at the proving ground or in the field, the loads are calculated by multiplying the measured strains by the correlation matrix. According to Hunter, this provides strain-correlated loading that the analyst can use to query responses anywhere on the structure and even generate operating deflection shapes. “In addition, these loads are ideal for performing FEA-based durability analysis,” Hunter said.
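
For a linear model, querying a response elsewhere on the structure reduces to scaling each unit-load result at that location by its recovered load and summing. The sketch below assumes that linearity; the location names and unit-load stresses are hypothetical values standing in for FEA results, not output from any particular tool.

```python
"""Superposition sketch: query a response anywhere from recovered loads.

Assumes linear-elastic behavior, so the response at a location equals the
sum of each unit-load response there times the corresponding load. The
location names and unit-load stresses below are hypothetical.
"""


def response_at(unit_responses, loads):
    """Sum of (unit-load response x load) over all load cases."""
    if len(unit_responses) != len(loads):
        raise ValueError("need one unit-load response per load case")
    return sum(r * f for r, f in zip(unit_responses, loads))


def query_structure(unit_table, loads):
    """Evaluate every location in a {location: [unit responses]} table."""
    return {loc: response_at(resp, loads) for loc, resp in unit_table.items()}


if __name__ == "__main__":
    # Stress (MPa) per kN of each load case, standing in for FEA unit-load runs.
    unit_stress = {"weld_toe": [12.0, 3.0], "bracket_fillet": [-4.0, 9.5]}
    recovered_loads = [4.0, 2.0]  # kN, e.g. from the strain-to-load step
    for loc, stress in query_structure(unit_stress, recovered_loads).items():
        print(f"{loc}: {stress:.1f} MPa")
```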

Capturing accurate data removes the guesswork from analysis. Users typically eliminate one or more design-build-test cycles from the product development process. This saves hundreds of thousands of dollars and accelerates time to market.

Product Quality

During manufacturing, parts are tested to ensure quality and conformance to industry and customer standards. Testing looks for part defects or subsystem issues (such as unacceptable noise or vibration levels) that fail to meet predetermined criteria.

Catching defects before the product leaves the manufacturing floor reduces manufacturing costs and warranty claims while keeping customers satisfied. At one time, and still in some cases, quality was judged by human inspectors, who listened for objectionable noises such as motor noise, brake squeal, belt noise and rattles or looked for visible defects. Today, manufacturers are replacing these highly subjective and error-prone processes with objective, physics-based quality inspection. These systems use industry standards, test data and jury evaluations as a baseline for determining whether a part or assembly passes quality inspection.

But how do these systems translate a subjective notion such as quality into an objective PASS/FAIL determination? In the case of noise, the process often begins with a jury evaluation to determine how people hear sounds. Groups of people rate different sounds using a statistical method; that is, they identify which noises they deem objectionable. That data is then analyzed using psychoacoustics to understand how people perceive sounds and to determine an acceptable noise range.
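
One simple way to connect jury ratings to an objective metric is a least-squares fit of mean rating against a measured quantity, solved for the metric value at which the predicted rating reaches an acceptability threshold. The sketch below assumes a single metric, a linear relationship and a hypothetical 1-to-10 annoyance scale; real psychoacoustic programs typically combine several metrics (loudness, sharpness, tonality and so on) in more elaborate models.

```python
"""Sketch: turn jury ratings into an objective noise limit (hypothetical data).

Assumes a linear relation between one objective metric (for example, a
loudness value) and the mean jury annoyance rating on a 1-10 scale.
"""


def fit_line(x, y):
    """Ordinary least-squares fit y = a + b*x; returns (a, b)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    if sxx == 0:
        raise ValueError("need at least two distinct metric values")
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    b = sxy / sxx
    return my - b * mx, b


def metric_limit(x, y, max_acceptable_rating):
    """Metric value at which the predicted rating reaches the threshold."""
    a, b = fit_line(x, y)
    if b <= 0:
        raise ValueError("rating does not rise with the metric; no limit")
    return (max_acceptable_rating - a) / b


if __name__ == "__main__":
    metric = [2.0, 3.0, 4.0, 5.0, 6.0]       # e.g. loudness of each recording
    mean_rating = [1.5, 2.5, 3.5, 4.5, 5.5]  # mean jury annoyance per recording
    print(metric_limit(metric, mean_rating, max_acceptable_rating=4.0))
```

With the example data, the fitted line crosses a rating of 4.0 at a metric value of 4.5, which would then serve as the inspection limit for that metric.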

Using the jury results, metrics are modeled to score the performance of products as they are manufactured. At the end of production, products are checked by quality inspection systems and assigned a PASS/FAIL rating.

The process has roots in the 1960s, when it was instrumental in the development of rockets and, later, the Space Shuttle program.

Signalysis is a leading provider of such systems. According to the company’s president and founder, Neil Coleman, the key is to systematically measure and interpret signals originating from the product or system.

“Suppliers come to us to help ensure quality,” he said. “In the case of noise inspection, we help by selecting the right measurement sensor, whether it be an accelerometer, vibrometer or microphone. Once these measurements are obtained, the data is analyzed with complex algorithms such as frequency-domain, time-domain and order-domain analysis, and so on. This helps us to create a metric that we can scale and linearize so that limits can be adjusted to what is acceptable to the customer.”
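
The frequency-domain part of such a metric can be illustrated with a plain discrete Fourier transform: sum the energy in a frequency band of interest, express it in decibels and compare it with a limit. This is a minimal sketch, not Signalysis software; the band, sampling rate, reference value and dB limit are hypothetical, and reading "scale and linearize" as a decibel scale is an assumption. Time-domain and order-domain analyses (the latter resamples the signal against shaft rotation) are not shown.

```python
"""Sketch: a frequency-domain metric with a PASS/FAIL limit (hypothetical).

Computes a plain DFT of a uniformly sampled signal (for example, from an
accelerometer or microphone), sums the energy in one frequency band and
expresses it in dB relative to a reference so a limit can be applied.
"""
import cmath
import math


def band_rms(signal, fs, f_lo, f_hi):
    """RMS of the single-sided DFT components with f_lo <= f <= f_hi (Hz).

    DC and the Nyquist bin are excluded, so a sine of amplitude A that falls
    exactly on a bin inside the band contributes A / sqrt(2).
    """
    n = len(signal)
    mean_square = 0.0
    for k in range(1, (n + 1) // 2):
        if f_lo <= k * fs / n <= f_hi:
            X = sum(x * cmath.exp(-2j * math.pi * k * i / n)
                    for i, x in enumerate(signal))
            mean_square += 2 * abs(X) ** 2 / n ** 2  # Parseval, one side doubled
    return math.sqrt(mean_square)


def level_db(rms, ref=1.0):
    """RMS value in dB relative to ref; -inf for a zero signal."""
    return float("-inf") if rms <= 0 else 20 * math.log10(rms / ref)


def inspect_part(signal, fs, band, limit_db, ref=1.0):
    """PASS if the band level is at or below the limit, otherwise FAIL."""
    level = level_db(band_rms(signal, fs, *band), ref)
    return "PASS" if level <= limit_db else "FAIL"


if __name__ == "__main__":
    fs, n = 1000.0, 200  # hypothetical 1 kHz sampling, 0.2 s record
    tone = [math.sin(2 * math.pi * 100.0 * i / fs) for i in range(n)]
    print(inspect_part(tone, fs, band=(50.0, 200.0), limit_db=-6.0))
```

A production system would use an FFT rather than this direct O(n²) DFT sum; the direct form is used here only to keep the example dependency-free.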

All of this is pulled together in a software-driven quality inspection system that allows manufacturers to quickly and accurately determine if a motor or gearbox passes the quality criteria and meets the standards of the industry and customer.

Troubleshooting Equipment Failure

Despite the best maintenance practices, all equipment will likely fail at some point. Test systems help identify the location and extent of existing problems so that decisions about corrective action or replacement can be made.

From CNC equipment to robotics, today’s manufacturing floors are populated with equipment driven by servo motors. And when a servo isn’t working, production is severely impacted.

Mitchell Electronics, Inc. provides test systems that identify servo motor errors. When there is a problem with a machine, the system pulls data to determine whether the servo motor or encoder may be part of the problem. Repair shops and manufacturers often use the system as a triage step to ensure that repairs are focused in the right area. The system is also used to test and realign the encoder on the motor after the repair.

The software interprets the signals from the encoder and turns them into human-readable data, such as a count value. By turning the encoder shaft, the user can determine whether it is counting properly. The software displays these counts as a mechanical angle, a logical way to understand the position of the encoder shaft as it turns.
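
Converting counts to a mechanical angle is simple proportional arithmetic, and the same counts give a basic check on whether the encoder is counting properly. The sketch below assumes an incremental encoder with a known, hypothetical resolution in counts per revolution; it is an illustration, not Mitchell Electronics' software.

```python
"""Sketch: encoder counts to mechanical angle, plus a simple count check.

Assumes an incremental encoder with a known resolution in counts per
revolution (CPR); the 4096 CPR used below is a hypothetical value.
"""


def mechanical_angle(count, counts_per_rev):
    """Shaft angle in degrees, wrapped into [0, 360)."""
    return (count % counts_per_rev) * 360.0 / counts_per_rev


def counts_ok(start_count, end_count, revolutions, counts_per_rev, tol=0):
    """True if turning the shaft a whole number of revolutions changed the
    count by revolutions x CPR, within tol counts (sign gives direction)."""
    expected = revolutions * counts_per_rev
    return abs((end_count - start_count) - expected) <= tol


if __name__ == "__main__":
    cpr = 4096
    print(mechanical_angle(1024, cpr))         # a quarter turn: 90.0
    print(counts_ok(100, 100 + 4094, 1, cpr))  # two counts missing: False
```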

The software also allows users to measure how many magnetic poles a servo motor has. Once this pole count is entered into the software, it displays an electrical angle. This makes the alignment process more repeatable and more consistent across various manufacturers and models of motors and encoders.
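
The electrical angle follows from the mechanical angle and the pole count: a motor with p poles has p/2 pole pairs, so the electrical angle advances p/2 times as fast as the shaft, θe = (p/2 × θm) mod 360°. The sketch below assumes that relationship and a hypothetical alignment target of 0° electrical; actual alignment targets vary by motor and drive manufacturer.

```python
"""Sketch: electrical angle from encoder counts and motor pole count.

Assumes the usual relation theta_e = (poles / 2) * theta_m, wrapped to
[0, 360). The 0-degree alignment target is hypothetical; real targets
depend on the motor and drive manufacturer.
"""


def electrical_angle(count, counts_per_rev, poles):
    """Electrical angle in degrees for a motor with the given pole count."""
    if poles < 2 or poles % 2:
        raise ValueError("pole count must be an even number >= 2")
    mech = (count % counts_per_rev) * 360.0 / counts_per_rev
    return (mech * (poles // 2)) % 360.0


def alignment_error(count, counts_per_rev, poles, target_deg=0.0):
    """Signed electrical-angle error from the target, in [-180, 180)."""
    err = electrical_angle(count, counts_per_rev, poles) - target_deg
    return (err + 180.0) % 360.0 - 180.0


if __name__ == "__main__":
    # Hypothetical 8-pole motor (4 pole pairs) with a 4096-count encoder.
    print(electrical_angle(256, 4096, 8))  # 22.5 deg mechanical, 90.0 electrical
```

The example shows why entering the pole count matters: the same 22.5° of shaft rotation corresponds to a quarter of an electrical cycle on an 8-pole motor.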

Maximizing Production

Manufacturers invest heavily in production equipment, and they expect output that justifies those investments. When output falls short, they want to know why. Is the problem related to the operator, the equipment, the process, all of the above or none of the above? Today’s smart factories contain a wealth of information; one just needs to know how and where to find it.

A cloud-based application from Worximity delivers real-time, actionable insights directly from equipment on the production floor. The software focuses on Overall Equipment Effectiveness (OEE), which it measures by analyzing three aspects of production equipment: availability, performance and quality. It calculates these metrics from real-time data collected from both legacy and modern machinery through sensors, direct PLC connections and API integration with back-office systems.
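
OEE is conventionally computed as the product of its three factors. The sketch below uses the common textbook definitions (availability = run time / planned time, performance = ideal cycle time × units produced / run time, quality = good units / units produced); it is not Worximity's implementation, and the shift figures are hypothetical.

```python
"""Sketch: Overall Equipment Effectiveness (OEE) from one shift's data.

Uses the common textbook definitions; the shift figures are hypothetical.
"""
from dataclasses import dataclass


@dataclass
class ShiftData:
    planned_min: float      # planned production time, minutes
    downtime_min: float     # unplanned stops during planned time, minutes
    ideal_cycle_min: float  # ideal time to make one unit, minutes
    total_count: int        # units produced
    good_count: int         # units that passed quality checks


def oee(s: ShiftData):
    """Return (availability, performance, quality, oee) as fractions."""
    run_min = s.planned_min - s.downtime_min
    if run_min <= 0 or s.total_count <= 0:
        raise ValueError("no run time or no output in this shift")
    availability = run_min / s.planned_min
    performance = s.ideal_cycle_min * s.total_count / run_min
    quality = s.good_count / s.total_count
    return availability, performance, quality, availability * performance * quality


if __name__ == "__main__":
    shift = ShiftData(planned_min=500, downtime_min=50, ideal_cycle_min=0.5,
                      total_count=810, good_count=729)
    a, p, q, total = oee(shift)
    print(f"A={a:.1%} P={p:.1%} Q={q:.1%} OEE={total:.1%}")
```

In this example each factor is 90%, yet OEE is only about 72.9%, which illustrates how three individually respectable figures compound into a noticeably lower overall number.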

The solution also tracks and interprets downtime events, showing how often production is interrupted and for how long. This analysis reveals persistent problems that erode production efficiency and translate into measurable performance losses.
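
The frequency and duration of stops can be summarized from a simple event log by grouping stops by reason. The sketch assumes a hypothetical log of (reason, minutes) pairs; a real system would also carry timestamps and machine identifiers.

```python
"""Sketch: summarize downtime frequency and duration by stop reason.

The event log format and reason names are hypothetical.
"""
from collections import defaultdict


def summarize_downtime(events):
    """Group (reason, minutes) stop events into frequency and duration stats.

    Returns a list of (reason, stop_count, total_min, mean_min) sorted by
    total downtime, largest first, so the biggest losses surface at the top.
    """
    totals = defaultdict(float)
    counts = defaultdict(int)
    for reason, minutes in events:
        totals[reason] += minutes
        counts[reason] += 1
    rows = [(r, counts[r], totals[r], totals[r] / counts[r]) for r in totals]
    return sorted(rows, key=lambda row: row[2], reverse=True)


if __name__ == "__main__":
    # Hypothetical stop log for one line: (reason, minutes stopped).
    log = [("jam", 5), ("changeover", 30), ("jam", 7), ("no material", 12), ("jam", 4)]
    for reason, stops, total, mean in summarize_downtime(log):
        print(f"{reason:12s} stops={stops} total={total:.0f} min mean={mean:.1f} min")
```

Sorting by total minutes highlights the largest losses, while the stop count separates frequent short interruptions (the jams here) from rare long ones (the changeover).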

With this data, manufacturers can make informed, data-driven decisions, pinpoint improvement opportunities and devise specific strategies to boost operational efficiency. The approach not only improves current productivity but also fosters a culture of continuous improvement, which is crucial for staying competitive in today’s manufacturing landscape.

Putting Data to Work

Machinery, equipment and other products hold all the information needed to maximize their effectiveness, detect flaws, correct problems and create better versions. Before the advent of software and systems, accessing, interpreting and applying that information was time-consuming, costly and largely subjective.

Thanks to robust software applications and the systems they fuel, we have the means to capture and translate raw data into meaningful insight and unprecedented levels of quality, innovation and performance.

Robert Farrell is the owner of Farrell MarCom, LLC and co-founder of Revolution in Simulation.

To join the conversation, and become an exclusive member of Machine Design, create an account today!
