Machine Learning “Fixes” 3D-Printed Metal Parts—Before They’re Built

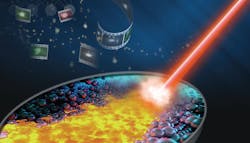

For years, engineers at Lawrence Livermore National Laboratory have used sensors and imaging techniques to analyze the physics and processes behind metal 3D printing in order to build high-quality metal parts the first time, every time. Now, they are leveraging machine learning to process data obtained during 3D builds in real time, detecting within milliseconds whether a build will be high quality. More precisely, they are developing convolutional neural networks (CNNs), a type of algorithm commonly used to process images and videos, to predict whether a part will be good by looking at as little as 10 milliseconds of video.

Until now, sensor data taken while 3D printing a metal part was analyzed only after the part was finished, which was expensive, and part quality could be determined only long after the build, explains principal investigator and LLNL researcher Brian Giera. For parts that take days to weeks to print, CNNs help engineers better understand the printing process and let them correct or adjust it in real time if necessary.

LLNL researchers developed the neural networks using about 2,000 video clips of melted laser tracks made under varying conditions, such as speed or power. They scanned the part surfaces with a tool that generated 3D height maps, then used that information to train the algorithms to analyze sections of video frames (the filtering operation applied across these sections is called a convolution). Doing this analysis manually would be too difficult and time-consuming for humans, according to Giera.
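To make the term "convolution" concrete, here is a minimal Python sketch of a single convolution step: a small filter slides over a frame, and each output value summarizes one section of that frame. The frame values, the hand-picked 3x3 filter, and the function name `convolve2d` are illustrative assumptions, not details of the LLNL networks, whose filters are learned from training data.

```python
def convolve2d(frame, kernel):
    """Slide `kernel` over `frame` (no padding, stride 1) and return the response map.

    As in most CNN libraries, the kernel is not flipped (cross-correlation).
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(frame) - kh + 1
    out_w = len(frame[0]) - kw + 1
    output = []
    for r in range(out_h):
        row = []
        for c in range(out_w):
            # Weighted sum over the kh x kw section whose top-left corner is (r, c).
            total = 0.0
            for i in range(kh):
                for j in range(kw):
                    total += frame[r + i][c + j] * kernel[i][j]
            row.append(total)
        output.append(row)
    return output


# Hypothetical 4x5 grayscale frame: a bright vertical melt track on a dark background.
frame = [
    [0, 0, 9, 0, 0],
    [0, 0, 9, 0, 0],
    [0, 0, 9, 0, 0],
    [0, 0, 9, 0, 0],
]

# Hand-picked filter that responds to bright vertical lines (a trained CNN learns its own).
vertical_line_kernel = [
    [-1, 2, -1],
    [-1, 2, -1],
    [-1, 2, -1],
]

response = convolve2d(frame, vertical_line_kernel)
```

The response is largest where the filter is centered on the track and negative on either side of it. A CNN stacks many such learned filters to turn raw frames into features it can use for prediction.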

LLNL researcher Bodi Yuan developed the algorithms that label the height maps of each build, then used the same model to predict the build track’s width, the width’s standard deviation, and whether the track was broken. Using the algorithms, researchers could take video of parts being printed and determine whether they would have acceptable quality. The neural networks detected whether parts would be continuous with 93% accuracy.
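The kinds of labels described here can be illustrated with a short Python sketch that derives a track's mean width, the standard deviation of that width, and its continuity from a height map. This is an illustration under stated assumptions, not LLNL's labeling algorithm: the height threshold, the pixel size, the `label_track` name, and the rule that a track counts as broken when any cross-section has zero width are all hypothetical.

```python
from statistics import mean, pstdev


def label_track(height_map, threshold=0.5, pixel_size_um=10.0):
    """Return (mean_width_um, width_std_um, is_continuous) for one scanned track.

    Each row of `height_map` is one cross-section along the scan direction.
    A pixel counts as part of the track if its height exceeds `threshold`.
    """
    widths = []
    for cross_section in height_map:
        raised_pixels = sum(1 for h in cross_section if h > threshold)
        widths.append(raised_pixels * pixel_size_um)
    # Assumed rule: a cross-section with no raised material means the track is broken.
    is_continuous = all(w > 0 for w in widths)
    return mean(widths), pstdev(widths), is_continuous


# Hypothetical height maps (arbitrary height units), 4 cross-sections x 5 pixels each.
good_track = [
    [0.0, 1.0, 1.2, 1.0, 0.0],
    [0.0, 1.1, 1.3, 0.9, 0.0],
    [0.0, 1.0, 1.2, 1.1, 0.0],
    [0.0, 0.9, 1.1, 1.0, 0.0],
]
broken_track = [
    [0.0, 1.0, 1.2, 1.0, 0.0],
    [0.0, 0.0, 0.1, 0.0, 0.0],  # gap: no material above the threshold
    [0.0, 1.0, 1.2, 1.1, 0.0],
    [0.0, 0.9, 1.1, 0.0, 0.0],
]

good_labels = label_track(good_track)
broken_labels = label_track(broken_track)
```

In a supervised setup, labels like these would be paired with the matching video clips so a network can learn to predict them from video alone.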

Some researchers at LLNL had spent years collecting various forms of real-time data on the laser powder-bed fusion metal 3D-printing process, including video, optical tomography, and acoustic data. While working with that group to analyze the data, Giera concluded it wouldn’t be possible to do all the data analysis manually and wanted to see if neural networks could simplify the task.

“We were collecting video anyway, so we just connected the dots,” Giera said. “Just like the human brain uses vision and other senses to navigate the world, machine-learning algorithms use all that sensor data to navigate the 3D printing process.”

The neural networks they developed could theoretically be used in other 3D printing systems, Giera said. Other researchers should be able to follow the same formula: creating parts under different conditions, collecting video, and scanning the parts to produce height maps, generating information that could be used with standard machine-learning techniques.

Giera said work still needs to be done to detect voids within parts that can’t be predicted with height map scans, but could be measured using ex situ X-ray radiography. Researchers will also try to create algorithms to incorporate other types of sensors besides video.