As artificial intelligence systems learn to better recognize and classify images, they are becoming highly reliable at scanning medical images and diagnosing diseases, such as skin cancers. But as good as they are at detecting patterns, AI won’t be replacing your doctor any time soon.

Even when used as a tool, image recognition systems still require an expert to label the data, and a lot of data at that: they need images of both healthy and sick patients. The algorithm finds patterns in the training data and uses them when it tries to identify new images. But it is time-consuming and costly for experts to obtain and label each image.

To address this issue, a research team at Carnegie Mellon University’s College of Engineering developed an active learning technique that uses limited data to achieve a high degree of accuracy in diagnosing diseases such as diabetic retinopathy or skin cancer.

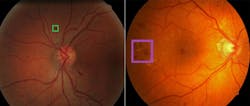

On the left is a retina containing a lesion known as an exudate (inside the box), which is associated with diabetic retinopathy. On the right is a retina containing a lesion known as a hemorrhage, which is also associated with diabetic retinopathy.

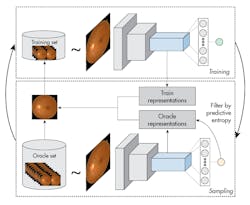

The researchers’ model begins with a set of unlabeled images and decides how many of them must be labeled to produce an accurate training set. An initial, randomly chosen set of images is labeled and plotted over a distribution, since images vary by patient age, gender, physical properties, and other parameters. To make good decisions based on this data, the samples must cover a broad region of that distribution. The algorithm then decides what data should be added to the dataset, given the current data distribution.
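As a rough illustration of that starting step, the sketch below draws a random seed set from a pool of unlabeled images to send to an expert, leaving the rest in the pool for later queries. The helper name `initial_seed`, the pool size, and the seed size are hypothetical choices for illustration, not code or values from the CMU team.

```python
import numpy as np


def initial_seed(pool_size: int, n_initial: int, seed: int = 0):
    """Hypothetical helper: randomly split a pool of unlabeled image indices into
    a seed set for the expert to label and the remaining unlabeled pool."""
    if not 0 < n_initial <= pool_size:
        raise ValueError("n_initial must be between 1 and pool_size")
    rng = np.random.default_rng(seed)
    order = rng.permutation(pool_size)        # shuffled indices 0..pool_size-1
    labeled = np.sort(order[:n_initial])      # sent to the expert for labeling
    unlabeled = np.sort(order[n_initial:])    # kept in the pool for later queries
    return labeled, unlabeled


# Illustrative sizes only; the article does not give a seed size.
labeled, unlabeled = initial_seed(pool_size=1000, n_initial=100)
print(len(labeled), len(unlabeled))  # 100 900
```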

The algorithm measures how good the distribution is after a set of new data is added to it, then selects new data that improves the overall dataset.
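The article does not say how the quality of the distribution is scored. One plausible, hypothetical measure is the mean distance from each pool image to its nearest labeled image in some feature space, where smaller means the labeled set covers the pool better. The sketch below greedily adds whichever candidate shrinks that gap most. This coverage heuristic is a swapped-in illustration, not MedAL's criterion; the feature vectors are assumed to be given, and `coverage_gap` and `best_addition` are hypothetical names.

```python
import numpy as np


def coverage_gap(pool_feats: np.ndarray, labeled_feats: np.ndarray) -> float:
    """Hypothetical score: mean distance from each pool point to its nearest
    labeled point. Lower means the labeled set covers the pool better."""
    # Pairwise Euclidean distances, shape (n_pool, n_labeled).
    d = np.linalg.norm(pool_feats[:, None, :] - labeled_feats[None, :, :], axis=-1)
    return float(d.min(axis=1).mean())


def best_addition(pool_feats: np.ndarray, labeled_idx: np.ndarray, candidate_idx) -> int:
    """Greedily pick the candidate whose addition most reduces the coverage gap."""
    scores = {}
    for c in candidate_idx:
        trial = np.append(labeled_idx, c)   # labeled set plus one candidate
        scores[c] = coverage_gap(pool_feats, pool_feats[trial])
    return min(scores, key=scores.get)


# Toy 1-D pool with two clusters; only index 0 (value 0.0) is labeled so far.
pool = np.array([[0.0], [1.0], [2.0], [10.0], [11.0], [12.0]])
print(best_addition(pool, np.array([0]), [1, 4]))  # 4
```

Starting from a label in the first cluster, the greedy step prefers a point from the still-uncovered second cluster, because that closes the larger gap.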

The process repeats until the dataset’s distribution is good enough to be used as the training set. This method, called MedAL (for medical active learning), was 80% accurate at detecting diabetic retinopathy using only 425 labeled images: a 32% reduction in the number of required labeled examples compared with the standard uncertainty sampling technique, and a 40% reduction compared with random sampling.
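As a worked check of what those percentages imply, assume each reduction is measured relative to the number of labeled images the baseline needed to reach the same 80% accuracy. The baselines would then have needed roughly:

$$
N_{\text{uncertainty}} = \frac{425}{1 - 0.32} = \frac{425}{0.68} = 625,
\qquad
N_{\text{random}} = \frac{425}{1 - 0.40} = \frac{425}{0.60} \approx 708.
$$

These baseline counts are inferred from the stated percentages under that assumption; they are not figures reported here.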

The process starts by training a model and using it to query examples from an unlabeled dataset; the queried examples are labeled and added to the training set. The researchers propose a new query function that is better suited to deep learning models. The model extracts features from both the oracle examples (the unlabeled pool from which new labels are requested) and the training set examples, and the algorithm filters out oracle examples that have low predictive entropy. Finally, it selects the oracle example that is, on average, the most distant in feature space from all training examples.
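The two-step query described above can be sketched as follows: compute each oracle example's predictive entropy, keep only the most uncertain ones, then return the one whose average feature-space distance to the training examples is largest. This is a minimal sketch, not the researchers' code. It assumes the model's class probabilities and feature vectors have already been computed, uses Euclidean distance, and replaces "filter out low entropy" with a hypothetical fixed top-`keep` cut; the function names are illustrative.

```python
import numpy as np


def predictive_entropy(probs: np.ndarray) -> np.ndarray:
    """Entropy of each row of class probabilities: (n, n_classes) -> (n,)."""
    p = np.clip(probs, 1e-12, 1.0)  # avoid log(0)
    return -(p * np.log(p)).sum(axis=1)


def medal_style_query(oracle_probs: np.ndarray, oracle_feats: np.ndarray,
                      train_feats: np.ndarray, keep: int) -> int:
    """Return the index (into the oracle pool) of the example to label next.

    1. Keep the `keep` oracle examples with the highest predictive entropy,
       i.e. drop the low-entropy (confident) ones. `keep` is an assumption.
    2. Among those, pick the one with the largest mean Euclidean distance
       to all training examples in feature space.
    """
    ent = predictive_entropy(oracle_probs)
    candidates = np.argsort(ent)[::-1][:keep]  # most uncertain first
    diffs = oracle_feats[candidates, None, :] - train_feats[None, :, :]
    mean_dist = np.linalg.norm(diffs, axis=-1).mean(axis=1)
    return int(candidates[np.argmax(mean_dist)])


# Toy data: 4 oracle examples, 2 classes, 2-D features, 2 training examples.
probs = np.array([[0.5, 0.5], [0.99, 0.01], [0.6, 0.4], [0.55, 0.45]])
oracle_feats = np.array([[0.5, 0.0], [100.0, 100.0], [5.0, 0.0], [2.0, 0.0]])
train_feats = np.array([[0.0, 0.0], [1.0, 0.0]])
print(medal_style_query(probs, oracle_feats, train_feats, keep=3))  # 2
```

In this toy run, example 1 is far from the training data but the model is confident about it, so the entropy step drops it; among the three most uncertain candidates, example 2 is farthest on average and is chosen. An entropy threshold instead of a fixed `keep` would fit the same structure.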

The researchers also tested the model on images of other diseases, including skin cancer and breast cancer, to show that it works on a variety of medical images. The method is generalizable because it focuses on how to use data strategically rather than on finding a specific pattern or feature of a disease. It could also be applied to other deep learning problems that face data constraints.

CMU’s active learning approach combines predictive entropy-based uncertainty sampling and a distance function on a learned-feature space to improve the selection of unlabeled samples. The method also overcomes the limits of traditional approaches by efficiently selecting only images that provide the most information about the overall data distribution, thus reducing computation cost and increasing speed and accuracy.
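The combined selection rule can be written compactly. The notation is introduced here for illustration: $U$ is the unlabeled (oracle) pool, $L$ the labeled training set, $p_\theta(y \mid x)$ the model's predicted class probabilities, $f$ its learned feature extractor, $d$ a distance in feature space, and $k$ a hypothetical cut-off standing in for "filter out low predictive entropy":

$$
H(x) = -\sum_{c} p_\theta(y = c \mid x)\,\log p_\theta(y = c \mid x)
$$

$$
U_k = \{\, x \in U : H(x) \text{ is among the } k \text{ largest values in } U \,\},
\qquad
x^{*} = \arg\max_{x \in U_k} \frac{1}{|L|} \sum_{x' \in L} d\big(f(x), f(x')\big)
$$

The entropy term supplies the uncertainty sampling, and the distance term favors examples that lie in regions of feature space the training set does not yet cover.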