Teaching Computers to Have Human-Like Perception

The human brain has the ability to perceive objects in 3D space. Not only does it recognize object characteristics like shape, form, density, and weight, but it also perceives how to interact with an object: how to manipulate it in order to move it or perform work. Researchers at MIT recently presented four papers on fundamental cognitive abilities that a computer or robot would need to navigate the world and identify the distinct objects that inhabit it.

Josh Tenenbaum is a professor of brain and cognitive sciences at MIT. He directs research on the development of intelligence at the Center for Brains, Minds and Machines, a multiuniversity, multidisciplinary project that seeks to explain and replicate human intelligence. Together with student Jiajun Wu, he has authored four papers that examine human intelligence and how computer systems can start to analyze and replicate human thought.

Being able to recreate human thought processes in machines can lead to advances in robotics and machine vision. “The common theme here is really learning to perceive physics,” says Tenenbaum. “That starts with seeing the full 3D shapes of objects, and multiple objects in a scene, along with their physical properties, like mass and friction, then reasoning about how these objects will move over time. Jiajun’s four papers address this whole space. Taken together, we’re starting to be able to build machines that capture more and more of people’s basic understanding of the physical world.”

The Center for Brains, Minds and Machines (CBMM) is a multi-institutional NSF Science and Technology Center dedicated to the study of intelligence—how the brain produces intelligent behavior and how we may be able to replicate intelligence in machines.

Machine Learning Like a Human

A common thread running through all of the papers is their approach to machine learning. In a typical machine-learning system, human analysts label the training data. The system attempts to learn which features of the data correspond to which labels, and it is rated on how well its responses match. In the papers from Tenenbaum and Wu, the system is instead trained to infer a physical model of the world, such as the 3D shapes of objects that are hidden from view. It then works backward, using the model to resynthesize the input data, and is rated on how well the reconstructed data matches the original.

The system uses visual images to build a 3D model of an object. Most systems strip away any occluding objects; filter out visual textures, reflections, and shadows that confound the data; and infer the shapes of unseen surfaces. What sets the system proposed by Tenenbaum and Wu apart is that it then rotates the 3D model in space and adds visual textures back in until it approximates the input data.
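The reconstruct-and-compare idea can be illustrated with a toy sketch. It is not the researchers' system: the scene here is a single filled circle on a small grid, `render` is a hypothetical stand-in for a graphics engine, and a brute-force search stands in for the trained network. The only point is that a candidate model is scored by how closely its re-rendered image matches the input.

```python
from itertools import product

SIZE = 16  # width and height of the toy image, in pixels


def render(cx, cy, r):
    """Hypothetical renderer: draw a filled circle as a grid of 0/1 pixels."""
    return [
        [1 if (x - cx) ** 2 + (y - cy) ** 2 <= r * r else 0 for x in range(SIZE)]
        for y in range(SIZE)
    ]


def reconstruction_error(image_a, image_b):
    """Count the pixels on which two images disagree."""
    return sum(
        a != b
        for row_a, row_b in zip(image_a, image_b)
        for a, b in zip(row_a, row_b)
    )


def infer_model(observed):
    """Search for the circle whose rendering best matches the observed image.

    Assumption: an exhaustive search replaces the learned inference step.
    """
    candidates = product(range(SIZE), range(SIZE), range(1, SIZE // 2))
    return min(
        candidates,
        key=lambda params: reconstruction_error(render(*params), observed),
    )


# The "input data": an image whose underlying model is hidden from the search.
observed = render(cx=9, cy=6, r=4)
best = infer_model(observed)
print(best, reconstruction_error(render(*best), observed))
```

A learned system would replace the exhaustive search with a network that proposes the model directly, but the scoring step, re-render and compare with the input, would play the same role.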

Training the system requires data that includes both visual images and 3D models of the objects depicted in the images. Constructing accurate 3D models of the objects depicted in real photographs is time-consuming, so the researchers initially train their system on synthetic data, in which the visual image is generated from the 3D model rather than vice versa. Once trained on synthetic data, the system can be fine-tuned using real data.

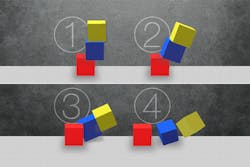

As part of an investigation into the nature of human physical intuitions, MIT researchers trained a neural network to predict how unstably stacked blocks would respond to the force of gravity.

Modeling Intuitiveness

The fourth paper, by Tenenbaum and Wu along with William Freeman, the Perkins Professor of Electrical Engineering and Computer Science, and colleagues at DeepMind and Oxford University, proposes a computer recognition system that models a human’s intuitive understanding of the physical forces acting on objects in the world. The paper assumes that the system has already deduced the objects’ 3D shapes; specifically, it assumes the system has already learned the shapes of a ball and a cube.

The system is trained to perform two tasks. The first is to estimate the velocities of balls traveling on a billiard table and predict how they will behave after a collision. The second is to analyze a static image of stacked cubes and determine whether they will fall and where the cubes will land if they do.
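To make the second task concrete, here is a minimal sketch of what "will the stack fall?" means in the simplest case. It is a hand-written 2D rule, not the trained network from the paper, and the `Block` class and its fields are assumptions made for illustration. The rule checks, at each interface, whether the combined center of mass of everything above sits over the contact area below. It does not attempt the harder part of the task, predicting where the cubes land.

```python
from dataclasses import dataclass


@dataclass
class Block:
    x: float          # horizontal position of the block's center
    width: float
    mass: float = 1.0


def is_stable(stack):
    """Return True if a bottom-to-top stack of blocks stays standing.

    Simplified 2D rule: at every interface, the combined center of mass of
    all blocks above must lie over the contact area with the block below.
    """
    for i in range(len(stack) - 1):
        lower, upper = stack[i], stack[i + 1]
        above = stack[i + 1:]
        total_mass = sum(b.mass for b in above)
        center_of_mass = sum(b.x * b.mass for b in above) / total_mass

        # Contact interval between the supporting block and the one resting on it.
        left = max(lower.x - lower.width / 2, upper.x - upper.width / 2)
        right = min(lower.x + lower.width / 2, upper.x + upper.width / 2)
        if not (left <= center_of_mass <= right):
            return False
    return True


aligned = [Block(0.0, 1.0), Block(0.1, 1.0), Block(0.2, 1.0)]
leaning = [Block(0.0, 1.0), Block(0.4, 1.0), Block(0.8, 1.0)]
print(is_stable(aligned), is_stable(leaning))
```

In the leaning stack, the two upper blocks have a combined center of mass at about 0.6, which lies past the bottom block's edge at 0.5, so the rule reports that the stack will topple.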

Wu created a representational language he calls Scene XML, which can quantitatively characterize the relative positions of objects in a visual scene. The system first learns to describe the input data in that language and then feeds that description into a physics engine.

Physics engines are a staple of both computer animation, where they generate the movement of clothing, falling objects, and the like, and scientific computing, where they’re used for large-scale physical simulations. Here, the physics engine models the physical forces acting on the represented objects and predicts the motions of the balls and boxes. Those predictions are then rendered by a graphics engine, and the output is compared with the source images. The researchers train their system on synthetic data before refining it with real data.
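The describe, simulate, and compare pipeline can be sketched in a few lines. Everything specific here is an assumption for illustration: the XML tags and attributes are invented and are not the actual Scene XML format, `step` is a toy stand-in for a physics engine that ignores ball-to-ball collisions, and the comparison is made on positions rather than on rendered images.

```python
import xml.etree.ElementTree as ET

# Invented schema for illustration only; not the actual Scene XML format.
SCENE = """
<scene width="10" height="5">
  <ball id="cue" x="1.0" y="2.0" vx="3.0" vy="0.0"/>
  <ball id="eight" x="6.0" y="4.0" vx="0.0" vy="2.0"/>
</scene>
"""


def parse_scene(text):
    """Read the table size and each ball's position and velocity."""
    root = ET.fromstring(text.strip())
    size = (float(root.get("width")), float(root.get("height")))
    balls = {
        b.get("id"): [float(b.get(k)) for k in ("x", "y", "vx", "vy")]
        for b in root.findall("ball")
    }
    return size, balls


def step(size, balls, dt):
    """Toy physics engine: straight-line motion with reflection off the walls."""
    moved = {}
    for name, (x, y, vx, vy) in balls.items():
        x, y = x + vx * dt, y + vy * dt
        if not 0.0 <= x <= size[0]:
            x = -x if x < 0.0 else 2 * size[0] - x
            vx = -vx
        if not 0.0 <= y <= size[1]:
            y = -y if y < 0.0 else 2 * size[1] - y
            vy = -vy
        moved[name] = [x, y, vx, vy]
    return moved


def position_error(predicted, observed):
    """Sum of squared distances between predicted and observed positions."""
    return sum(
        (predicted[n][0] - ox) ** 2 + (predicted[n][1] - oy) ** 2
        for n, (ox, oy) in observed.items()
    )


size, balls = parse_scene(SCENE)
predicted = step(size, balls, dt=1.0)
observed = {"cue": (4.0, 2.0), "eight": (6.0, 4.0)}  # made-up "next frame"
print(predicted, position_error(predicted, observed))
```

An error of zero means the scene description, including the estimated velocities, accounts for the next frame. A nonzero error is the kind of signal that could be used to adjust the description.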

"The key insight behind their work is utilizing forward physical tools—a renderer, a simulation engine, trained models, sometimes—to train generative models," says Joseph Lim, an assistant professor of computer science at the University of Southern California. "This simple yet elegant idea combined with recent state-of-the-art deep-learning techniques showed great results on multiple tasks related to interpreting the physical world."