Layer-Driven Valve Design Enables Production of Soft Robotics at Scale

Design engineers in most industries are looking for smart, programmable materials. But finding solutions that are scalable and adaptive to different environments is always a challenge.

One challenge is that the technology that makes a material reactive has to incorporate robotics. Another is that the constituent mechanical components, such as actuators, must be assembled individually.

A research group at Carnegie Mellon’s Human-Computer Interaction Institute is developing physical, shape-changing surfaces that can be used for tangible interfaces, tactile displays and interactive environments.

They looked to emerging trends in mechanical engineering, such as the shift from multi-piece mechanisms to composites, to develop an alternative framework called layer-driven design. In a recently published paper, the authors describe how layer-driven design is used to build programmable pneumatic surfaces.

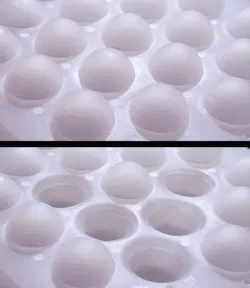

By stacking bulk-fabricated layers, the researchers were able to sidestep the manufacturing steps that would otherwise be repeated for each of the many actuators that make up the surface.

Instead of treating actuators as separate modules that need to be linked together, they developed a method for fabricating a stack of layers all at once. The layers are bulk-fabricated through laser cutting, photo-etching, 3D printing and robotic placement. The result is a fabrication method that enables the production of dynamic, discretely actuated surfaces at multiple scales.

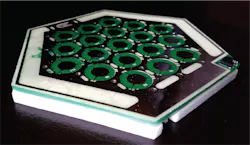

The Carnegie Mellon researchers introduce an example of a composite material (referred to as Stoma-Board) in which an array of electrostatic valves drives the transducers. These valves communicate digitally with adjacent valves and regulate the flow of air between a pneumatic transducer layer and a global reservoir. The layered configuration can support tactile patterns, soft robotic joints and pneumatic mechanisms, they noted.

Generally, there are two categories of programmable surfaces: dynamic matter, referring to a collection of actuators that can be randomly configured, and morphing materials, referring to shape-changing capabilities programmed into the fabrication process. For the layer-driven system, however, the researchers used a collection of actuators that exploit composite material fabrication techniques associated with morphing materials.

“Usually, these programmable surfaces are incredibly complex (requiring dozens of mechanical pieces), but we’ve come up with a new, inexpensive way to build them,” said Jesse T. Gonzalez, co-author and an HCI researcher at Carnegie Mellon. Functionality arises from a stacked configuration of bulk-fabricated layers, rather than the assembly of many individual parts.

The valve operates on a simple principle: When a large voltage is applied, the valve diaphragm clings to the top of the cell and allows air to pass through. When the voltage is removed, the diaphragm covers the valve outlet and blocks air flow.
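The open/closed behavior described above can be illustrated with a small toy model. This is only a sketch, not the researchers' control code: the class name `ElectrostaticValve`, the helper `open_pattern` and the `PULL_IN_VOLTAGE` value are all hypothetical, since the article says only that "a large voltage" is applied.

```python
from dataclasses import dataclass

# Hypothetical threshold: the article gives no figure, only "a large voltage".
PULL_IN_VOLTAGE = 300.0  # volts, illustrative value only


@dataclass
class ElectrostaticValve:
    """Toy model of one valve cell: energized = open, de-energized = closed."""

    voltage: float = 0.0

    @property
    def is_open(self) -> bool:
        # With voltage applied, the diaphragm clings to the top of the cell
        # and uncovers the outlet; without it, the diaphragm blocks the outlet.
        return self.voltage >= PULL_IN_VOLTAGE

    def apply(self, voltage: float) -> None:
        self.voltage = voltage

    def release(self) -> None:
        self.voltage = 0.0


def open_pattern(rows, cols, active):
    """Build a rows x cols grid of valves and energize the cells in `active`."""
    grid = [[ElectrostaticValve() for _ in range(cols)] for _ in range(rows)]
    for r, c in active:
        grid[r][c].apply(PULL_IN_VOLTAGE)
    return grid
```

For instance, `open_pattern(2, 3, [(0, 1), (1, 2)])` would energize two cells of a 2 x 3 array and leave the rest closed, which is roughly how a discretely actuated surface could raise individual dots of a tactile pattern.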

“The interactive surfaces are lightweight, fully programmable and easily scalable to large sizes (such as tables and walls),” said Gonzalez. “We can use them to build refreshable Braille displays, dynamic textures and soft robots.”

The paper describes in detail how to successfully construct an ultra-flat electrostatic valve. The trick, said Gonzalez, is to construct these surfaces from stackable layers (“like a sandwich”).

Designing with layers presents different challenges than designing with modules, but the results are scalable. The layer-driven programmable surfaces are building blocks for subsequent research, pointed out Gonzalez and co-author Scott E. Hudson, a professor at the Human-Computer Interaction Institute.

The researchers noted that the technique can be industrialized by integrating it with mature, automated manufacturing and electronics processes.

About the Author

Rehana Begg

Editor-in-Chief, Machine Design
