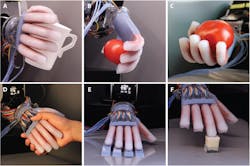

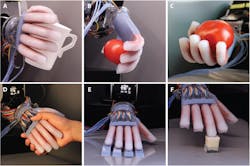

Researchers at Cornell University designed a flexible optical waveguide that serves as a force and strain sensor in a soft robotic hand. The hand can quickly and accurately detect textures, adjust its force on delicate objects, and continuously track its path of motion. In one of the many demonstrations of its performance, the robot picked the ripest tomato from a bunch of three.

The researchers, who published their work in the journal Science Robotics, said that robots can use haptics, or more simply a sense of touch, to change how they move based on external stimuli and their surroundings. That versatility could be especially useful for industrial robots performing pick-and-place operations in non-uniform or constantly changing environments. It could also help create more delicate prostheses and neuroprostheses, which communicate with the brain as their central control unit. (Read more at the end of this report.)

The researchers at Cornell integrated their haptic sensors into the soft robot, which uses pneumatic actuation to mimic the fluid motions of a human hand. The sensors were integrated into the top and bottom of each finger to sense bending, and into the fingertips to sense forces.

How the sensors work:

Cornell's optoelectronic sensors consist of an LED on the end of a stretchable fiber optic wire. Each fiber has a core and an outer sheath, and is 3D printed for easier manufacturing. The fibers act as waveguides, in that their structure allows only a certain wavelength of light to propagate through them. The waveguides are purposefully lossy, so that when they stretch, their structure changes to let more light escape through the outer sheath and only a certain wavelength propagate.

The continuously changing wavelength during bending is read by a photodetector at the end of the fiber and compared to a baseline signal to calculate the degree of movement. A similar effect occurs at the fingertips, where the sensors change structure as more pressure is put on an object. If the sensors detect a lower force on the object as the grip tightens, the hand holds back on squeezing to avoid squishing the object.
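The baseline comparison and the hold-back behavior described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the Cornell team's implementation: it treats the photodetector output as a single number, assumes a linear calibration from signal change to bend angle, and uses a simple threshold rule for easing off. The function names, the `gain_deg` calibration constant, and the `min_rise` threshold are all hypothetical.

```python
def relative_change(reading, baseline):
    """Fractional deviation of a photodetector reading from its baseline."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (baseline - reading) / baseline


def estimate_bend(reading, baseline, gain_deg=90.0):
    """Map the deviation to a bend angle in degrees.

    Assumption: a linear calibration, where gain_deg is a hypothetical
    constant that would be fitted for each finger.
    """
    return gain_deg * relative_change(reading, baseline)


def should_keep_squeezing(force_history, min_rise=0.05):
    """Decide whether the hand should tighten its grip further.

    Assumption: if the latest grip step raised the sensed fingertip force
    by less than min_rise, the object is yielding and the hand holds back.
    """
    if len(force_history) < 2:
        return True  # not enough samples to judge yet
    return (force_history[-1] - force_history[-2]) >= min_rise
```

In use, a control loop would call `estimate_bend` on each new reading to track finger position, and would check `should_keep_squeezing` before each increase in actuation pressure. A real controller would need per-sensor calibration and noise filtering, which this sketch leaves out.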

The hand could use the sensors to pick out different textures at the microscopic level. (Humans can feel texture at the nanoscopic level.) The video below shows a demonstration of the Cornell University robotic hand.

More Advances in Prosthetics

Advances in neuroscience are leading to prostheses that respond to voluntary motion commands from the amputee's brain. Communication from the brain to the prosthesis is enabled through wiring to the nervous system in the upper arm. Sending voluntary commands requires training by the amputee. Here, Les Baugh controls two prosthetic arms using the voluntary-control centers of his brain. In 2015, he took part in a DARPA experiment that made him the first bilateral amputee to train for control of two prosthetic arms. Research continues on sending haptic feedback through the nervous system so that it can be detected by the brain, which would then alter its commands to meet the needs of the physical environment.

About the Author

Leah Scully

Associate Content Producer

Leah Scully is a graduate of The College of New Jersey. She has a BS degree in Biomedical Engineering with a mechanical specialization. Leah is responsible for Machine Design’s news items that cover industry trends, research, and applied science and engineering, along with product galleries.