Voice Command: Multilingual Speech Recognition for the Shop Floor

Siri can tell you when to take your medication and Google can play your favorite tunes. All you need to do is give the voice command.

If voice assistants are transforming the way we live, it surely makes sense to get them to help out at work, too. Why wouldn’t we give machine operators or machinists a hands-free option when operating heavy machinery?

Voice-recognition human-to-machine technology has already proven it can deliver significant benefits. As the technology continues to evolve, and as long as training data is readily available, voice control becomes easier to integrate across the shop floor.

From automatic speech recognition systems that rely on cloud-based APIs to deep neural networks that process spoken language with advanced algorithms, advances in speech recognition are steadily translating into efficiency gains and cost savings.

Here’s one example: Fluent.ai is an embedded voice recognition solutions company that brings voice automation to factories. An operator wears a headset connected to an embedded voice recognition system and speaks commands that trigger the appropriate movement of equipment on the assembly line. Voice commands eliminate the need to press physical buttons at the workstation, which, according to the company, can take up to four seconds per workstation.

This is Industry 4.0 in action—digitally connecting the shop floor to practical opportunities for efficiency.

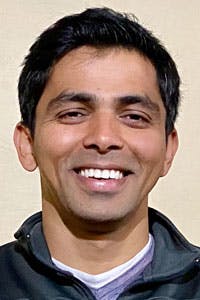

Vikrant Tomar, founder and CTO of Fluent.ai, launched the company in 2015. He earned his Ph.D. in automatic speech recognition at McGill University in Canada, where he worked on manifold learning and deep learning approaches to acoustic modeling.

In the following interview with Machine Design, the Montreal-based scientist discusses his company’s mission “to voice-enable the world’s devices, solving the barriers to global adoption of voice user interfaces by allowing everyone to communicate with their devices naturally in any language, accent or noise-level setting.”

Machine Design: What problem does speech recognition technology solve for factory operators?

Vikrant Tomar: The two main advantages of implementing speech recognition in factories are increased production efficiency and improved workplace ergonomics. Factory operators often deal with heavy objects and machinery. Currently, they must press physical buttons fixed at specific locations to move or manipulate these objects. Physically reaching and pressing the buttons is slow, and the repetitive motion can also have a negative ergonomic impact on workers’ health. It is much more convenient for workers to execute the appropriate movements just by using their voice.

MD: How does speech recognition work?

VT: Speech recognition refers to the process of capturing human speech and extracting meaningful, actionable information from it. There are currently two main approaches. The conventional method is a two-step approach that relies on cloud processing. Once a command is spoken, the audio is sent to the cloud, where it is transcribed to text. Natural Language Understanding (NLU) is then applied to the text to work out what the speaker wants, and that triggers an action from the device.
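The conventional two-step flow can be sketched in a few lines. This is a minimal illustration, not any vendor's pipeline: `cloud_transcribe` is a hypothetical stand-in that returns canned transcripts instead of calling a real cloud ASR API, and the keyword table in `text_to_intent` is an assumed toy NLU.

```python
from typing import Callable, Optional

# HYPOTHETICAL stand-in for a cloud ASR service. A real system would upload the
# audio and receive a transcript; here we look up canned text so the sketch runs offline.
def cloud_transcribe(audio_id: str) -> str:
    fake_transcripts = {
        "clip-001": "please raise the lift",
        "clip-002": "lower the lift now",
        "clip-003": "what time is it",
    }
    return fake_transcripts.get(audio_id, "")

# Toy keyword-based NLU (an assumption): maps transcript words to an intent label.
INTENT_KEYWORDS = {
    "lift_up": ("raise", "up"),
    "lift_down": ("lower", "down"),
}

def text_to_intent(text: str) -> Optional[str]:
    words = set(text.lower().split())
    for intent, keywords in INTENT_KEYWORDS.items():
        if words.intersection(keywords):
            return intent
    return None  # no recognized command in the transcript

def two_step_pipeline(audio_id: str,
                      transcribe: Callable[[str], str] = cloud_transcribe) -> Optional[str]:
    transcript = transcribe(audio_id)   # step 1: speech -> text (in the cloud)
    return text_to_intent(transcript)   # step 2: text -> intent (NLU)

print(two_step_pipeline("clip-001"))  # lift_up
```

Because the intent is derived only from the transcript, any transcription error carries straight into the NLU step, and both steps depend on the network round trip.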

At Fluent, we have cut this process down to a one-step, speech-to-intent solution. Our Edge AI technology does not depend on internet or cloud access and is therefore private by design. It understands the intent behind a voice command by analyzing the acoustics of the speech on the device. No data is sent to the cloud or stored on the device.

MD: What exactly distinguishes Fluent.ai’s speech-to-intent approach from speech-to-text?

VT: Fluent’s speech-to-intent technology is inspired by how we humans learn to speak and understand speech. There is no text transcription involved; instead, intent is extracted directly from the speech. As a result, our systems are lightweight: they use considerably fewer computational resources to extract the information related to a user’s desired action. This allows us to create solutions that run on very low-power processors while processing all of the user’s speech offline, on the device, without sending any data to the cloud.
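The interview does not describe Fluent.ai's model architecture beyond calling it AI. To make the idea of mapping acoustics straight to an intent concrete, the sketch below swaps in a much older technique, dynamic time warping (DTW) template matching, long used for small-vocabulary command recognition. It assumes feature extraction (for example, MFCC frames) happens elsewhere; the two-dimensional toy "features" and the intent names are hypothetical.

```python
import math
from typing import Dict, List, Sequence

Frame = Sequence[float]  # one feature vector per audio frame (e.g. MFCCs, assumed precomputed)

def frame_distance(a: Frame, b: Frame) -> float:
    # Euclidean distance between two feature frames.
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def dtw_distance(query: Sequence[Frame], template: Sequence[Frame]) -> float:
    # cost[i][j] = cheapest alignment of the first i query frames with the first j template frames.
    n, m = len(query), len(template)
    inf = float("inf")
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            d = frame_distance(query[i - 1], template[j - 1])
            cost[i][j] = d + min(cost[i - 1][j],      # query frame stretches over template frame
                                 cost[i][j - 1],      # template frame stretches over query frame
                                 cost[i - 1][j - 1])  # both advance together
    return cost[n][m]

def classify_intent(query: Sequence[Frame],
                    templates: Dict[str, List[Sequence[Frame]]]) -> str:
    # Pick the intent whose closest enrolled example has the lowest DTW cost.
    # No text transcript is produced at any point.
    best_intent, best_cost = "", float("inf")
    for intent, examples in templates.items():
        for example in examples:
            c = dtw_distance(query, example)
            if c < best_cost:
                best_intent, best_cost = intent, c
    return best_intent

# Hypothetical enrolled templates (toy 2-D features).
templates = {
    "lift_up": [[(0.0, 1.0), (1.0, 2.0), (2.0, 3.0)]],
    "lift_down": [[(2.0, 3.0), (1.0, 2.0), (0.0, 1.0)]],
}
query = [(0.1, 1.0), (0.9, 2.1), (1.0, 2.0), (2.1, 2.9)]  # a slower "lift_up"
print(classify_intent(query, templates))  # lift_up
```

DTW tolerates differences in speaking rate, which is why a four-frame utterance still matches a three-frame template. Modern systems replace the templates with a trained neural classifier, but the output is still an intent label rather than text.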

Offline processing is very important in industrial settings because of privacy and confidentiality concerns. Conventional systems, such as Google Assistant and Alexa, send all audio data to the cloud for processing, which puts the data at greater risk of hacks or leaks. Our on-device systems are also compact. For example, we have built a speech recognition system that supports 50 commands in both English and Mandarin and uses only 100 kB of RAM and 58 MHz of CPU processing.
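To put the 100 kB RAM figure in perspective, here is a back-of-envelope budget. The audio format is an assumption: 16 kHz, 16-bit mono PCM is common for speech, but the interview does not state what Fluent.ai uses. The 1-second buffer length is likewise illustrative.

```python
# Back-of-envelope RAM budget for an on-device command recognizer.
# ASSUMPTIONS (not from the interview): 16 kHz mono audio, 16-bit samples,
# a 1-second audio buffer, and "100 kB" read as 100,000 bytes.

SAMPLE_RATE_HZ = 16_000
BYTES_PER_SAMPLE = 2          # 16-bit PCM
BUFFER_SECONDS = 1.0
RAM_BUDGET_BYTES = 100 * 1000

def audio_buffer_bytes(sample_rate_hz: int, bytes_per_sample: int, seconds: float) -> int:
    # Raw PCM size = samples per second * bytes per sample * duration.
    return int(sample_rate_hz * bytes_per_sample * seconds)

buffer_bytes = audio_buffer_bytes(SAMPLE_RATE_HZ, BYTES_PER_SAMPLE, BUFFER_SECONDS)
remaining = RAM_BUDGET_BYTES - buffer_bytes
share = buffer_bytes / RAM_BUDGET_BYTES

print(f"raw audio buffer: {buffer_bytes} bytes ({share:.0%} of budget)")  # 32000 bytes (32% of budget)
print(f"left for features, activations and other state: {remaining} bytes")  # 68000 bytes
```

Under these assumptions one second of raw audio alone would take about a third of the budget, which suggests why compact recognizers tend to work on short frames rather than holding long recordings. On many microcontrollers, fixed model weights can also be stored in flash rather than RAM.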

Our systems also provide high recognition accuracy and robustness to noise and other background interference. Noise robustness and accuracy across accents are key Fluent.ai differentiators in factories, where background noise is heavy and the operator population is diverse.
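The interview does not say how Fluent.ai achieves noise robustness. One widely used training technique in general is data augmentation: mixing recorded background noise into clean training utterances at a controlled signal-to-noise ratio (SNR). A minimal sketch, with a synthetic tone and Gaussian noise standing in for real speech and factory recordings:

```python
import math
import random
from typing import List

def mean_power(signal: List[float]) -> float:
    return sum(x * x for x in signal) / len(signal)

def mix_at_snr(clean: List[float], noise: List[float], snr_db: float) -> List[float]:
    """Add `noise` to `clean`, scaled so the mixture has the requested SNR in dB.

    SNR_dB = 10 * log10(P_clean / P_scaled_noise), so the noise gain is
    sqrt(P_clean / (P_noise * 10 ** (snr_db / 10))).
    """
    if len(noise) < len(clean):
        raise ValueError("noise clip must be at least as long as the clean clip")
    noise = noise[: len(clean)]
    gain = math.sqrt(mean_power(clean) / (mean_power(noise) * 10 ** (snr_db / 10)))
    return [c + gain * n for c, n in zip(clean, noise)]

# Synthetic stand-ins: a 440 Hz tone at 16 kHz and white noise, both 1 second long.
random.seed(0)
clean = [math.sin(2 * math.pi * 440 * t / 16000) for t in range(16000)]
noise = [random.gauss(0.0, 1.0) for _ in range(16000)]
noisy = mix_at_snr(clean, noise, snr_db=5.0)
```

In practice one would draw the SNR from a range (including very low values for loud stations) so the model sees many noise levels during training.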

MD: What kinds of efficiencies has this technology introduced at the shop floor/operations level?

VT: Depending on a factory’s workflow, the efficiency gains from voice control can be significant. For instance, early results from Fluent.ai’s partnership with BSH Home Appliances Group, Europe’s largest manufacturer of connected home appliances, show major improvements in factory worker productivity, with the potential for 75-100% efficiency gains on the assembly line. The initial implementation cut the transition time from four seconds to 1.5 seconds. In the long run, these production time savings will be invaluable.
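The transition-time figures above lend themselves to a quick worked calculation. Only the 4 s and 1.5 s values come from the interview; the number of transitions per shift is a hypothetical placeholder.

```python
# Time savings from the quoted figures: one transition went from about 4 s
# (button press) to about 1.5 s (voice command).
# TRANSITIONS_PER_SHIFT is HYPOTHETICAL, chosen only for illustration.

BEFORE_S = 4.0
AFTER_S = 1.5
TRANSITIONS_PER_SHIFT = 1_000  # hypothetical

saved_per_transition = BEFORE_S - AFTER_S        # 2.5 s saved per transition
reduction = saved_per_transition / BEFORE_S      # 0.625, i.e. 62.5% shorter
speedup = BEFORE_S / AFTER_S                     # ~2.67x as many transitions per unit time
saved_per_shift_min = saved_per_transition * TRANSITIONS_PER_SHIFT / 60

print(f"saved per transition: {saved_per_transition:.1f} s ({reduction:.1%})")  # 2.5 s (62.5%)
print(f"transition rate: {speedup:.2f}x")                                      # 2.67x
print(f"saved per shift: {saved_per_shift_min:.1f} min")                       # 41.7 min
```

This covers only the transition step. The 75-100% line-level gain quoted above depends on the full assembly cycle, which the interview does not break down.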

MD: What are the main design and technology challenges with the use of speech-to-intent AI technology?

VT: One of the main challenges in developing speech AI models is data collection. All machine learning models require data to learn from. Voice commands, for example, can be very specific to a particular factory, which might require us to gather factory-specific audio data. Another challenge specific to factory floors is the diversity of languages and accents in the worker population. Workers often come from varied backgrounds, and creating models that accurately understand many different accents can be challenging.
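A single overall accuracy number can hide an accent group that the model serves poorly. A common evaluation practice is to break test results down by speaker group and flag groups below a target, which shows where more (or more targeted) data collection is needed. The group labels, threshold and results below are hypothetical, and this is not a description of Fluent.ai's internal process.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

# Each record: (accent_group, expected_intent, predicted_intent). Data is HYPOTHETICAL.

def accuracy_by_group(results: Iterable[Tuple[str, str, str]]) -> Dict[str, float]:
    correct: Dict[str, int] = defaultdict(int)
    total: Dict[str, int] = defaultdict(int)
    for group, expected, predicted in results:
        total[group] += 1
        if expected == predicted:
            correct[group] += 1
    return {g: correct[g] / total[g] for g in total}

def weakest_groups(acc: Dict[str, float], threshold: float) -> List[str]:
    # Groups below the target accuracy are candidates for additional data collection.
    return sorted(g for g, a in acc.items() if a < threshold)

results = [
    ("group_a", "lift_up", "lift_up"),
    ("group_a", "lift_down", "lift_down"),
    ("group_b", "lift_up", "lift_down"),
    ("group_b", "lift_down", "lift_down"),
]
acc = accuracy_by_group(results)
print(acc)                                   # {'group_a': 1.0, 'group_b': 0.5}
print(weakest_groups(acc, threshold=0.9))    # ['group_b']
```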

Background noise is a third main challenge; all speech recognition models suffer in its presence, and factory floors can be especially loud and acoustically challenging. Finally, the design, choice and positioning of the speech recognition hardware, such as microphones and compute units, also matter: the hardware needs to fit seamlessly into the workers’ existing environment.

A key advantage of Fluent.ai’s technology approach is that we have been able to develop speech recognition solutions for our customers that perform with high accuracy across a wide range of accents and noise environments, including factories. We continue to innovate to further mitigate the challenges of accents and noise.

MD: This technology is relatively new in the manufacturing/industrial space. How are speech recognition and voice command being developed or adapted to cope with the industrial environment?

VT: As I mentioned earlier, industrial environments pose some unique challenges for speech recognition. When it comes to factories, there is no one-size-fits-all solution; no two factories are identical. For speech recognition systems to be effective, we need to work closely with the partners who manage the factory; they are the experts.

Most importantly, we need to look at the whole solution, not just the speech recognition model. This includes the choice and setup of the microphones, how the speech recognition system is accessed, the specific commands required for the work environment, the specific noise conditions and even how many operators will be working in close proximity on the same floor.
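The elements listed above (microphones, how the system is accessed, commands, noise conditions, operator density) could be captured as a per-station configuration and reviewed before deployment. The sketch below is hypothetical: the field names, the `"always_listening"` and `"push_to_talk"` activation modes and the review heuristics are illustrative assumptions, not Fluent.ai's configuration format. The 85 dBA value echoes a common occupational noise action level but is used here only as an example trigger.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class MicrophoneSetup:
    kind: str                     # e.g. "headset" or "fixed array" (illustrative values)
    distance_to_mouth_cm: float

@dataclass
class StationVoiceConfig:
    station_id: str
    microphone: MicrophoneSetup
    commands: List[str]
    activation: str               # how the recognizer is engaged (hypothetical modes)
    noise_level_dba: float        # measured background noise at the station
    operators_nearby: int         # operators working within earshot

    def review(self) -> List[str]:
        # HYPOTHETICAL heuristics; real limits would come from on-site testing.
        issues = []
        if self.noise_level_dba >= 85 and self.microphone.kind != "headset":
            issues.append("loud station: consider a close-talk headset microphone")
        if self.operators_nearby > 1 and self.activation == "always_listening":
            issues.append("several operators nearby: cross-talk could trigger commands")
        if len({c.lower() for c in self.commands}) != len(self.commands):
            issues.append("duplicate command phrases")
        return issues

cfg = StationVoiceConfig(
    station_id="line3-station7",
    microphone=MicrophoneSetup(kind="fixed array", distance_to_mouth_cm=80.0),
    commands=["lift up", "lift down", "stop"],
    activation="always_listening",
    noise_level_dba=88.0,
    operators_nearby=3,
)
for issue in cfg.review():
    print(issue)
```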

At Fluent.ai, we have spent a tremendous amount of effort developing unique AI models that make our speech recognition systems inherently more robust to acoustic variations such as accents and background noise. Furthermore, working closely with our partners, we develop speech recognition solutions tailored to their specific industrial environments. As a result, our solutions meet industry performance standards for voice recognition accuracy while providing very low latency.

MD: What kinds of protocols are in place to ensure safety and security?

VT: Safety and security are of the utmost importance in industrial settings, especially when dealing with heavy machinery. Each Fluent.ai system is rigorously tested, both in-house and in the real-world manufacturing environment where it will be deployed, to ensure it meets the defined standards for accuracy and reliability.

User training and support are also key to ensuring safety and security; we support our customers with training. In addition, our solutions are reviewed by workplace safety officers within the customer’s organization to ensure compliance with safety protocols.

And finally, there should always be manual, hardware-based fail-safe overrides for critical functions. We design our voice control solutions to support existing and/or new manual overrides as deemed necessary for worker safety.
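Voice control should sit behind, never in place of, the hardware fail-safes described above. A minimal sketch of a software gate, assuming a recognizer that returns an intent with a confidence score: commands are dropped when a (simulated) e-stop input is engaged, when confidence falls below a threshold, or when the intent is not on the allowed list. The class name, the 0.85 threshold and the e-stop callback are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Dict

@dataclass
class Recognition:
    intent: str
    confidence: float  # 0.0-1.0 score from the recognizer (assumed to exist)

class VoiceCommandGate:
    """Executes recognized intents only when interlocks allow it.

    `estop_engaged` stands in for reading a hardwired emergency-stop input.
    In a real cell the e-stop circuit acts independently of this software;
    this gate is only an extra layer on top of it.
    """

    def __init__(self, estop_engaged: Callable[[], bool],
                 actions: Dict[str, Callable[[], None]],
                 min_confidence: float = 0.85):
        self.estop_engaged = estop_engaged
        self.actions = actions            # allow-list of intents the station may execute
        self.min_confidence = min_confidence

    def handle(self, rec: Recognition) -> str:
        if self.estop_engaged():
            return "blocked: e-stop engaged"
        if rec.confidence < self.min_confidence:
            return "ignored: low confidence"
        action = self.actions.get(rec.intent)
        if action is None:
            return "ignored: unknown intent"
        action()
        return f"executed: {rec.intent}"

# Usage with a simulated e-stop flag and a logging action.
log = []
estop = {"engaged": False}
gate = VoiceCommandGate(lambda: estop["engaged"], {"lift_up": lambda: log.append("lift_up")})
print(gate.handle(Recognition("lift_up", 0.95)))  # executed: lift_up
print(gate.handle(Recognition("lift_up", 0.40)))  # ignored: low confidence
estop["engaged"] = True
print(gate.handle(Recognition("lift_up", 0.99)))  # blocked: e-stop engaged
```

Rejecting low-confidence or unlisted intents errs toward doing nothing, which is usually the safer failure mode around heavy equipment.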

MD: What does the future of speech recognition hold? What applications are likely to gain ground?

VT: From a technological point of view, we are seeing considerable progress in the development of low-footprint, edge-based speech recognition solutions. Unlike voice assistants such as Alexa and Google Assistant, these edge-based solutions process all speech data on the device itself and therefore don’t need to send the user’s voice data to a cloud server. One key advantage of processing data offline is that it preserves user privacy and the confidentiality of sensitive information.

Working without depending on an internet connection also helps with creating systems with high reliability and very low response time. This also enables developing speech recognition solutions for novel use cases where consistent internet connectivity cannot be guaranteed. Fluent.ai is leading these innovations by developing speech recognition systems that can run on tiny, embedded devices.

From an applications point of view, the world has grown increasingly sensitive to high-touch surfaces (for example, in elevators, factories and ticket vending machines) since the COVID-19 pandemic began. Applications of speech recognition that reduce the need for people to touch shared surfaces will continue to grow in the coming years.

Speech recognition in homes is a consistently growing market as consumers become more comfortable using voice at home. However, more and more people are becoming wary of the privacy concerns associated with internet-connected voice assistants. This is pushing many companies to explore offline, cloud-free speech recognition solutions. Smart appliances with a voice-based user interface powered by an offline solution represent a market that will grow tremendously in the coming years.

Finally, factory settings are of course still one of the most exciting and high-impact application areas for speech recognition technology. Speech recognition as a tool to increase time savings and production efficiency while also improving worker ergonomics will be an integral part of the factory of the future.

About the Author

Rehana Begg

Editor-in-Chief, Machine Design

As Machine Design’s content lead, Rehana Begg is tasked with elevating the voice of the design and multi-disciplinary engineer in the face of digital transformation and engineering innovation. Begg has more than 24 years of editorial experience and has spent the past decade in the trenches of industrial manufacturing, focusing on new technologies, manufacturing innovation and business. Her B2B career has taken her from corporate boardrooms to plant floors and underground mining stopes, covering everything from automation & IIoT, robotics, mechanical design and additive manufacturing to plant operations, maintenance, reliability and continuous improvement. Begg holds an MBA, a Master of Journalism degree, and a BA (Hons.) in Political Science. She is committed to lifelong learning and feeds her passion for innovation in publishing, transparent science and clear communication by attending relevant conferences and seminars/workshops.

Follow Rehana Begg via the following social media handles:

X: @rehanabegg

LinkedIn: @rehanabegg and @MachineDesign
