Four Steps to Successful AI Use in Manufacturing

Manufacturers can benefit from AI in a variety of ways, such as improving production, quality control and efficiency. Although AI offers manufacturers several new applications, companies get the most value from it only when they apply it across the whole manufacturing process.

This means manufacturing engineers need to focus on four key aspects of AI—data preparation, modeling, simulation and test, and deployment—to successfully use it in a nonstop manufacturing process.

No Need to be an AI Expert

Engineers may expect it will take a considerable amount of time to develop AI models, but this is often not true. Modeling is an important step in the workflow process, but not the end goal. For successful AI use, determining any issues at the start of the process is key. This lets engineers know which aspects of the workflow to focus time and resources on to get the best results.

There are two points to consider when discussing the workflow:

- The manufacturing system is large and complex, and AI is only a piece of it. As such, AI needs to work with all the other working parts of the manufacturing line and in all scenarios. Part of this is using industrial communication protocols such as OPC UA, along with other machine software such as controls, supervisory logic and HMI, to collect data from sensors on equipment.

- In this scenario, engineers are already set up for success when incorporating AI as they already know the equipment regardless of whether they have extensive AI experience. In other words, if they are not AI experts, they still can leverage their expertise to successfully add AI to the workflow.

The AI-Driven Workflow

There are four steps to building an AI-driven workflow:

Data preparation. Projects are more likely to fail when there is no good data to train the AI model. So, data preparation is crucial. Bad data can leave engineers wasting hours figuring out why the model won’t work.

Data preparation is often the most time-consuming step, but it is an important one. Engineers should start with as much clean, labeled data as possible and focus on the data being fed into the model rather than on improving the model.

For example, engineers should concentrate on pre-processing and ensuring data fed into the model is correctly labeled rather than tweaking parameters and fine-tuning the model. This ensures data is understood and processed by the model.
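A minimal sketch of this kind of pre-processing and label checking might look like the following. It is written in plain Python for illustration rather than with the MATLAB tooling discussed in this article, and the field name `vibration_mm_s`, the label set and the plausible sensor range are all hypothetical assumptions.

```python
import math

# Hypothetical label set for a vibration-monitoring task (an assumption).
VALID_LABELS = {"normal", "worn", "faulty"}


def clean_records(records, lo=0.0, hi=50.0):
    """Split raw records into usable training rows and rejected rows.

    A record is kept only if its reading is a finite number inside the
    assumed plausible range [lo, hi] and its label, after trimming and
    lower-casing, is one of VALID_LABELS.
    """
    kept, rejected = [], []
    for rec in records:
        value = rec.get("vibration_mm_s")
        label = str(rec.get("label", "")).strip().lower()
        ok = (
            isinstance(value, (int, float))
            and math.isfinite(value)
            and lo <= value <= hi
            and label in VALID_LABELS
        )
        if ok:
            kept.append({"vibration_mm_s": float(value), "label": label})
        else:
            rejected.append(rec)  # keep rejects so they can be reviewed
    return kept, rejected


# Made-up example rows, not real field data.
raw = [
    {"vibration_mm_s": 2.1, "label": "normal"},
    {"vibration_mm_s": float("nan"), "label": "normal"},  # sensor dropout
    {"vibration_mm_s": 7.8, "label": " Worn "},           # label needs normalizing
    {"vibration_mm_s": 900.0, "label": "faulty"},         # outside plausible range
    {"vibration_mm_s": 11.4, "label": "brokn"},           # misspelled label
]

kept, rejected = clean_records(raw)
print(len(kept), "kept,", len(rejected), "rejected")
```

Returning the rejected rows instead of silently dropping them lets an engineer who knows the equipment decide whether a reading is a true fault or a data problem.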

Another challenge is posed by the differences between machine operators and machine builders. The former typically have access to the equipment's operating data, while the latter need that data to train AI models. To make sure machine operators share data with machine builders, whose customers they are, the two should develop agreements and business models that govern that sharing.

Caterpillar, a construction equipment manufacturer, provides a good example of the importance of data preparation. It collects large volumes of field data which, while necessary for accurate AI modeling, can mean time-intensive data cleaning and labeling. The company has found success in streamlining the process by using MATLAB. It helps the company develop clean, labeled data that can then be fed into machine-learning models to draw useful insights from field machinery. Additionally, the process is scalable and flexible for users who have domain expertise but aren’t AI experts.

AI modeling. This stage starts after data is clean and properly labeled. In effect, it’s when the model learns from the data. Engineers will know they have a successful modeling stage when they have an accurate and robust model that can make intelligent decisions based on the input. At this stage, engineers also need to decide whether machine learning, deep learning, or a combination of the two will produce the most accurate result.

For the modeling stage, regardless of whether deep learning or machine learning models are used, it is important to have access to several algorithms for AI workflows, such as classification, prediction, and regression. As a starting point, a variety of prebuilt models made by the broader community can be helpful. Engineers can also use flexible tools such as MATLAB and Simulink.

It’s important to note that although algorithms and prebuilt models are a good start, engineers should find the most efficient path to their specific objective by using the algorithms and examples of others in their field. That’s why MATLAB offers hundreds of different examples for building AI models that span many domains.

Tracking changes and recording training iterations is also crucial. Tools such as Experiment Manager can help by showing which parameters lead to the most accurate model and to reproducible results.
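To make the idea of recording training iterations concrete, here is a small sketch in plain Python. It is not Experiment Manager or its API. The `ExperimentLog` class, the parameter names and the accuracy figures are hypothetical placeholders chosen only to show the bookkeeping.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class Run:
    run_id: int
    params: dict
    accuracy: float


class ExperimentLog:
    """Keeps every training run so results can be compared and reproduced."""

    def __init__(self):
        self.runs = []

    def record(self, params, accuracy):
        # Copy params so later edits by the caller do not alter the log.
        run = Run(run_id=len(self.runs) + 1, params=dict(params), accuracy=accuracy)
        self.runs.append(run)
        return run

    def best(self):
        # The run with the highest recorded accuracy.
        return max(self.runs, key=lambda r: r.accuracy)

    def to_json(self):
        # Serialize the full history, for example to store next to the model.
        return json.dumps([asdict(r) for r in self.runs], indent=2)


log = ExperimentLog()
# Accuracy values below are placeholders for illustration, not measured results.
log.record({"learning_rate": 0.1, "epochs": 10}, accuracy=0.81)
log.record({"learning_rate": 0.01, "epochs": 30}, accuracy=0.93)
log.record({"learning_rate": 0.001, "epochs": 30}, accuracy=0.88)

best = log.best()
print("best run:", best.run_id, best.params)
```

Storing the parameters with each result is what makes a run repeatable later: the winning configuration can be retrained exactly instead of being reconstructed from memory.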

Simulate and test. This step ensures an AI model is working correctly. AI models act as part of a larger system and need to work with every other piece in the system. For example, in manufacturing, an AI model might support predictive maintenance, dynamic trajectory planning or visual quality inspection.

The rest of the machine software includes controls, supervisory logic and other components. Simulation and testing let engineers know that parts of the model work as expected, both by themselves and with other systems. Only when it can be proven that the model works as intended, and with enough validity to reduce risks, should the model be used in the real world.

No matter the situation, the model must respond the way it is supposed to. There are several questions engineers should ask at this stage before the model is used:

- Does the model have a high level of accuracy?

- In each scenario, is the model performing as anticipated?

- Are all edge cases being covered?

Tools such as Simulink let engineers check that the model works as programmed for each anticipated case before it is used on the equipment. This helps avoid spending time and money on redesigns. These tools also help establish a high level of trust by successfully simulating and testing expected cases for the model and confirming it meets expected goals.
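The three questions above can be turned into a simple scenario table that is run before the model goes near the equipment. The sketch below uses plain Python rather than Simulink, and `wear_alarm` is a hypothetical threshold rule standing in for a trained model. The scenarios and the 7.0 threshold are assumptions for illustration.

```python
def wear_alarm(vibration_mm_s, threshold=7.0):
    """Stand-in for a trained model: raise an alarm at or above the threshold.

    Missing or negative readings also raise an alarm so that a sensor
    fault is never silently treated as healthy operation.
    """
    if vibration_mm_s is None or vibration_mm_s < 0:
        return True
    return vibration_mm_s >= threshold


# (scenario name, input reading, expected alarm state)
SCENARIOS = [
    ("nominal operation", 2.0, False),
    ("just below threshold", 6.99, False),
    ("exactly at threshold", 7.0, True),
    ("severe vibration", 25.0, True),
    ("sensor dropout", None, True),     # edge case
    ("negative reading", -1.0, True),   # edge case
]


def run_scenarios(model, scenarios):
    """Return a list of (name, reading, expected, actual) for each failure."""
    failures = []
    for name, reading, expected in scenarios:
        actual = model(reading)
        if actual != expected:
            failures.append((name, reading, expected, actual))
    return failures


failures = run_scenarios(wear_alarm, SCENARIOS)
accuracy = 1 - len(failures) / len(SCENARIOS)
print(f"{len(SCENARIOS) - len(failures)}/{len(SCENARIOS)} scenarios passed")
for failure in failures:
    print("FAILED:", failure)
```

Listing edge cases as named rows makes gaps visible: a scenario nobody thought to write down is a scenario the model has not been shown to handle.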

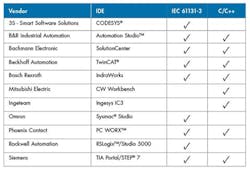

Deployment. Once the model is ready to deploy, the next step is to get it into the language of the target platform. For this, engineers typically need to share a model that is ready to go. This lets the model fit into the designated control-hardware environment such as embedded controllers, PLCs, or edge devices. Flexible tools such as MATLAB can usually generate the final code for the target, offering engineers the ability to deploy a model across many different environments from various hardware vendors. They can do this without the extra work of rewriting the original code.

For example, when deploying a model directly to a PLC, automatic code generation eliminates coding errors that might be introduced during manual programming. It also provides optimized C/C++ or IEC 61131 code that will run efficiently on PLCs from major vendors.
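The principle behind automatic code generation can be shown with a toy example. The Python sketch below emits a C function directly from a model's parameters, so nobody retypes coefficients by hand. It does not represent how MATLAB's code generators work. The function name `generate_c_function`, the linear model form and the coefficient values are hypothetical.

```python
def generate_c_function(name, weights, bias):
    """Return C source for y = bias + sum(w_i * x_i).

    Coefficients are written straight from the model parameters, which
    removes the transcription step where manual coding errors creep in.
    """
    terms = [f"{float(bias)!r}f"]
    terms += [f"{float(w)!r}f * x[{i}]" for i, w in enumerate(weights)]
    body = " + ".join(terms)
    return (
        "/* Generated from model parameters; do not edit by hand. */\n"
        f"float {name}(const float x[{len(weights)}]) {{\n"
        f"    return {body};\n"
        "}\n"
    )


# Placeholder coefficients for illustration only.
source = generate_c_function("wear_score", weights=[0.5, -0.25], bias=1.0)
print(source)
```

When the model is retrained, regenerating the source keeps the deployed code in step with it, which is the main advantage over maintaining a hand-written port.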

Successfully deploying AI doesn’t require a data scientist or AI expert. However, there are critical resources that can help prepare engineers and their AI models for success. These include specific tools made for scientists and engineers, apps and functions to add AI into the workflow, various deployment options for nonstop operational use, and accessible experts ready to answer AI-related questions. Giving engineers the right resources to help successfully add AI will let them deliver the best results.

Philipp Wallner is the industry manager for industrial automation and machinery at MathWorks.
