10 Considerations for Designing a Machine Vision System

Machine vision systems serve a vast range of industries and markets. They are used in factories, laboratories, studios, hospitals and inspection stations all over the world—and even on other planets. But how do you design one?

When designing a machine vision system there are many factors that can affect the overall performance. Many of these elements are integral to the camera choice, but there are additional external factors that can have a significant impact on the final image. This article will explore 10 of these considerations and what to look out for when painting the full picture that makes up a vision system.

1. Environment

Images are captured in every corner of the world. Security cameras are a common sight in corporate and residential buildings, toll booths on the road often contain embedded imaging systems, and small board-level camera modules fly aboard aerial imaging drones.

The range of environments that require reliable imaging solutions is broad, and while these systems are often generalized as machine vision systems, it’s clear that imaging solutions extend well beyond factory floor applications.

The conditions a vision system operates within determine many of the specifications necessary to deliver the required image. These include weather conditions such as direct sunlight, rain, snow and heat, along with other external factors that are outside our control. However, a vision system can be designed with these conditions in mind: additional lighting can be included, and adequate housing can protect the camera and its sensor from harsh weather. In short, systems can be adapted so that the camera consistently captures a clear image.

2. Sensor

When deciding on a camera for a vision system, most of its performance resides with the image sensor. Understanding what a camera is capable of fundamentally comes down to the type of sensor being used. On the other hand, two different cameras with the same sensor will not necessarily output the same image. In fact, they will most likely show some noticeable differences, which is why the rest of these considerations are also important.

The format of the sensor will decide a lot about the matching optics and how the images will look. Formats abound, but some common ones include APS-C, 1.1-in., 1-in. and 2/3-in. When using a larger sensor size, a vision system can often benefit from more pixels, resulting in a higher resolution image. However, there are several other specifications that are equally important. Details such as full well capacity, quantum efficiency and shutter type all play a part in how the sensor can deal with various targets in unique situations.


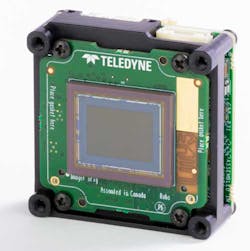

A Teledyne Lumenera Board-Level Camera with a 1.1-in. sensor.

3. Lens

After deciding on the internal aspects of a camera, a vision system needs a lens to focus on its target. The size of the camera, and of the lens it needs, varies with the application. Larger systems may require a zoom lens, depending on the target image. In many machine vision systems, however, the camera is locked on a specific target area and uses a prime lens with a fixed focal length.

Each lens has a specific mounting system based on the manufacturer and the sensor it will be attached to. Common lens mounts for machine vision include C-mount, CS-mount and M42-mount. Therefore, before choosing a lens, the first step is to review required sensor specifications.

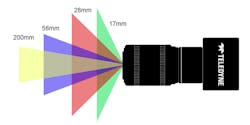

The main specification for a lens is the focal length. As focal length decreases, the field of view (FoV) increases. A shorter focal length therefore lets the lens capture a larger area, while the magnification of each element in the scene decreases. Other specifications are also valuable to consider, such as working distance and aperture.


A camera lens showing the difference in field of view based on the focal length.
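
The relationship between focal length and field of view described above can be sketched with the standard thin-lens approximation. The function names and the sample sensor width and working distance below are illustrative assumptions, not values from any particular camera or lens datasheet, and the linear estimate only holds when the working distance is much larger than the focal length.

```python
import math


def angular_fov_deg(sensor_dim_mm: float, focal_length_mm: float) -> float:
    """Angular field of view (degrees) along one sensor dimension.

    Thin-lens approximation with the lens focused at infinity.
    """
    return math.degrees(2 * math.atan(sensor_dim_mm / (2 * focal_length_mm)))


def linear_fov_mm(sensor_dim_mm: float, focal_length_mm: float,
                  working_distance_mm: float) -> float:
    """Approximate scene width covered at a given working distance.

    Valid when the working distance is much larger than the focal length.
    """
    return sensor_dim_mm * working_distance_mm / focal_length_mm


if __name__ == "__main__":
    sensor_width_mm = 8.8       # assumed width, roughly a 2/3-in. format sensor
    working_distance_mm = 500   # assumed distance from lens to target
    for focal_length_mm in (8, 16, 25, 50):
        angle = angular_fov_deg(sensor_width_mm, focal_length_mm)
        width = linear_fov_mm(sensor_width_mm, focal_length_mm, working_distance_mm)
        print(f"f = {focal_length_mm:>2} mm: {angle:5.1f} deg, about {width:6.1f} mm wide")
```

Running the loop shows the field of view shrinking as the focal length grows, which is the trade-off between coverage and magnification.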

4. Lighting

Arguably the most important piece of a vision system is the lighting. This is because no matter how sensitive a camera sensor is in low light, there is no substitute for the clarity obtained from a well-illuminated target. Lighting can also take many forms that can help reveal new information about a target.

Area lights are a more general-purpose solution for even distribution, so long as the target is a good distance from the source to prevent hot spots from occurring. Ring lights are useful when dealing with highly reflective surfaces since they are able to reduce reflections. Other lights include dome lights for machined metals and structured light for 3D object mapping; even introducing colored light can add details and increase contrast.

5. Filters

If there is excess unwanted light passing through the lens, it can reduce important detail. There are many kinds of filters that can be used to reduce and remove certain light. The two main kinds of color filters are dichroic and absorptive. The main difference between these is that dichroic filters are designed to reflect undesired wavelengths while absorptive filters absorb extra wavelengths to only transmit the ones required.

Filtering out color is not the only use for filters. Neutral Density (ND) filters reduce the overall light levels, whereas polarizers remove polarized light, which reduces reflected light. Antireflective (AR) coatings help reduce reflection within the vision system. This is particularly useful for applications such as intelligent traffic systems (ITS) where a reduction in glare can increase the accuracy of optical character recognition (OCR) software.

6. Frame Rate

The speed of a camera is measured in frames per second (fps). A camera with a higher frame rate can capture more images in a given time. Frame rate also affects each individual image, because the exposure time cannot exceed the frame period: as the frame rate increases, the maximum exposure time decreases. Shorter exposures produce less blur when the camera captures fast-moving targets such as objects on a conveyor belt. The drawback of a short exposure is that the sensor has less time to collect light during each shot. In these cases, a sensor with a larger pixel size often helps increase the overall brightness of each image.
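
As a rough illustration of the link between frame rate, exposure time and blur, the sketch below estimates how many pixels a moving target smears across during one exposure. The function names and the conveyor numbers are hypothetical, and the model ignores sensor readout overhead and shutter type.

```python
def max_exposure_s(fps: float) -> float:
    """Upper bound on exposure time: one frame period (readout overhead ignored)."""
    return 1.0 / fps


def motion_blur_px(speed_mm_s: float, exposure_s: float,
                   fov_mm: float, pixels_across: int) -> float:
    """Blur in pixels: distance moved during the exposure divided by
    the size of one pixel projected onto the scene."""
    mm_per_pixel = fov_mm / pixels_across
    return speed_mm_s * exposure_s / mm_per_pixel


if __name__ == "__main__":
    # Hypothetical conveyor: 500 mm/s, 200 mm field of view, 2000 pixels across.
    for fps in (30, 100, 1000):
        exposure = max_exposure_s(fps)
        blur = motion_blur_px(500, exposure, 200, 2000)
        print(f"{fps:>4} fps: exposure <= {exposure * 1000:.2f} ms, blur up to {blur:.1f} px")
```

In practice the exposure is usually set well below the frame period, so the frame rate only fixes the ceiling.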

7. Noise and Gain

When a high frame rate is a must and short exposure cannot be avoided, the camera gain can potentially make up for the reduced brightness. Gain cannot be the easy solution for all lighting challenges, however, because of the noise it brings with it. Gain amplifies the signal coming from the sensor, and the noise is amplified along with it, which reduces the clarity of the image. This means the vision system can produce a brighter image with less light, but at the cost of more visible read noise and dark current noise.
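
A simplified noise model can make this trade-off concrete. The sketch below assumes shot noise, dark current and read noise add in quadrature, and that gain multiplies signal and noise by the same factor; real sensors are more nuanced, and all names and electron counts here are illustrative assumptions.

```python
import math


def db_to_linear(gain_db: float) -> float:
    """Convert a gain in decibels to a linear amplitude factor."""
    return 10 ** (gain_db / 20)


def total_noise_e(signal_e: float, read_noise_e: float, dark_e: float = 0.0) -> float:
    """Noise in electrons: shot noise, dark current and read noise in quadrature."""
    return math.sqrt(signal_e + dark_e + read_noise_e ** 2)


def amplify(signal_e: float, noise_e: float, gain_db: float) -> tuple[float, float]:
    """Simplified assumption: gain scales signal and noise by the same factor."""
    factor = db_to_linear(gain_db)
    return signal_e * factor, noise_e * factor


if __name__ == "__main__":
    read_noise = 5.0  # assumed read noise in electrons
    # Well-lit exposure: 10,000 electrons collected, no gain.
    bright_signal = 10_000.0
    bright_noise = total_noise_e(bright_signal, read_noise)
    # Short exposure: 1,000 electrons, then 20 dB (10x) gain to match brightness.
    dim_signal, dim_noise = amplify(1_000.0, total_noise_e(1_000.0, read_noise), 20.0)
    print(f"bright: level {bright_signal:.0f}, SNR {bright_signal / bright_noise:.1f}")
    print(f"gained: level {dim_signal:.0f}, SNR {dim_signal / dim_noise:.1f}")
```

Both images reach the same brightness level, but under this model the gained image keeps the lower signal-to-noise ratio of the short exposure.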

8. Bit Depth and Dynamic Range

To accurately measure certain targets, a vision system needs a high enough bit depth. The higher the bit depth, the more intensity levels each pixel can record, and the finer the differences between pixels that can be distinguished. Dynamic range, on the other hand, represents the ability of a camera to make out details from the brightest sections of the image to the darkest.

In outdoor applications more than 8 bits is rarely needed unless there is a need for high-precision measurement such as photogrammetry. However, outdoor imaging can benefit greatly from a high dynamic range, which captures data in brightly lit areas such as the sky, often overexposed in images, while also capturing detail in the shadows of a target. One possible solution could be to increase the gain or exposure time, but this would recover detail in the shadows only at the expense of data in sections that are already bright. A high dynamic range can ensure that there is clarity in each part of the image.
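
The difference between bit depth and dynamic range can be put into numbers. The sketch below uses one common definition of sensor dynamic range, the ratio of full well capacity to read noise; the function names and the sample electron counts are assumptions for illustration only.

```python
import math


def gray_levels(bit_depth: int) -> int:
    """Number of distinct intensity levels a pixel value can take."""
    return 2 ** bit_depth


def dynamic_range_db(full_well_e: float, read_noise_e: float) -> float:
    """Dynamic range in decibels: largest signal over the noise floor."""
    return 20 * math.log10(full_well_e / read_noise_e)


def dynamic_range_stops(full_well_e: float, read_noise_e: float) -> float:
    """The same ratio expressed in stops (doublings of light)."""
    return math.log2(full_well_e / read_noise_e)


if __name__ == "__main__":
    for bits in (8, 10, 12):
        print(f"{bits}-bit output: {gray_levels(bits)} levels")
    # Assumed sensor: 10,000 e- full well capacity, 2.5 e- read noise.
    print(f"dynamic range: {dynamic_range_db(10_000, 2.5):.1f} dB, "
          f"{dynamic_range_stops(10_000, 2.5):.1f} stops")
```

A useful rule of thumb follows from the second function: the output needs roughly as many bits as the sensor has stops of dynamic range, or the extra range is lost in quantization.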

9. Software

Even with high-end hardware, the camera can only do what the software demands. The two fundamental software components are image acquisition and control software and image processing software. Image data comes first from the image acquisition and control software, which takes raw data from the camera and interprets it for the end user. A common example is color imaging: when a color camera takes an image, the light reaching the pixels passes through a physical Bayer filter, and the software then uses that data to construct a color image.
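
To show what constructing a color image from Bayer data involves, here is a deliberately minimal sketch. It assumes an RGGB pattern (the actual order depends on the camera) and collapses each 2x2 cell into one color pixel at half resolution. Acquisition software typically interpolates at full resolution instead, so this illustrates the idea rather than any vendor's method.

```python
def demosaic_rggb_half(raw: list[list[float]]) -> list[list[tuple[float, float, float]]]:
    """Collapse each 2x2 RGGB cell of a raw frame into one (R, G, B) pixel.

    Assumed cell layout:  R G
                          G B
    The two green samples in each cell are averaged.
    """
    height, width = len(raw), len(raw[0])
    if height % 2 or width % 2:
        raise ValueError("raw frame must have even width and height")
    rgb = []
    for y in range(0, height, 2):
        row = []
        for x in range(0, width, 2):
            red = raw[y][x]
            green = (raw[y][x + 1] + raw[y + 1][x]) / 2
            blue = raw[y + 1][x + 1]
            row.append((red, green, blue))
        rgb.append(row)
    return rgb


if __name__ == "__main__":
    # Hypothetical 2x4 raw frame: two RGGB cells side by side.
    raw_frame = [
        [10, 20, 30, 40],
        [50, 60, 70, 80],
    ]
    print(demosaic_rggb_half(raw_frame))
```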

The next stage in the software chain determines what is done with the image data. For machine vision this can involve tasks such as inspection, analysis and editing, for example in quality control, where a target passes by the camera and needs to be tested.

10. Interface

As camera technology advances and produces ever larger amounts of image data, it is important to have methods for delivering that data. Camera interfaces have branched out in several ways to provide a range of options for any imaging application. The four most common solutions are USB3, GigE, CoaXPress (CXP) and Camera Link High Speed (CLHS). The main attributes to consider when looking into a vision system interface are the required bandwidth, synchronization, ease of deployment and cable length.
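
Required bandwidth is the easiest of these attributes to estimate up front. The sketch below computes the raw data rate of an uncompressed video stream and checks it against a table of link capacities. The function names are hypothetical, and the two capacities shown are nominal line rates used only for illustration; usable throughput is lower once protocol overhead is included, so real figures should come from the interface standard or the camera datasheet.

```python
def required_bandwidth_mbps(width: int, height: int,
                            bits_per_pixel: int, fps: float) -> float:
    """Raw image payload in megabits per second (no protocol overhead)."""
    return width * height * bits_per_pixel * fps / 1e6


def interfaces_that_fit(required_mbps: float,
                        link_capacity_mbps: dict[str, float]) -> list[str]:
    """Names of the interfaces whose capacity covers the required rate."""
    return [name for name, capacity in link_capacity_mbps.items()
            if capacity >= required_mbps]


if __name__ == "__main__":
    # Nominal line rates, assumed here for illustration only.
    nominal_links = {"GigE": 1_000, "USB3": 5_000}
    # Hypothetical camera: 1920 x 1200 pixels, 8-bit mono, 60 fps.
    needed = required_bandwidth_mbps(1920, 1200, 8, 60)
    print(f"required: {needed:.2f} Mbit/s")
    print("candidates:", interfaces_that_fit(needed, nominal_links))
```

Entries for CXP and CLHS can be added to the dictionary in the same way once the link configuration is known.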


A Teledyne DALSA Genie Nano with a GigE interface.

Putting it All Together

There are certainly many considerations involved when building a machine vision system, which is why many companies turn to systems integrators to help them with this task. Systems integrators, in turn, rely on high-performance OEM components that deliver the results. The key is to define what you need your vision system to do, and then identify the elements of the system that can produce the desired results.

Filip Szymanski, a technical content specialist for Teledyne Vision Solutions, is one of Teledyne’s imaging experts whose technology perspective comes from his degree in photonics and laser technology. He has years of experience in the machine vision industry working with cameras, sensors and embedded systems.