Making Conversation: Using AI to Extract Intel from Industrial Machinery and Equipment

What if your machine could talk? This is the question Ron Di Carlantonio has grappled with since he founded iNAGO in 1998.

Back in 2000, the Tokyo/Toronto-based computer scientist launched netpeople, a platform that allows companies to create intelligent assistants for their products.

For a simple description of how netpeople functions, Di Carlantonio invites you to recall KITT, the talking, bulletproof car in “Knight Rider” (either the 1982 or the 2008 version will do), which can think, learn, communicate and interact with humans.

The platform, explained Di Carlantonio, is an “advanced intuitive conversational assistant” for automotive, consumer electronics and AI-driven manufacturing solutions.

A Talking Car

The brief to collaborating partners in the Canadian ecosystem (including Geotab, Denso, Aisin, ABC Technologies and Vehiqilla) was to create a cockpit for a concept vehicle named Project Arrow. iNAGO would leverage its netpeople assistant platform and the company’s conversational AI technology to enable a natural interface to everything in the vehicle.

Rather than being developed for series production, however, the vehicle was earmarked to attract OEM contracts. More than 50 suppliers teamed up for the largest industrial collaboration in Canadian history, and following its launch earlier this year, the prototype now tours auto shows and makes the rounds at manufacturing trade shows.

“We created this open platform for everybody to work on and can create new innovations,” Di Carlantonio said. “Project Arrow became a concept of an intelligent cockpit in the vehicle, driven by an intelligent assistant and a slew of other technologies working together.”

The use of AI-based solutions in the automotive industry stretches across the lifecycle of a vehicle, from design and manufacturing to sales and aftermarket care. AI-powered chatbots, in particular, deliver instant, personalized virtual driver assistance, are on call 24/7 and can evolve with the preferences of tech-savvy drivers.

Di Carlantonio now sees an opportunity to extend the use of the intelligent assistant platform to the smart factory by making industrial equipment—CNC machines, presses, conveyors, industrial robots—talk.

Intelligent Assistants

Before OpenAI’s large language model (LLM)-based chatbot ChatGPT became a sensation in 2022, there were plenty of earlier conversational systems, from voice-activated command systems to text-based chatbots. ELIZA, a natural language processing program written in the 1960s, is widely regarded as the first chatbot. Others followed, “including the chatbots on your bank site that don’t answer anything,” said Di Carlantonio.

Building on what came before, iNAGO’s mission is to bring an intelligent, conversational assistant to market—one that captures contextual data and information that enables the use of smart components, navigation systems and troubleshooting services.

iNAGO’s part in the communication stream is to make sense of the data in the context of what is being said. When humans process communication, we first have to understand what the audio is. “In other words, I can hear you through my ear and that becomes text,” explained Di Carlantonio. “That text gathers a meaning. We understand what the meaning of that text is, and then we go looking in our brain for some knowledge, and we pull that out. And then we decide how to answer.”

iNAGO does not develop speech recognition (the ears), Di Carlantonio clarified. That part is often done by companies like Google, Nuance, Cerence and others. Instead, the patent-pending solution comes into play after the speech has been recognized. It analyzes the text, makes sense of it and then determines what the correct response is. “We then provide tools to allow anybody—non-programmers—to be able to create that knowledge and create an experience,” he added.
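To make that division of labor concrete, here is a minimal, hypothetical sketch of the stage that begins after speech recognition: it takes the recognized text, maps it to a meaning (here a crude keyword-overlap “intent”), pulls an answer from a knowledge base that a non-programmer could fill in, and decides how to respond. None of the names below (`KnowledgeBase`, `understand`, `respond`, the intents and answers) come from iNAGO or netpeople; the keyword matching is an assumption standing in for far more capable natural language understanding.

```python
from __future__ import annotations

import string
from dataclasses import dataclass, field


@dataclass
class KnowledgeBase:
    """Hypothetical knowledge store that a non-programmer could fill in."""
    answers: dict[str, str] = field(default_factory=dict)        # intent -> answer text
    keywords: dict[str, set[str]] = field(default_factory=dict)  # intent -> trigger words


def tokenize(text: str) -> set[str]:
    """Lowercase the recognized text and strip ASCII punctuation."""
    return set(text.lower().translate(str.maketrans("", "", string.punctuation)).split())


def understand(text: str, kb: KnowledgeBase) -> str | None:
    """Pick the intent whose keywords overlap most with the text (a stand-in for real NLU)."""
    words = tokenize(text)
    best_intent, best_score = None, 0
    for intent, keys in kb.keywords.items():
        score = len(words & keys)
        if score > best_score:
            best_intent, best_score = intent, score
    return best_intent


def respond(text: str, kb: KnowledgeBase) -> str:
    """Decide how to answer: use stored knowledge, or admit the gap."""
    intent = understand(text, kb)
    if intent is None:
        return "Sorry, I don't know about that yet."
    return kb.answers[intent]


# Usage: the question below is what a speech recognizer would hand over as text.
kb = KnowledgeBase(
    answers={
        "coolant_low": "Check the coolant reservoir and top it up before restarting.",
        "door_open": "Close the safety door; the cycle cannot start while it is open.",
    },
    keywords={
        "coolant_low": {"coolant", "low", "reservoir"},
        "door_open": {"door", "open", "interlock"},
    },
)
print(respond("How do I fix a low coolant warning?", kb))
```

The point of the split is that the speech recognizer (the “ears”) is interchangeable; everything after it operates on plain text and on knowledge that can be edited without touching the code.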

Context-Aware Natural Language Understanding

To appreciate iNAGO’s unique capability to comprehend the context of a prompt, consider asking Alexa or Google a question. Unless the question is phrased as a single statement, the response is generally limited, and you cannot ask a follow-up question or add more information. Doing that, explained Di Carlantonio, requires understanding the context of the conversation. In computer science terms, the neural network architectures involved aim to solve sequence-to-sequence tasks while handling long-range dependencies across the conversation. Few systems have done this well; ChatGPT, however, is showing some success.

To say the technology is “context aware,” explained Di Carlantonio, is to assert it understands all of the things being talked about (the conversational context) within the setting or environment. “If you were in a car, and you’re going 150 km an hour, and the person next to you says, ‘what are you doing?’, it has a very specific meaning because of the context, not just because of the words,” he explained. “What we’ve tried to do is develop technologies that will understand the context and help better bridge that gap when conversing with a human.”
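A minimal, hypothetical sketch of why context matters for follow-up questions: the assistant below keeps a small conversation state (the last machine and the last reading mentioned), so a question such as “And its temperature?” can be answered even though it never names a machine. The machine names, readings and `ConversationContext` class are invented for illustration, and simple substring matching stands in for the much richer context modeling a production system would need.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical readings keyed by (machine, metric).
READINGS = {
    ("press 2", "temperature"): "68 C",
    ("press 2", "status"): "running",
    ("lathe 1", "temperature"): "41 C",
    ("lathe 1", "status"): "idle",
}
MACHINES = ("press 2", "lathe 1")
METRICS = ("temperature", "status")


@dataclass
class ConversationContext:
    machine: str | None = None  # last machine the user referred to
    metric: str | None = None   # last reading the user asked about


def answer(question: str, ctx: ConversationContext) -> str:
    q = question.lower()
    # Anything named explicitly in this turn overrides the remembered context.
    for machine in MACHINES:
        if machine in q:
            ctx.machine = machine
    for metric in METRICS:
        if metric in q:
            ctx.metric = metric
    # Whatever the user left unsaid is filled in from earlier turns.
    if ctx.machine is None or ctx.metric is None:
        return "Which machine and which reading do you mean?"
    return f"The {ctx.metric} of {ctx.machine} is {READINGS[(ctx.machine, ctx.metric)]}."


ctx = ConversationContext()
print(answer("What is the status of press 2?", ctx))  # The status of press 2 is running.
print(answer("And its temperature?", ctx))            # The temperature of press 2 is 68 C.
print(answer("What about lathe 1?", ctx))             # The temperature of lathe 1 is 41 C.
```

A stateless assistant would fail on the second and third questions; carrying even this much context is what makes a follow-up possible.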

A robotic arm picking and placing a product, or a lathe cutting a part, has a very specific function, so when a conversational interface is added, getting the context right has significant implications. “Let’s just say it’s one level more serious than a chatbot on a bank site,” said Di Carlantonio. “If the chatbot on a bank site doesn’t get it right, you go to a call center. If a robot doesn’t get it right, you could cut somebody’s arm off. So, the safety element is critical and communication has to be accurate, and has the obligation to be both valuable and safe. The level of communication is much, much higher, and much more complex.”

AI Opportunities in Manufacturing

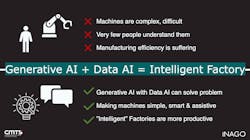

Manufacturing has historically focused on hardware and, relative to other industries, has a long way to go to keep pace with state-of-the-art software, said Di Carlantonio.

Over the past couple of years, iNAGO has worked on ways to install its solution in machines. The experience has revealed a number of opportunities, he noted. Firstly, although the displays on industrial machines are ruggedized, they limit who can program the machine or understand the data shown on them, he said. No-code/low-code capabilities make it possible to update solutions so that they are better aligned with the changing needs of the workforce.

“Today, you have one person—the expert—who programs it. You’re basically tied to what he can do or she can do, and you’re limited,” Di Carlantonio pointed out. “What if everybody on the plant floor could tell the machine what to do, and what if a designer could communicate with the machine to tell it the part it wants to make, and it could be conversational?”

Secondly, interpreting the sheer amount of data a machine generates is a burden for the average person. “It’s just too complex,” he said. AI can gather all of that data almost instantly and process it to identify patterns and potential improvements.

Thirdly, despite the wealth of knowledge that people in plants hold about their machines, the industry faces one reality: “People are older and they’re retiring, so no more experts,” pointed out Di Carlantonio. “The person leaves and all that knowledge leaves the organization. So how can we retain that knowledge and make it available to everybody, even if they just started last week? That’s an opportunity I think this technology can solve.”

Undocumented Gap Between Machine Makers and Machine Operators

iNAGO’s work with its R&D partners revealed there is a gap between makers of machines and users at the plant. The designers and machine makers don’t know what is happening with a machine at the plant level, and the plant is very busy and doesn’t have time to gather information, said Di Carlantonio. This exposes an inefficiency in developing solutions that keep pace with production and operational needs.

“Basically, the plant knows the capabilities, but they have no idea what a user would do with a machine, other than what the machine maker has said it could do,” Di Carlantonio clarified.

Di Carlantonio characterized the work of figuring out how best to enable machines to “talk” as a “discovery phase,” in which the company and its partners are learning the optimal ways to give people the means to interact with machines and to let manufacturers capture and understand what’s going on at the plant level.

“The work we’re doing right now is, how do we take that knowledge or information we know about machines and put it in these AI models that allow people to interact with them in a natural way?” he asked.

This phase will also involve learning about the problems users encounter with machines that are not covered in the operating manuals, as well as simplifying programming so that it becomes accessible to a larger group of users.

Natural Language Understanding

For iNAGO, the ongoing focus will be to work through the challenges of turning information into an AI solution that users can interact with in a natural way. “That’s a challenge we’ve been trying to solve for six years, so we are very close to that challenge,” Di Carlantonio said. “We can take a manual or a specification, and using AI, we can convert it into something that you can just ask questions and get information.”
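As a rough illustration of the simplest possible version of turning a manual into something you can ask questions of, the sketch below scores each section of a hypothetical manual against a question using bag-of-words cosine similarity and returns the best-matching section. This is a plain retrieval baseline chosen for clarity, not iNAGO’s method; the `MANUAL` contents and the `ask` function are invented, and modern systems typically use learned embeddings plus a language model to compose the answer.

```python
from __future__ import annotations

import math
import re
from collections import Counter

# Hypothetical manual: section title -> section text.
MANUAL = {
    "Changing the cutting tool": "Stop the spindle, open the guard and release the tool holder before removing the tool.",
    "Coolant system": "Check the coolant level daily and refill the reservoir when the low coolant lamp is lit.",
    "Emergency stop": "Press the red emergency stop button to halt all axes immediately.",
}


def bag_of_words(text: str) -> Counter:
    """Count lowercase alphanumeric tokens."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def ask(question: str, manual: dict[str, str]) -> tuple[str, str] | None:
    """Return (title, text) of the best-matching section, or None if nothing overlaps."""
    q = bag_of_words(question)
    scored = [(cosine(q, bag_of_words(f"{title} {body}")), title) for title, body in manual.items()]
    score, title = max(scored)
    return (title, manual[title]) if score > 0 else None


print(ask("How do I refill the coolant?", MANUAL))
```

Retrieval like this only finds what is already written down, which is why capturing the undocumented knowledge experts carry in their heads remains a separate problem.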

Another challenge is that experts on the plant floor “hold a lot of information in their heads and they don’t have it down on paper,” he said. “Or, if they do, it’s not the easiest to understand a document. So how do we get those people to share in this and be involved, and how can we take their knowledge into the AI solution?”

Finally, there is the unresolved question of the business model: “Who’s going to pay?”

In the case of ChatGPT, Microsoft’s first investment in OpenAI was a billion dollars, Di Carlantonio pointed out. “Well, nobody in manufacturing is going to invest a billion dollars to do this kind of thing. We need to gradually bring it in. And we need to figure out the right business model that’s a win for everybody.”

To this end, Di Carlantonio is working with companies to bring his solution to fruition: “We believe the people who will pay will be at the plant level. So, at the very end [a] customer would pay for a service to improve their productivity and efficiency. And probably companies like us, the tech companies at the end, will be at the very bottom, but we will be providing that technology to allow the current manufacturers to incorporate the solution into their technology, and then, provide it as a service to the end customer. So that is the challenge as we get through every stage and show the ROI.”

About the Author

Rehana Begg

Editor-in-Chief, Machine Design

As Machine Design’s content lead, Rehana Begg is tasked with elevating the voice of the design and multi-disciplinary engineer in the face of digital transformation and engineering innovation. Begg has more than 24 years of editorial experience and has spent the past decade in the trenches of industrial manufacturing, focusing on new technologies, manufacturing innovation and business. Her B2B career has taken her from corporate boardrooms to plant floors and underground mining stopes, covering everything from automation & IIoT, robotics, mechanical design and additive manufacturing to plant operations, maintenance, reliability and continuous improvement. Begg holds an MBA, a Master of Journalism degree, and a BA (Hons.) in Political Science. She is committed to lifelong learning and feeds her passion for innovation in publishing, transparent science and clear communication by attending relevant conferences and seminars/workshops.

Follow Rehana Begg via the following social media handles:

X: @rehanabegg

LinkedIn: @rehanabegg and @MachineDesign